
CentOS 7: Installing OpenStack Mitaka (complete, up-to-date walkthrough with detailed screenshots and steps; Cinder and VXLAN added)


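Lab environment preparation:

To get a realistic distributed Mitaka deployment, I used VMware Workstation to create three virtual machines that act as the OpenStack controller, compute, and cinder (block storage) nodes. (My host machine has a 500 GB disk, 16 GB of RAM, and an Intel i5 CPU. If you can, give the VMs more memory: the 2 GB I allocated at first was painfully slow.)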

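Note: to run KVM instances, CPU virtualization must be enabled for the VM in VMware before the VM is started. After it boots, check with:

egrep -c '(vmx|svm)' /proc/cpuinfo

A result of 0 means virtualization is not exposed to the VM. How to enable it in VMware: in the VM's settings, open Processors and tick "Virtualize Intel VT-x/EPT or AMD-V/RVI".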
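The check above can be wrapped in a small helper that also says what to do when the count is zero. This is a sketch; the function name check_virt is hypothetical. If the VM exposes no vmx/svm flag, the fallback described in the official Mitaka guide is to set virt_type = qemu in the [libvirt] section of /etc/nova/nova.conf on the compute node, which works but is much slower than KVM.

```bash
#!/usr/bin/env bash
# check_virt (hypothetical helper): count the cpuinfo lines that mention
# vmx (Intel VT-x) or svm (AMD-V). Takes a cpuinfo-style file, default /proc/cpuinfo.
check_virt() {
    local cpuinfo="${1:-/proc/cpuinfo}" count
    # grep -c prints 0 (and exits 1) when nothing matches, so only the output is used.
    count=$(grep -E -c '(vmx|svm)' "$cpuinfo")
    if [ "${count:-0}" -gt 0 ]; then
        echo "hardware virtualization available ($count logical CPUs report vmx/svm)"
    else
        echo "no vmx/svm flag: enable VT-x/AMD-V for this VM or use virt_type = qemu" >&2
        return 1
    fi
}
```

Run check_virt on the compute node in particular, since that is where the instances actually execute.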

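Node specifications:

Controller node: 2 CPUs + 4 GB RAM + 50 GB disk + 2 NICs (192.168.1.182 bridged + 192.168.8.182 NAT)

Compute node: 2 CPUs + 4 GB RAM + 50 GB disk + 2 NICs (192.168.1.183 bridged + 192.168.8.183 NAT)

Cinder node: 1 CPU + 1 GB RAM + 10 GB disk + 20 GB disk + 2 NICs (192.168.1.184 bridged + 192.168.8.184 NAT). Add the 20 GB disk after the OS is installed and do not let the installer put it into LVM; if you partition manually, you can attach it during installation. The disk Cinder uses must be completely clean, otherwise you will get errors (I spent half a day on this before I figured it out).

Now let's begin: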

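I. Base environment: install and configure on all nodes

After the OS is installed, on every node disable the firewall and SELinux, and set the root password:

systemctl stop firewalld.service
systemctl disable firewalld.service

vi /etc/sysconfig/selinux and change SELINUX=enforcing to SELINUX=disabled (this takes effect after a reboot; setenforce 0 switches to permissive mode immediately).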
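Editing the file by hand works; a scripted version is sketched below (the function name is hypothetical). One detail worth knowing, which is standard GNU sed behaviour: on CentOS 7, /etc/sysconfig/selinux is a symlink to /etc/selinux/config, and a plain sed -i replaces the symlink with a regular file, leaving the real config unchanged. --follow-symlinks edits the link target instead.

```bash
#!/usr/bin/env bash
# disable_selinux_config (hypothetical helper): set SELINUX=disabled in the given file.
# --follow-symlinks (GNU sed) edits the symlink target instead of replacing the link.
# The pattern needs "SELINUX=" so the SELINUXTYPE= line is left alone.
disable_selinux_config() {
    local cfg="${1:-/etc/sysconfig/selinux}"
    sed -i --follow-symlinks 's/^SELINUX=.*/SELINUX=disabled/' "$cfg"
}
```

Typical use on each node: disable_selinux_config && setenforce 0.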

Root password: I set it to adm*123 on every node. To keep things simple and avoid mistakes, I also use adm*123 later as the password for the database and for keystone, glance, rabbitmq, nova, and cinder.

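Set up hostname resolution on all nodes.

Set the hostnames:

Controller node: hostnamectl set-hostname controller

Compute node: hostnamectl set-hostname compute

Cinder node: hostnamectl set-hostname cinder

Log in to each node and add the hostnames to /etc/hosts (vi /etc/hosts). With the bridged addresses above, the entries are:

192.168.1.182 controller
192.168.1.183 compute
192.168.1.184 cinder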
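To make this step repeatable on all three nodes, a small function can append the entries only when a hostname is not already present. A sketch; the function name is hypothetical and the addresses are the bridged ones from the node list.

```bash
#!/usr/bin/env bash
# add_hosts_entries (hypothetical helper): append the lab's host entries to a hosts file,
# skipping any hostname that already appears, so running it twice changes nothing.
add_hosts_entries() {
    local hosts_file="${1:-/etc/hosts}" entry ip name
    for entry in "192.168.1.182 controller" "192.168.1.183 compute" "192.168.1.184 cinder"; do
        ip=${entry% *}
        name=${entry#* }
        # Match the name as a whole word after whitespace (end of line or more aliases after it).
        if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$hosts_file"; then
            printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
        fi
    done
}
```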

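3. Time synchronization

On the controller node:

yum install chrony -y

Configure the time source: vi /etc/chrony.conf and add the lines below. The allow line lets the other nodes on 192.168.1.0/24 synchronize from the controller; without it chronyd does not serve them:

server time.windows.com iburst
allow 192.168.1.0/24

Enable chronyd at boot and start it:

systemctl enable chronyd.service
systemctl start chronyd.service

On the compute and cinder nodes:

yum install chrony -y

vi /etc/chrony.conf, comment out the existing server lines, and add:

server controller iburst

Enable chronyd at boot and start it:

systemctl enable chronyd.service
systemctl start chronyd.service

On any node, chronyc sources shows whether synchronization is working.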
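The chrony edits differ only by node role, so they can be scripted. A sketch with a hypothetical function name: it comments out the existing server lines (the official Mitaka guide asks for this on the non-controller nodes; here it is done on the controller too, since it uses time.windows.com) and appends the role's lines. Restart chronyd afterwards.

```bash
#!/usr/bin/env bash
# configure_chrony ROLE [FILE] (hypothetical helper): ROLE is "controller" or any
# other value for a client node (compute, cinder).
configure_chrony() {
    local role="$1" conf="${2:-/etc/chrony.conf}"
    # Comment out the existing "server ..." lines so only the source added below is used.
    sed -i 's/^server /#server /' "$conf"
    if [ "$role" = controller ]; then
        # Upstream source, plus permission for the lab subnet to sync from this node.
        printf '%s\n' 'server time.windows.com iburst' 'allow 192.168.1.0/24' >> "$conf"
    else
        printf '%s\n' 'server controller iburst' >> "$conf"
    fi
}
```

For example, on the compute node: configure_chrony compute && systemctl restart chronyd.service.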

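4. Configure the OpenStack Mitaka yum repository and install the base packages (all nodes)

Install the repository:

yum install centos-release-openstack-mitaka -y

Upgrade the packages and kernel on the host (reboot afterwards if a new kernel was installed):

yum upgrade -y

Install the OpenStack client:

yum install python-openstackclient -y

If SELinux is enabled, install openstack-selinux; since we disabled it, we skip this package. If you do need it, run:

yum install openstack-selinux -y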

II. On the controller node I install MySQL (MariaDB), RabbitMQ, Keystone, Glance, nova-api, and so on

1. Install MySQL (on the controller node):

Install the packages:

yum install mariadb mariadb-server python2-PyMySQL -y

Configure it: vi /etc/my.cnf and add the following to the [mysqld] section:

[mysqld]
bind-address = 192.168.1.182
default-storage-engine = innodb
innodb_file_per_table
max_connections = 4096
collation-server = utf8_general_ci
character-set-server = utf8
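Instead of editing /etc/my.cnf, the official Mitaka guide puts these settings in a separate drop-in file, /etc/my.cnf.d/openstack.cnf, which the stock CentOS /etc/my.cnf includes via !includedir. A sketch that writes that file (the function name is hypothetical); restart mariadb after changing it.

```bash
#!/usr/bin/env bash
# write_mariadb_conf (hypothetical helper): write the OpenStack MariaDB settings
# to a drop-in file. bind-address is the controller's management IP.
write_mariadb_conf() {
    local conf="${1:-/etc/my.cnf.d/openstack.cnf}"
    # Quoted delimiter: the heredoc body is written verbatim.
    cat > "$conf" <<'EOF'
[mysqld]
bind-address = 192.168.1.182
default-storage-engine = innodb
innodb_file_per_table
max_connections = 4096
collation-server = utf8_general_ci
character-set-server = utf8
EOF
}
```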

Enable MariaDB at boot and start it:

systemctl enable mariadb.service
systemctl start mariadb.service

Set the root password:

mysql_secure_installation

Just follow the prompts step by step; it is straightforward. I set the password to adm*123.

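2. Install RabbitMQ (the message queue the services use to communicate with each other)

Install the package:

yum install rabbitmq-server -y

Start the service:

systemctl enable rabbitmq-server.service
systemctl start rabbitmq-server.service

Create the RabbitMQ user openstack with the password adm*123 (quote the password so the shell cannot treat * as a wildcard):

rabbitmqctl add_user openstack 'adm*123'

Grant the new openstack user configure, write, and read permissions:

rabbitmqctl set_permissions openstack ".*" ".*" ".*"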

3. Install Memcached

Install:

yum install memcached python-memcached -y

Start the service:

systemctl enable memcached.service
systemctl start memcached.service

4. Install Keystone (Identity service)

Create the keystone database. Log in with mysql -uroot -p'adm*123' and run:

CREATE DATABASE keystone;

Grant privileges to the keystone user:

GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'localhost' IDENTIFIED BY 'adm*123';
GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' IDENTIFIED BY 'adm*123';
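The same CREATE DATABASE / GRANT pattern repeats below for glance, nova, neutron, and cinder. A sketch of a helper (hypothetical name) that prints the statements so they can be piped into the mysql client; CREATE DATABASE IF NOT EXISTS makes re-running harmless. Plain ASCII single quotes are required: typographic quotes such as ‘ ’ are a syntax error in MySQL.

```bash
#!/usr/bin/env bash
# service_db_sql DB USER PASS (hypothetical helper): print SQL that creates DB and lets
# USER connect from localhost and from any other host ('%') with password PASS.
# PASS must not contain a single quote.
service_db_sql() {
    local db="$1" user="$2" pass="$3"
    cat <<EOF
CREATE DATABASE IF NOT EXISTS ${db};
GRANT ALL PRIVILEGES ON ${db}.* TO '${user}'@'localhost' IDENTIFIED BY '${pass}';
GRANT ALL PRIVILEGES ON ${db}.* TO '${user}'@'%' IDENTIFIED BY '${pass}';
EOF
}
```

Usage: service_db_sql keystone keystone 'adm*123' | mysql -uroot -p'adm*123'. Nova needs two calls, one for nova_api and one for nova, both with the user nova.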

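Generate a random value for the admin token:

openssl rand -hex 10

Copy this value; it goes into the Keystone configuration file.

Install the Keystone packages:

yum install openstack-keystone httpd mod_wsgi -y

Edit /etc/keystone/keystone.conf:

[DEFAULT]
...
admin_token = <the random value generated above>

Database connection:

[database]
...
connection = mysql+pymysql://keystone:adm*123@controller/keystone

Configure the Fernet token provider:

[token]
...
provider = fernet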
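Every configuration edit in this article follows the same pattern: set key = value inside a [section] of an INI file. The crudini tool can do this; if it is not installed, the awk-based helper below is a self-contained sketch (the name ini_set is hypothetical). It replaces the first active or commented-out occurrence of the key in the section, appends the key to the section if it is missing, and creates the section at the end of the file if needed. Values go through awk -v, so backslashes would be treated as escapes (none of the values in this guide contain any), and the key is used inside a regular expression.

```bash
#!/usr/bin/env bash
# ini_set FILE SECTION KEY VALUE (hypothetical helper): set "KEY = VALUE" in [SECTION].
ini_set() {
    local file="$1" section="$2" key="$3" value="$4" tmp
    tmp=$(mktemp) || return 1
    awk -v sect="[$section]" -v key="$key" -v val="$value" '
        # Emit the key at the end of the target section if it was not found inside it.
        function flush() { if (in_sect && !done) { print key " = " val; done = 1 } }
        /^\[.*\][ \t]*$/ {
            flush()
            h = $0; sub(/[ \t]+$/, "", h)
            in_sect = (h == sect)
            print; next
        }
        # First active or commented-out "key =" line in the section is replaced;
        # later active duplicates are dropped, later commented ones are kept.
        in_sect && $0 ~ ("^[# \t]*" key "[ \t]*=") {
            if (!done) { print key " = " val; done = 1; next }
            if ($0 ~ /^[ \t]*#/) print
            next
        }
        { print }
        # Section missing entirely: create it at the end of the file.
        END {
            flush()
            if (!done) { print ""; print sect; print key " = " val }
        }
    ' "$file" > "$tmp" && cat "$tmp" > "$file"
    local rc=$?
    rm -f "$tmp"
    return "$rc"
}
```

Writing back with cat instead of mv keeps the original file's owner and mode (keystone.conf is readable only by root and the keystone group). Example: ini_set /etc/keystone/keystone.conf token provider fernet.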

Save and exit, then populate the database:

su -s /bin/sh -c "keystone-manage db_sync" keystone

Initialize the Fernet keys:

keystone-manage fernet_setup --keystone-user keystone --keystone-group keystone

Configure httpd:

vi /etc/httpd/conf/httpd.conf

and set ServerName:

ServerName controller

Add a WSGI configuration file under /etc/httpd/conf.d/:

vi /etc/httpd/conf.d/wsgi-keystone.conf and add the following:

Listen 5000
Listen 35357

<VirtualHost *:5000>
    WSGIDaemonProcess keystone-public processes=5 threads=1 user=keystone group=keystone display-name=%{GROUP}
    WSGIProcessGroup keystone-public
    WSGIScriptAlias / /usr/bin/keystone-wsgi-public
    WSGIApplicationGroup %{GLOBAL}
    WSGIPassAuthorization On
    ErrorLogFormat "%{cu}t %M"
    ErrorLog /var/log/httpd/keystone-error.log
    CustomLog /var/log/httpd/keystone-access.log combined

    <Directory /usr/bin>
        Require all granted
    </Directory>
</VirtualHost>

<VirtualHost *:35357>
    WSGIDaemonProcess keystone-admin processes=5 threads=1 user=keystone group=keystone display-name=%{GROUP}
    WSGIProcessGroup keystone-admin
    WSGIScriptAlias / /usr/bin/keystone-wsgi-admin
    WSGIApplicationGroup %{GLOBAL}
    WSGIPassAuthorization On
    ErrorLogFormat "%{cu}t %M"
    ErrorLog /var/log/httpd/keystone-error.log
    CustomLog /var/log/httpd/keystone-access.log combined

    <Directory /usr/bin>
        Require all granted
    </Directory>
</VirtualHost>

Save and exit.

Start httpd:

systemctl enable httpd.service
systemctl start httpd.service

Step 1: create the service entity and API endpoints

Set the authorization token:

export OS_TOKEN=<the random value generated above>

Set the endpoint URL:

export OS_URL=http://controller:35357/v3

Set the Identity API version:

export OS_IDENTITY_API_VERSION=3

Create the Identity service:

openstack service create \
  --name keystone --description "OpenStack Identity" identity

Create the Identity API endpoints:

openstack endpoint create --region RegionOne \
  identity public http://controller:5000/v3

openstack endpoint create --region RegionOne \
  identity internal http://controller:5000/v3

openstack endpoint create --region RegionOne \
  identity admin http://controller:35357/v3

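Create the domain, projects, user, and role:

Create the default domain:

openstack domain create --description "Default Domain" default

Create the admin project:

openstack project create --domain default \
  --description "Admin Project" admin

Create the service project, which the glance, nova, neutron, and cinder users are added to later:

openstack project create --domain default \
  --description "Service Project" service

Create the admin user:

openstack user create --domain default \
  --password-prompt admin

When prompted for a password, enter adm*123 (the same everywhere).

Create the admin role:

openstack role create admin

Add the admin role to the admin user in the admin project:

openstack role add --project admin --user admin admin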

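Verify the setup:

If you get the result described below, the steps above are correct; if not, go back and check them.

Unset the temporary environment variables:

unset OS_TOKEN OS_URL

As the admin user, request a token:

openstack --os-auth-url http://controller:35357/v3 \
  --os-project-domain-name default --os-user-domain-name default \
  --os-project-name admin --os-username admin token issue

Enter the password adm*123 when prompted and check the result; a table containing a token means it worked.

My result: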

Create environment scripts for the admin (administrator) user and the demo (regular) user:

My admin script:

My demo script:
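The two scripts are not reproduced here. The sketch below writes them following the variable layout of the official Mitaka install guide, with this article's password; the demo script assumes a demo project and demo user exist, which are not created in this walkthrough. The function name is hypothetical.

```bash
#!/usr/bin/env bash
# write_openrc_files [DIR] (hypothetical helper): create admin-openrc and demo-openrc in DIR.
write_openrc_files() {
    local dir="${1:-$HOME}"
    # Quoted heredoc delimiters write the text verbatim; the password itself is
    # single-quoted so the * is never subject to pathname expansion when sourced.
    cat > "$dir/admin-openrc" <<'EOF'
export OS_PROJECT_DOMAIN_NAME=default
export OS_USER_DOMAIN_NAME=default
export OS_PROJECT_NAME=admin
export OS_USERNAME=admin
export OS_PASSWORD='adm*123'
export OS_AUTH_URL=http://controller:35357/v3
export OS_IDENTITY_API_VERSION=3
export OS_IMAGE_API_VERSION=2
EOF
    cat > "$dir/demo-openrc" <<'EOF'
export OS_PROJECT_DOMAIN_NAME=default
export OS_USER_DOMAIN_NAME=default
export OS_PROJECT_NAME=demo
export OS_USERNAME=demo
export OS_PASSWORD='adm*123'
export OS_AUTH_URL=http://controller:5000/v3
export OS_IDENTITY_API_VERSION=3
export OS_IMAGE_API_VERSION=2
EOF
}
```

The admin script points at the admin endpoint (port 35357) and the demo script at the public endpoint (port 5000), matching the endpoints created above.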

Load the admin environment:

. admin-openrc

Then run:

openstack token issue

and you should get the same kind of result.

III. Install Glance (Image service)

Log in to the database:

mysql -u root -p'adm*123'

Create the glance database:

CREATE DATABASE glance;

Grant privileges:

GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'localhost' IDENTIFIED BY 'adm*123';
GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' IDENTIFIED BY 'adm*123';

Exit the MySQL client.

Create the glance user, give it the admin role, and create the image service and its endpoints.

. admin-openrc

Create the user:

openstack user create --domain default --password-prompt glance

Password: adm*123

Add the admin role to the glance user in the service project:

openstack role add --project service --user glance admin

Create the service:

openstack service create --name glance \
  --description "OpenStack Image" image

Create the endpoints:

openstack endpoint create --region RegionOne \
  image public http://controller:9292

openstack endpoint create --region RegionOne \
  image internal http://controller:9292

openstack endpoint create --region RegionOne \
  image admin http://controller:9292
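The three endpoint commands differ only in the interface name, and the same pattern repeats for Nova, Neutron, and Cinder. A sketch of a loop that creates all three (the function name and the DRY_RUN switch are hypothetical); with DRY_RUN set it only prints the commands so you can check them first. It does not fit Keystone's own endpoints, whose admin URL uses a different port.

```bash
#!/usr/bin/env bash
# create_endpoints SERVICE URL (hypothetical helper): create the public, internal and
# admin endpoints of SERVICE at URL in RegionOne. If DRY_RUN is set, only print them.
create_endpoints() {
    local service="$1" url="$2" iface
    for iface in public internal admin; do
        # ${DRY_RUN:+echo} expands to "echo" when DRY_RUN is non-empty, else to nothing.
        ${DRY_RUN:+echo} openstack endpoint create --region RegionOne "$service" "$iface" "$url"
    done
}
```

For example: DRY_RUN=1 create_endpoints network http://controller:9696, then the same without DRY_RUN. For Nova, single quotes keep the URL literal, so no backslashes are needed: create_endpoints compute 'http://controller:8774/v2.1/%(tenant_id)s'.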

Install the package:

yum install openstack-glance -y

Configure it:

vi /etc/glance/glance-api.conf

Go to the [database] section:

[database]

...

connection = mysql+pymysql://glance:adm*123@controller/glance

Find the [keystone_authtoken] section:

[keystone_authtoken]

...

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = glance

password = adm*123

Find the [paste_deploy] section:

[paste_deploy]

...

flavor = keystone

Configure [glance_store]:

[glance_store]

...

stores = file,http

default_store = file

filesystem_store_datadir = /var/lib/glance/images/

Configure glance-registry:

vi /etc/glance/glance-registry.conf

[database]

...

connection = mysql+pymysql://glance:adm*123@controller/glance

Configure the [keystone_authtoken] and [paste_deploy] sections:

[keystone_authtoken]

...

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = glance

password = adm*123

[paste_deploy]

...

flavor = keystone

Save and exit.

Populate the database:

su -s /bin/sh -c "glance-manage db_sync" glance

Start the services:

systemctl enable openstack-glance-api.service \
  openstack-glance-registry.service

systemctl start openstack-glance-api.service \
  openstack-glance-registry.service

Verify Glance:

Download a test image:

wget http://download.cirros-cloud.net/0.3.4/cirros-0.3.4-x86_64-disk.img

Load the admin environment:

. admin-openrc

Upload the image to Glance:

openstack image create "cirros" \
  --file cirros-0.3.4-x86_64-disk.img \
  --disk-format qcow2 --container-format bare \
  --public

When the upload finishes, list the images:

openstack image list

You should see the image you just uploaded (I uploaded several). My output:
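An image is only usable once its status is active. The polling loop below is a sketch with a hypothetical function name; it uses the client's generic output options (-f value -c status) to print only the status field.

```bash
#!/usr/bin/env bash
# wait_image_active NAME [TRIES] (hypothetical helper): poll until image NAME reports
# status "active"; TRIES attempts 2 seconds apart, return 1 if it never gets there.
wait_image_active() {
    local name="$1" tries="${2:-30}" status="" i
    for ((i = 1; i <= tries; i++)); do
        status=$(openstack image show "$name" -f value -c status 2>/dev/null)
        [ "$status" = active ] && return 0
        # Only wait if another attempt follows.
        [ "$i" -lt "$tries" ] && sleep 2
    done
    echo "image $name is not active (last status: ${status:-unknown})" >&2
    return 1
}
```

Example: . admin-openrc && wait_image_active cirros.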

IV. Install the Compute service on the controller node

Log in to the database and create the nova and nova_api databases:

mysql -u root -p'adm*123'

Create the databases:

CREATE DATABASE nova_api;
CREATE DATABASE nova;

Grant privileges:

GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'localhost' IDENTIFIED BY 'adm*123';
GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' IDENTIFIED BY 'adm*123';
GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' IDENTIFIED BY 'adm*123';
GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY 'adm*123';

Create the nova user, role, service, and endpoints (same as above).

Load the admin environment:

. admin-openrc

Create the user (password adm*123):

openstack user create --domain default \
  --password-prompt nova

Add the admin role:

openstack role add --project service --user nova admin

Create the compute service:

openstack service create --name nova \
  --description "OpenStack Compute" compute

Create the endpoints:

openstack endpoint create --region RegionOne \
  compute public http://controller:8774/v2.1/%\(tenant_id\)s

openstack endpoint create --region RegionOne \
  compute internal http://controller:8774/v2.1/%\(tenant_id\)s

openstack endpoint create --region RegionOne \
  compute admin http://controller:8774/v2.1/%\(tenant_id\)s

Install the packages:

yum install openstack-nova-api openstack-nova-conductor \
  openstack-nova-console openstack-nova-novncproxy \
  openstack-nova-scheduler -y

Configure nova.conf:

vi /etc/nova/nova.conf

[DEFAULT]

...

enabled_apis = osapi_compute,metadata

[api_database]

...

connection = mysql+pymysql://nova:adm*123@controller/nova_api

[database]

...

connection = mysql+pymysql://nova:adm*123@controller/nova

[DEFAULT]

...

rpc_backend = rabbit

auth_strategy = keystone

my_ip = 192.168.1.182

use_neutron = True

firewall_driver = nova.virt.firewall.NoopFirewallDriver

[oslo_messaging_rabbit]

...

rabbit_host = controller

rabbit_userid = openstack

rabbit_password = adm*123

[keystone_authtoken]

...

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = nova

password = adm*123

[vnc]

...

vncserver_listen = $my_ip

vncserver_proxyclient_address = $my_ip

[glance]

...

api_servers = http://controller:9292

[oslo_concurrency]

...

lock_path = /var/lib/nova/tmp

Save and exit.

Populate the databases:

su -s /bin/sh -c "nova-manage api_db sync" nova
su -s /bin/sh -c "nova-manage db sync" nova

Start the services:

systemctl enable openstack-nova-api.service \
  openstack-nova-consoleauth.service openstack-nova-scheduler.service \
  openstack-nova-conductor.service openstack-nova-novncproxy.service

systemctl start openstack-nova-api.service \
  openstack-nova-consoleauth.service openstack-nova-scheduler.service \
  openstack-nova-conductor.service openstack-nova-novncproxy.service

V. Install the Compute service on the compute node

Install the package:

yum install openstack-nova-compute -y

Configure nova.conf:

vi /etc/nova/nova.conf

[DEFAULT]

...

rpc_backend = rabbit

auth_strategy = keystone

my_ip = 192.168.1.183

use_neutron = True

firewall_driver = nova.virt.firewall.NoopFirewallDriver

[oslo_messaging_rabbit]

...

rabbit_host = controller

rabbit_userid = openstack

rabbit_password = adm*123

[keystone_authtoken]

...

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = nova

password = adm*123

[vnc]

...

enabled = True

vncserver_listen = 0.0.0.0

vncserver_proxyclient_address = $my_ip

novncproxy_base_url = http://controller:6080/vnc_auto.html

[glance]

...

api_servers = http://controller:9292

[oslo_concurrency]

...

lock_path = /var/lib/nova/tmp

Save and exit.

Start the services:

systemctl enable libvirtd.service openstack-nova-compute.service
systemctl start libvirtd.service openstack-nova-compute.service

After the compute node is set up, go back to the controller node and verify:

Load the admin environment:

. admin-openrc

List the compute services:

openstack compute service list

My output:
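Instead of reading the table by eye, you can print only the services that are not up. A sketch (hypothetical function name) using the client's -f value output; with -c Binary -c Host -c State each line reads "binary host state".

```bash
#!/usr/bin/env bash
# compute_services_down (hypothetical helper): print "binary host state" for every Nova
# service whose state is not "up"; prints nothing when all services are healthy.
compute_services_down() {
    openstack compute service list -f value -c Binary -c Host -c State |
        awk '$NF != "up"'
}
```

Example: . admin-openrc && [ -z "$(compute_services_down)" ] && echo "all compute services are up".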

VI. Install Neutron (Networking service)

Install Neutron on the controller node.

Create the neutron database (same procedure as above):

mysql -u root -p'adm*123'

Create the database and grant privileges:

CREATE DATABASE neutron;
GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'localhost' IDENTIFIED BY 'adm*123';
GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY 'adm*123';

Create the user (password adm*123), role, service, and endpoints:

Load the admin environment:

. admin-openrc

Create the user (password adm*123):

openstack user create --domain default --password-prompt neutron

Add the role:

openstack role add --project service --user neutron admin

Create the service:

openstack service create --name neutron \
  --description "OpenStack Networking" network

Create the endpoints:

openstack endpoint create --region RegionOne \
  network public http://controller:9696

openstack endpoint create --region RegionOne \
  network internal http://controller:9696

openstack endpoint create --region RegionOne \
  network admin http://controller:9696

I plan to use VXLAN self-service networks here, so do the following:

Install the packages:

yum install openstack-neutron openstack-neutron-ml2 \
  openstack-neutron-linuxbridge ebtables -y

Configure /etc/neutron/neutron.conf:

[database]

...

connection = mysql+pymysql://neutron:adm*123@controller/neutron

[DEFAULT]

...

core_plugin = ml2

service_plugins = router

allow_overlapping_ips = True

rpc_backend = rabbit

auth_strategy = keystone

notify_nova_on_port_status_changes = True

notify_nova_on_port_data_changes = True

[oslo_messaging_rabbit]

...

rabbit_host = controller

rabbit_userid = openstack

rabbit_password = adm*123

[keystone_authtoken]

...

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = adm*123

[nova]

...

auth_url = http://controller:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = nova

password = adm*123

[oslo_concurrency]

...

lock_path = /var/lib/neutron/tmp

Configure ML2:

vi /etc/neutron/plugins/ml2/ml2_conf.ini

[ml2]
...
type_drivers = flat,vlan,vxlan
tenant_network_types = vxlan
mechanism_drivers = linuxbridge,l2population
extension_drivers = port_security

[ml2_type_flat]
...
flat_networks = provider

[ml2_type_vxlan]
...
vni_ranges = 1:1000

[securitygroup]
...
enable_ipset = True

My configuration:

Configure the Linux bridge agent:

vi /etc/neutron/plugins/ml2/linuxbridge_agent.ini

[linux_bridge]

physical_interface_mappings=provider:eno33554960

[vxlan]

enable_vxlan=True

local_ip=192.168.1.182

l2_population=True

[securitygroup]

...

enable_security_group = True

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

My configuration:

Configure the L3 agent:

vi /etc/neutron/l3_agent.ini

[DEFAULT]

...

interface_driver = neutron.agent.linux.interface.BridgeInterfaceDriver

external_network_bridge =

Configure the DHCP agent:

vi /etc/neutron/dhcp_agent.ini

[DEFAULT]

...

interface_driver = neutron.agent.linux.interface.BridgeInterfaceDriver

dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq

enable_isolated_metadata = True

Configure the metadata agent:

vi /etc/neutron/metadata_agent.ini

[DEFAULT]

...

nova_metadata_ip = controller

metadata_proxy_shared_secret = adm*123

Configure Nova to use Neutron:

vi /etc/nova/nova.conf

[neutron]

...

url = http://controller:9696

auth_url = http://controller:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = adm*123

service_metadata_proxy = True

metadata_proxy_shared_secret = adm*123

Save and exit.

Create the symbolic link (the Networking service expects /etc/neutron/plugin.ini to point to the ML2 configuration):

ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

Populate the database:

su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf \
  --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

Start the services:

systemctl restart openstack-nova-api.service

systemctl enable neutron-server.service \
  neutron-linuxbridge-agent.service neutron-dhcp-agent.service \
  neutron-metadata-agent.service

systemctl start neutron-server.service \
  neutron-linuxbridge-agent.service neutron-dhcp-agent.service \
  neutron-metadata-agent.service

systemctl enable neutron-l3-agent.service
systemctl start neutron-l3-agent.service

Install Neutron on the compute node:

yum install openstack-neutron-linuxbridge ebtables ipset -y

Configure /etc/neutron/neutron.conf:

[DEFAULT]

...

rpc_backend = rabbit

auth_strategy = keystone

[oslo_messaging_rabbit]

...

rabbit_host = controller

rabbit_userid = openstack

rabbit_password = adm*123

[keystone_authtoken]

...

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = adm*123

[oslo_concurrency]

...

lock_path = /var/lib/neutron/tmp

Configure /etc/neutron/plugins/ml2/linuxbridge_agent.ini:

[linux_bridge]

physical_interface_mappings=provider:eno33554960

[vxlan]

enable_vxlan=True

local_ip=192.168.1.183

l2_population=True

[securitygroup]

...

enable_security_group = True

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

My configuration:

Configure Nova to use Neutron:

Edit /etc/nova/nova.conf:

[neutron]

...

url = http://controller:9696

auth_url = http://controller:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = adm*123

Save and exit.

Start the services:

systemctl restart openstack-nova-compute.service

systemctl enable neutron-linuxbridge-agent.service

systemctl start neutron-linuxbridge-agent.service

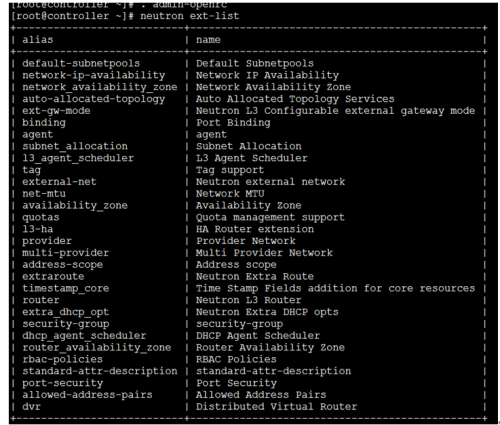

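Go back to the controller node to verify:

Load the admin environment:

. admin-openrc

Run:

neutron ext-list

My output:

VII. Install the dashboard

I install the dashboard on the controller node.

Install the package:

yum install openstack-dashboard -y

Configure /etc/openstack-dashboard/local_settings: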

OPENSTACK_HOST="controller"

ALLOWED_HOSTS = ['*', ]

SESSION_ENGINE = 'django.contrib.sessions.backends.cache'

CACHES = {
    'default': {
        'BACKEND': 'django.core.cache.backends.memcached.MemcachedCache',
        'LOCATION': 'controller:11211',
    }
}

OPENSTACK_KEYSTONE_URL = "http://%s:5000/v3" % OPENSTACK_HOST

OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT = True

OPENSTACK_API_VERSIONS = {
    "identity": 3,
    "image": 2,
    "volume": 2,
}

OPENSTACK_KEYSTONE_DEFAULT_DOMAIN = "default"

OPENSTACK_KEYSTONE_DEFAULT_ROLE = "user"

This deployment uses self-service VXLAN networks, and routers are created from the dashboard later, so keep 'enable_router' set to True; setting it to False hides the Routers panel.

OPENSTACK_NEUTRON_NETWORK = {
    ...
    'enable_router': True,
    'enable_quotas': False,
    'enable_distributed_router': False,
    'enable_ha_router': False,
    'enable_lb': False,
    'enable_firewall': False,
    'enable_vpn': False,
    'enable_fip_topology_check': False,
}

TIME_ZONE="UTC"

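Save and exit.

Restart the services:

systemctl restart httpd.service memcached.service

You can now open the dashboard in a browser at http://192.168.1.182/dashboard (or http://controller/dashboard from a machine that resolves the controller hostname).

Note: at this point you can log in to OpenStack, but in my setup instances could not be created yet because Cinder was not installed, so we install Cinder next.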

VIII. Install Cinder (Block Storage)

On the controller node:

Create the cinder database and grant privileges:

mysql -u root -p'adm*123'

Create the database:

CREATE DATABASE cinder;

Grant privileges:

GRANT ALL PRIVILEGES ON cinder.* TO 'cinder'@'localhost' IDENTIFIED BY 'adm*123';
GRANT ALL PRIVILEGES ON cinder.* TO 'cinder'@'%' IDENTIFIED BY 'adm*123';

Create the user, role, services, and endpoints:

. admin-openrc

Create the user (password adm*123):

openstack user create --domain default --password-prompt cinder

openstack role add --project service --user cinder admin

openstack service create --name cinder \
  --description "OpenStack Block Storage" volume

openstack service create --name cinderv2 \
  --description "OpenStack Block Storage" volumev2

openstack endpoint create --region RegionOne \
  volume public http://controller:8776/v1/%\(tenant_id\)s

openstack endpoint create --region RegionOne \
  volume internal http://controller:8776/v1/%\(tenant_id\)s

openstack endpoint create --region RegionOne \
  volume admin http://controller:8776/v1/%\(tenant_id\)s

openstack endpoint create --region RegionOne \
  volumev2 public http://controller:8776/v2/%\(tenant_id\)s

openstack endpoint create --region RegionOne \
  volumev2 internal http://controller:8776/v2/%\(tenant_id\)s

openstack endpoint create --region RegionOne \
  volumev2 admin http://controller:8776/v2/%\(tenant_id\)s

Install the package:

yum install openstack-cinder -y

Configure /etc/cinder/cinder.conf:

[database]

...

connection = mysql+pymysql://cinder:adm*123@controller/cinder

[DEFAULT]

...

rpc_backend = rabbit

auth_strategy = keystone

my_ip = 192.168.1.182

[oslo_messaging_rabbit]

...

rabbit_host = controller

rabbit_userid = openstack

rabbit_password = adm*123

[keystone_authtoken]

...

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = cinder

password = adm*123

[oslo_concurrency]

...

lock_path = /var/lib/cinder/tmp

Save and exit.

Populate the database:

su -s /bin/sh -c "cinder-manage db sync" cinder

Configure Nova to use Cinder:

Edit /etc/nova/nova.conf:

[cinder]
os_region_name = RegionOne

Save and exit.

Start the services:

systemctl restart openstack-nova-api.service
systemctl enable openstack-cinder-api.service openstack-cinder-scheduler.service
systemctl start openstack-cinder-api.service openstack-cinder-scheduler.service

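IX. Configure Cinder on the cinder node

Install LVM (usually already present):

yum install lvm2 -y

systemctl enable lvm2-lvmetad.service
systemctl start lvm2-lvmetad.service

Create the physical volume and the volume group. This is where the clean disk we added comes in; check the disk names with fdisk -l. I use sdc (it really has to be a clean disk; you may laugh at how long I struggled here).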
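Since the "No valid host was found" error described at the end of this article was only fixed with a fresh, clean disk, it is worth checking the disk before pvcreate. A sketch (hypothetical function name) built on wipefs from util-linux, which, run without options, only lists the filesystem, partition-table, and LVM signatures it finds and changes nothing.

```bash
#!/usr/bin/env bash
# disk_is_clean DEV (hypothetical helper): return 0 if wipefs reports no signatures on DEV,
# 1 if it does (they are printed to stderr), 2 if the device could not be probed.
disk_is_clean() {
    local dev="$1" sigs
    if ! sigs=$(wipefs "$dev" 2>&1); then
        echo "cannot probe $dev: $sigs" >&2
        return 2
    fi
    if [ -n "$sigs" ]; then
        printf '%s still carries signatures:\n%s\n' "$dev" "$sigs" >&2
        return 1
    fi
}
```

Usage: disk_is_clean /dev/sdc && pvcreate /dev/sdc. If signatures are reported and you are sure nothing on that disk is needed, wipefs -a /dev/sdc erases them; this destroys whatever the disk contains.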

pvcreate /dev/sdc

Create the volume group:

vgcreate cinder-volumes /dev/sdc

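Configure the LVM device filter:

Edit /etc/lvm/lvm.conf and add the following to the devices section.

Note: on my node, sda and sdb both hold LVM volumes, so they must be accepted along with sdc:

filter = [ "a/sda/", "a/sdb/", "a/sdc/", "r/.*/" ]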
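In the filter, "a" accepts and "r" rejects a device, and the first matching pattern decides, so every disk holding LVM volumes that the node itself uses must be listed before the final reject-all, otherwise LVM ignores it. A sketch of a helper (hypothetical name) that builds the line from device names.

```bash
#!/usr/bin/env bash
# lvm_filter NAME... (hypothetical helper): build an lvm.conf devices/filter line that
# accepts the given disks ("a/<name>/") and rejects every other device ("r/.*/").
lvm_filter() {
    local dev out="filter = ["
    for dev in "$@"; do
        out+=" \"a/${dev}/\","
    done
    printf '%s "r/.*/" ]\n' "$out"
}
```

Example: lvm_filter sda sdb sdc prints the filter line used above.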

Install the packages:

yum install openstack-cinder targetcli python-keystone -y

Configure /etc/cinder/cinder.conf:

[database]

...

connection = mysql+pymysql://cinder:adm*123@controller/cinder

[DEFAULT]

...

rpc_backend = rabbit

auth_strategy = keystone

my_ip = 192.168.1.184

enabled_backends = lvm

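The cinder node also needs the same message-queue and authentication settings as the controller; without them cinder-volume cannot reach RabbitMQ:

[oslo_messaging_rabbit]
...
rabbit_host = controller
rabbit_userid = openstack
rabbit_password = adm*123

[keystone_authtoken]
...
auth_uri = http://controller:5000
auth_url = http://controller:35357
memcached_servers = controller:11211
auth_type = password
project_domain_name = default
user_domain_name = default
project_name = service
username = cinder
password = adm*123

At the end of the file, add: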

[lvm]

...

volume_driver = cinder.volume.drivers.lvm.LVMVolumeDriver

volume_group = cinder-volumes

iscsi_protocol = iscsi

iscsi_helper = lioadm

[oslo_concurrency]

...

lock_path = /var/lib/cinder/tmp

Start the services:

systemctl enable openstack-cinder-volume.service target.service
systemctl start openstack-cinder-volume.service target.service

Verify:

On the controller node:

. admin-openrc

cinder service-list

Check the output.

My result:
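To spot a failed backend without reading the whole table, the sketch below (hypothetical function name) parses the ASCII table printed by cinder service-list, finds the State column by its header, and prints every row that is not up. It assumes Binary and Host are the first two columns, as in the client output I have seen.

```bash
#!/usr/bin/env bash
# volume_services_down (hypothetical helper): print "binary host state" for each row of
# "cinder service-list" whose State is not "up"; prints nothing when all are up.
volume_services_down() {
    cinder service-list | awk -F'|' '
        /^\|/ {
            # Trim every cell of the current table row.
            for (i = 2; i < NF; i++) { c = $i; gsub(/^ +| +$/, "", c); cell[i] = c }
            # The first row is the header: remember which column is "State".
            if (!col) { for (i = 2; i < NF; i++) if (cell[i] == "State") col = i; next }
            if (cell[col] != "up") print cell[2] " " cell[3] " " cell[col]
        }'
}
```

A cinder-volume row that is down usually points at the storage node's cinder.conf or its connection to RabbitMQ.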

We are now almost ready to create instances.

Before that, we need to create the networks (a flat provider network and a VXLAN private network).

The steps are:

Create the provider network:

neutron --debug net-create --shared provider --router:external True --provider:network_type flat --provider:physical_network provider

Create its subnet, used for floating IPs:

neutron subnet-create provider 192.168.1.0/24 --name public-sub --allocation-pool start=192.168.1.210,end=192.168.1.220 --dns-nameserver 61.139.2.69 --gateway 192.168.1.1

Create the VXLAN private network:

neutron net-create private --provider:network_type vxlan --router:external False --shared

Create its subnet:

neutron subnet-create private --name internal-subnet --gateway 192.168.13.1 192.168.13.0/24
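Neutron rejects a subnet whose allocation pool falls outside the CIDR or overlaps the gateway address, so it can save a round trip to check the floating-IP subnet parameters first. A sketch with hypothetical function names, using only bash integer arithmetic on dotted-quad IPv4 addresses.

```bash
#!/usr/bin/env bash
# ip2int IP (hypothetical helper): dotted-quad IPv4 address -> 32-bit integer.
ip2int() {
    local IFS=.
    set -- $1
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# in_cidr IP CIDR: succeed if IP lies inside CIDR (e.g. 192.168.1.0/24).
in_cidr() {
    local ip net bits mask
    ip=$(ip2int "$1")
    net=$(ip2int "${2%/*}")
    bits=${2#*/}
    mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    [ $(( ip & mask )) -eq $(( net & mask )) ]
}

# check_pool CIDR START END GATEWAY: all addresses inside the subnet,
# START <= END, and the gateway outside the allocation pool.
check_pool() {
    local cidr="$1" start="$2" end="$3" gw="$4" s e g
    in_cidr "$start" "$cidr" && in_cidr "$end" "$cidr" && in_cidr "$gw" "$cidr" || return 1
    s=$(ip2int "$start"); e=$(ip2int "$end"); g=$(ip2int "$gw")
    [ "$s" -le "$e" ] && { [ "$g" -lt "$s" ] || [ "$g" -gt "$e" ]; }
}
```

With the values used above: check_pool 192.168.1.0/24 192.168.1.210 192.168.1.220 192.168.1.1 && echo "pool OK". Keeping the pool (.210 to .220) away from addresses already used on the bridged LAN, such as the three nodes (.182 to .184), is a separate check this sketch does not do.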

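I did not take screenshots while doing this step, so the screenshots here come from the web; please bear with me.

After the networks exist, create a router; without it the instances have no outside connectivity.

In the dashboard, go to Project > Network > Routers > Create Router, then set the router's gateway to the provider network and add an interface on the private subnet.

You can now upload your own images and create instances (you can download images online or build your own; there are plenty of guides out there).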

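Finally, a summary of the problems I ran into:

1. Cinder produced this error (even though all services were running normally):

2016-10-27 12:53:33.077 76504 ERROR cinder.scheduler.flows.create_volume [req-1d9179f3-913c-4e72-a357-437ed4ed3c2d 3da30194de374868990d83f474149ae6 63ed5b1babb74b3080f90a365efbcb84 - - -] Failed to run task cinder.scheduler.flows.create_volume.ScheduleCreateVolumeTask;volume:create: No valid host was found. No weighed hosts available

In the end I solved it by adding a new, clean disk and configuring it again.

2. Nova and Neutron returned 401 authorization errors. Ways to troubleshoot:

(1) Check the logs.

(2) Check the configuration files.

(3) Delete the user and create it again.

Mine was the third case: nothing was misconfigured, and deleting and recreating the user fixed it.

3. Do not install instances from ISO files; use a disk image you have built (if you don't know how, there are countless guides online). I tried installing from the CentOS 7 DVD ISO and could never get into the system: after installation, it rebooted straight back into the installer.

A few screenshots of my dashboard:

Finally, the configuration files of all nodes are attached. If you find any mistakes, please let me know at 674591788@qq.com.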

This article is from the "nginx安装优化" (nginx installation and optimization) blog; reposting is not permitted.
