
Analyzing Log Files

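The logback plugin is installed in Tomcat to generate logs. The log contains a lot of information; our goal is to extract the data we need, insert it into a MySQL database, and run the whole process as a scheduled task.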

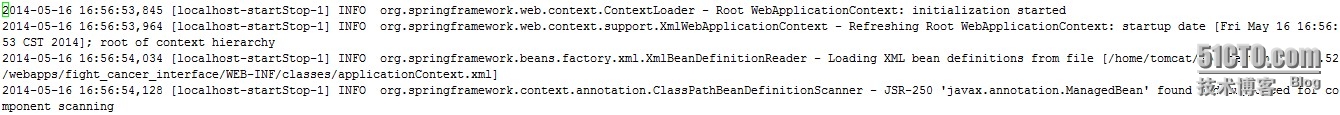

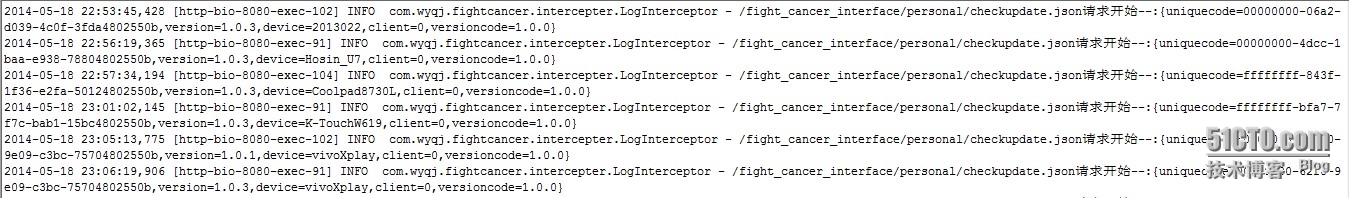

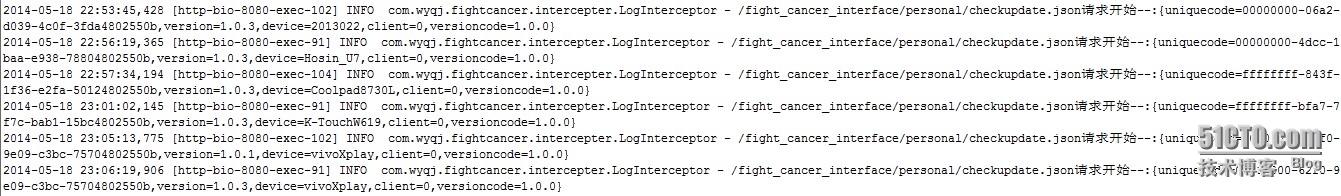

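The following is the content of the original log file:

We need these fields: client, uniquecode, device, versioncode, interface, createtime.

The approach is as follows:

1. We only need to process lines that contain both the interface name and the "请求开始" (request start) marker.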

grep "personal/checkupdate.json请求开始" /home/logs/fight_cancer_interface/info.2014-05-19.log

2. We must make sure these lines contain the client, uniquecode, device and versioncode keys. The interface and createtime values come from the request path and the timestamp that every matching line already carries.

grep "personal/checkupdate.json请求开始" /home/logs/fight_cancer_interface/info.2014-05-19.log|grep "client="|grep "uniquecode="|grep "device="|grep "versioncode="
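Step 2 chains one grep per required key. As a rough alternative, the same filter can be written as a single awk pattern. This is only a sketch: the function name `has_required_fields` is made up for illustration and is not part of the original script.

```shell
# has_required_fields (hypothetical helper): read log lines on stdin and
# print only those that contain the request-start marker for the
# checkupdate interface AND every required key.
has_required_fields() {
  awk '
    /personal\/checkupdate\.json请求开始/ &&
    /client=/ && /uniquecode=/ && /device=/ && /versioncode=/
  '
}
```

It would be used as `has_required_fields < /home/logs/fight_cancer_interface/info.2014-05-19.log`. An awk pattern with no action prints the matching line, so the output is the same set of lines the grep chain produces.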

3. Remove the fields we do not need.

grep "personal/checkupdate.json请求开始" /home/logs/fight_cancer_interface/info.2014-05-19.log|grep "client="|grep "uniquecode="|grep "device="|grep "versioncode="|awk -F"请求开始--:" '{print $1,$2}'|awk -F"INFO com.wyqj.fightcancer.intercepter.LogInterceptor - /fight_cancer_interface/" '{print $1,$2}'|awk -F".json" '{print $1,$2}'|awk -F"[" '{print $1,"@@@###",$2}'|awk -F"]" '{print $1,"@@@###",$2}'|awk -F'@@@###' '{print $1,$3}'|sed 's/personal\/checkupdate/personal_checkupdate/g' | awk -F ",[[:digit:]][[:digit:]][[:digit:]]" '{print $1,$2}'|sed "s/ {/,/g"|sed "s/ personal/,personal/g"|sed "s/}//g"|awk -F"," '{print $6","$3","$5","$7","$2","$1");"}'|sed "s/,/','/g"|sed "s/);/');/g"|sed "s/^/'/g"

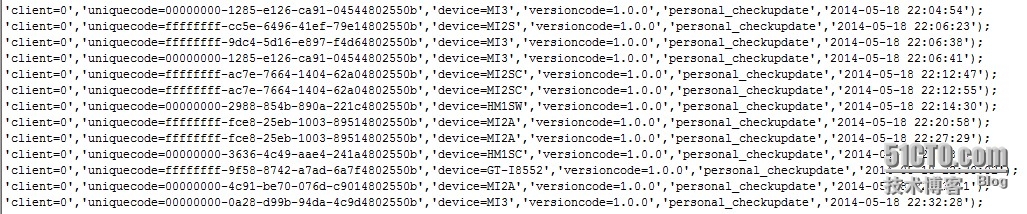
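The pipeline in step 3 picks values by position ($6, $3, $5, ...), so it depends on the keys always appearing in the same order. A possible alternative is to look each value up by its key name. The sketch below assumes the parameters are logged as a `{key=value, key=value}` list whose values contain no comma or closing brace; the helper name `get_field` and the sample line are hypothetical, reconstructed only from what the pipeline strips out, and are not taken from a real log.

```shell
# get_field KEY LINE (hypothetical helper): print the value that follows
# "KEY=" in LINE. The key must be preceded by "{", "," or a space so that
# e.g. "client=" does not match inside a longer key name.
get_field() {
  printf '%s\n' "$2" | sed -n "s/.*[{, ]$1=\([^,}]*\).*/\1/p"
}

# Hypothetical sample line (assumed layout, not copied from a real log).
line='2014-05-19 10:00:00,123 [http-8080-1] INFO com.wyqj.fightcancer.intercepter.LogInterceptor - /fight_cancer_interface/personal/checkupdate.json请求开始--:{uniquecode=abc123, device=iPhone5, client=ios, versioncode=12}'

get_field client "$line"
get_field versioncode "$line"
```

Because each lookup is by name, reordering the keys in the log would not change the result, at the cost of running sed once per field.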

4. Prepend the database INSERT statement to each line.

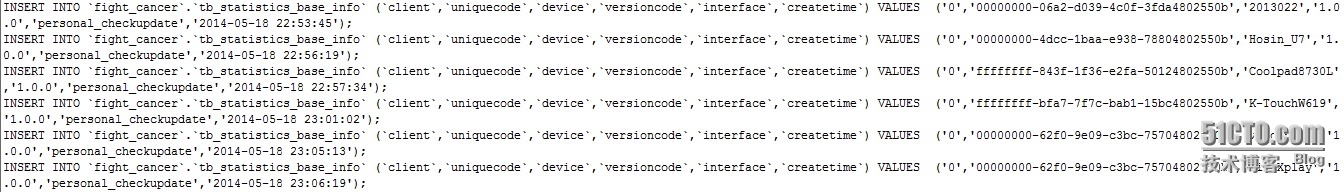

grep "personal/checkupdate.json请求开始" /home/logs/fight_cancer_interface/info.2014-05-18.log|grep "client="|grep "uniquecode="|grep "device="|grep "versioncode="|awk -F"请求开始--:" '{print $1,$2}'|awk -F"INFO com.wyqj.fightcancer.intercepter.LogInterceptor - /fight_cancer_interface/" '{print $1,$2}'|awk -F".json" '{print $1,$2}'|awk -F"[" '{print $1,"@@@###",$2}'|awk -F"]" '{print $1,"@@@###",$2}'|awk -F'@@@###' '{print $1,$3}'|sed 's/personal\/checkupdate/personal_checkupdate/g' | awk -F ",[[:digit:]][[:digit:]][[:digit:]]" '{print $1,$2}'|sed "s/ {/,/g"|sed "s/ personal/,personal/g"|sed "s/}//g"|awk -F"," '{print $6","$3","$5","$7","$2","$1");"}'|sed "s/,/','/g"|sed "s/);/');/g"|sed "s/^/'/g"|sed 's/^/INSERT INTO `fight_cancer`.`tb_statistics_base_info` (`client`,`uniquecode`,`device`,`versioncode`,`interface`,`createtime`) VALUES (/g'|sed "s/client=//g"|sed "s/uniquecode=//g"|sed "s/device=//g" |sed "s/versioncode=//g"
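The sed steps above wrap every value in single quotes, which produces invalid SQL if a value (a device name, for example) ever contains a single quote itself. A minimal sketch of building the same INSERT statement with quoting handled explicitly; the helper names `sql_quote` and `make_insert` are made up for illustration, and the backslash escaping assumes MySQL's default mode in which backslash is an escape character.

```shell
# sql_quote VALUE (hypothetical helper): print VALUE as a MySQL string
# literal, doubling backslashes and embedded single quotes.
sql_quote() {
  printf "'%s'" "$(printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e "s/'/''/g")"
}

# make_insert CLIENT UNIQUECODE DEVICE VERSIONCODE INTERFACE CREATETIME
# (hypothetical helper): print one INSERT statement for the table used
# in this article.
make_insert() {
  printf 'INSERT INTO `fight_cancer`.`tb_statistics_base_info` (`client`,`uniquecode`,`device`,`versioncode`,`interface`,`createtime`) VALUES (%s,%s,%s,%s,%s,%s);\n' \
    "$(sql_quote "$1")" "$(sql_quote "$2")" "$(sql_quote "$3")" \
    "$(sql_quote "$4")" "$(sql_quote "$5")" "$(sql_quote "$6")"
}

# Example call with made-up values.
make_insert ios abc123 "Tom's iPhone" 12 personal_checkupdate '2014-05-19 10:00:00'
```

This trades the single long pipeline for one function call per log line, which is slower but keeps the quoting rules in one place.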

5. Write these SQL statements to a .sql file.

grep "personal/checkupdate.json请求开始" /home/logs/fight_cancer_interface/info.2014-05-18.log|grep "client="|grep "uniquecode="|grep "device="|grep "versioncode="|awk -F"请求开始--:" '{print $1,$2}'|awk -F"INFO com.wyqj.fightcancer.intercepter.LogInterceptor - /fight_cancer_interface/" '{print $1,$2}'|awk -F".json" '{print $1,$2}'|awk -F"[" '{print $1,"@@@###",$2}'|awk -F"]" '{print $1,"@@@###",$2}'|awk -F'@@@###' '{print $1,$3}'|sed 's/personal\/checkupdate/personal_checkupdate/g' | awk -F ",[[:digit:]][[:digit:]][[:digit:]]" '{print $1,$2}'|sed "s/ {/,/g"|sed "s/ personal/,personal/g"|sed "s/}//g"|awk -F"," '{print $6","$3","$5","$7","$2","$1");"}'|sed "s/,/','/g"|sed "s/);/');/g"|sed "s/^/'/g"|sed 's/^/INSERT INTO `fight_cancer`.`tb_statistics_base_info` (`client`,`uniquecode`,`device`,`versioncode`,`interface`,`createtime`) VALUES (/g'|sed "s/client=//g"|sed "s/uniquecode=//g"|sed "s/device=//g" |sed "s/versioncode=//g" > 2014-05-18.sql

6. Import the .sql file into the database.

mysql -uUSERNAME -pPASSWORD DATABASE_NAME < 2014-05-18.sql

7. Put it all together in a script.

#!/bin/bash

#dates=`date +%Y-%m-%d`

dates=`date -d"1 days ago" +%Y-%m-%d`

path_log=/home/logs/fight_cancer_interface/info.$dates.log

path_sql=/home/sql/$dates.sql

grep "personal/checkupdate.json请求开始" "$path_log"|grep "client="|grep "uniquecode="|grep "device="|grep "versioncode="|awk -F"请求开始--:" '{print $1,$2}'|awk -F"INFO com.wyqj.fightcancer.intercepter.LogInterceptor - /fight_cancer_interface/" '{print $1,$2}'|awk -F".json" '{print $1,$2}'|awk -F"[" '{print $1,"@@@###",$2}'|awk -F"]" '{print $1,"@@@###",$2}'|awk -F'@@@###' '{print $1,$3}'|sed 's/personal\/checkupdate/personal_checkupdate/g' | awk -F ",[[:digit:]][[:digit:]][[:digit:]]" '{print $1,$2}'|sed "s/ {/,/g"|sed "s/ personal/,personal/g"|sed "s/}//g"|awk -F"," '{print $6","$3","$5","$7","$2","$1");"}'|sed "s/,/','/g"|sed "s/);/');/g"|sed "s/^/'/g"|sed 's/^/INSERT INTO `fight_cancer`.`tb_statistics_base_info` (`client`,`uniquecode`,`device`,`versioncode`,`interface`,`createtime`) VALUES (/g'|sed "s/client=//g"|sed "s/uniquecode=//g"|sed "s/device=//g" |sed "s/versioncode=//g" > "$path_sql"

mysql -uUSERNAME -pPASSWORD DATABASE_NAME < "$path_sql"

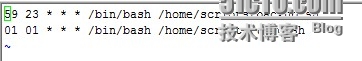
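The script in step 7 runs unattended, so if yesterday's log file is missing it silently writes an empty .sql file and imports it. One way to guard against that is a small check before the pipeline. This is a sketch only; `require_log` is a hypothetical helper name, not part of the original script.

```shell
# require_log PATH (hypothetical helper): return 0 if PATH is a readable,
# non-empty file; otherwise print a message to stderr and return 1.
require_log() {
  if [ ! -r "$1" ] || [ ! -s "$1" ]; then
    echo "log file missing or empty: $1" >&2
    return 1
  fi
}

# Intended use near the top of the script, before the grep pipeline:
#   require_log "$path_log" || exit 1
```

Exiting with a non-zero status also lets cron's mail or any monitoring on the job notice the failure.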

8. Schedule the script as a cron job.

crontab -e

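[Note] I set up two scheduled tasks here. The database backup script runs at 23:59 and the log processing script runs at 01:01. The log processing script must run after the database backup script has finished: if the log file is very large, the processing script takes a long time to run, and a database backup that starts before it has finished may run into problems.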
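For reference, the two schedules described in the note correspond to the crontab time fields `59 23 * * *` and `1 1 * * *`. The snippet below just prints the two lines that would go into the editor opened by `crontab -e`; both script paths are placeholders, since the original does not say where the scripts live.

```shell
# Placeholder script locations (assumptions, adjust to the real paths).
backup_script=/home/scripts/db_backup.sh
log_script=/home/scripts/log_to_mysql.sh

# Print the crontab entries: minute hour day-of-month month day-of-week command.
print_cron_entries() {
  printf '59 23 * * * %s\n' "$backup_script"   # database backup at 23:59
  printf '1 1 * * * %s\n' "$log_script"        # log processing at 01:01
}

print_cron_entries
```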

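This script requires fluency with sed and awk, and it shows how powerful they are. I had previously tried to split the data with the cut command, without success. I hope to study sed and awk in more depth and eventually become proficient at programming with them.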

This article is from the “运维工作笔记” blog. Please be sure to retain this source: http://yyyummy.blog.51cto.com/8842100/1413520