Cluster Tutorial Series: Deploying keepalived+lvs

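Preface: Lately a lot of people in the chat group have been asking how to build a keepalived+lvs architecture and running into all kinds of problems. So I put my other documents aside to write this one first, for those who need it. Note that this is a deployment walkthrough, not a theory primer; if you are building this architecture, you presumably already know the basics. The underlying theory will be covered in a later post.

Test topology:

Environment: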

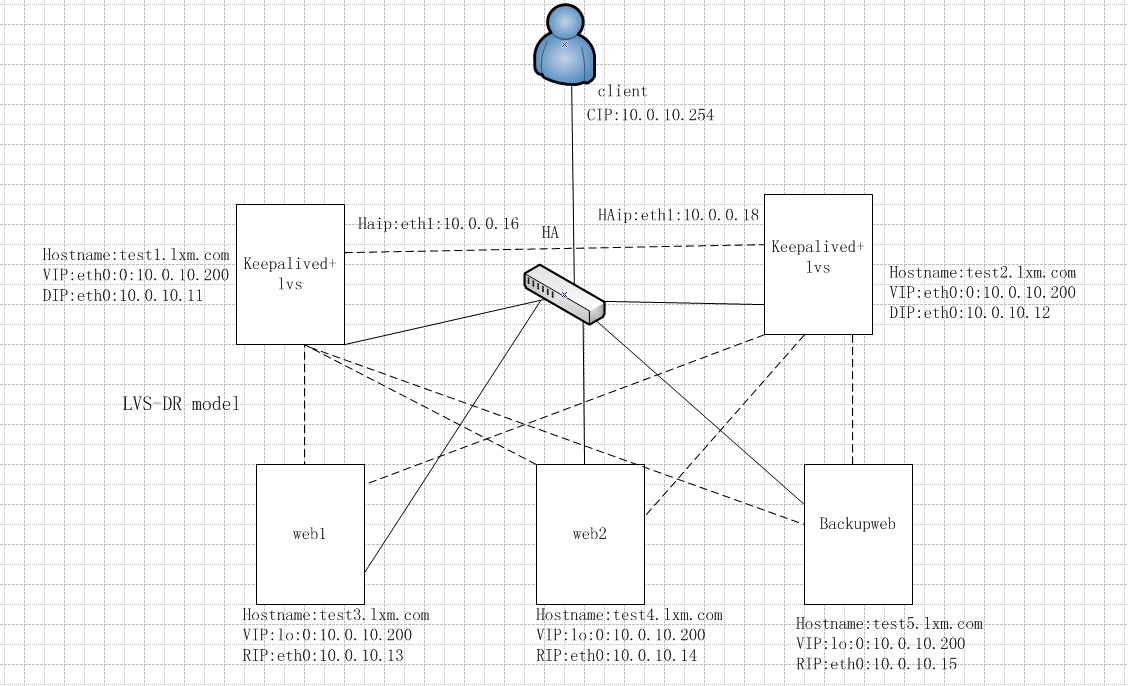

The topology diagram above shows the environment for this lab. Solid lines are real physical connections; dashed lines are logical relationships.

hostname:test1.lxm.com

IP:

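vip:eth0:0:10.0.10.200/24 //in case the notation is unclear: the VIP is configured on eth0:0, an alias (virtual interface) of eth0, with address 10.0.10.200/24

DIP:eth0:10.0.10.11/24

HAip:eth1:10.0.0.16/24 //the HAip is the address on the NIC that carries heartbeat traffic; in production the data NIC and the heartbeat NIC are usually kept separate

function: front-end keepalived+lvs host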

hostname:test2.lxm.com

IP:

vip:eth0:0:10.0.10.200/24

DIP:eth0:10.0.10.12/24

HAip:eth1:10.0.0.18/24

function: front-end keepalived+lvs host

hostname:test3.lxm.com

IP:

vip:lo:0:10.0.10.200/32

RIP:eth0:10.0.10.13/24

function: back-end web server

hostname:test4.lxm.com

IP:

vip:lo:0:10.0.10.200/32

RIP:eth0:10.0.10.14/24

function: back-end web server

hostname:test5.lxm.com

IP:

vip:lo:0:10.0.10.200/32

RIP:eth0:10.0.10.15/24

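function: standby web server. What does that mean? When all the back-end web servers are down, keepalived can be configured to forward requests to this host, so that users at least get a friendly error page; what the page says is up to you.

Also, lvs here uses the DR (direct routing) model, as marked in the topology; the IP addressing alone should make that evident.

Initial setup:

Configure networking on all hosts; note in particular that the two front-end keepalived+lvs hosts have two NICs each. Then disable SELinux and iptables, and configure the yum repositories. Another key point: synchronize the time on all hosts. This is critical to whether your cluster works.

Deploying the architecture:

About deployment: don't dive in and install things at random, only to run a test at the end, get a pile of errors, and have no idea where to start checking. Deploy in layers, and test each layer before moving on to the next.

For the keepalived+lvs architecture discussed here, the deployment is split into these layers:

1. Deploy the three back-end web servers and verify that the web service is reachable;

2. Deploy lvs on each of the two front-end hosts, integrate lvs with the web servers, and verify that each lvs host can serve requests through the back ends;

3. Deploy keepalived on each of the two front-end hosts and integrate keepalived with lvs;

4. Verify that keepalived meets the HA requirements;

With the layers laid out like this, the architecture should look much clearer. Of course, you may well have a better approach.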

1. Deploy the web service on the realservers (back-end web)

Host: test3.lxm.com

[root@test3 /]# rpm -qa | grep httpd //httpd is used here as an example; you can use nginx or another web server if you prefer

[root@test3 /]# yum -y install httpd

[root@test3 /]# cd /var/www/html/

[root@test3 html]# ls

[root@test3 html]# echo "<h1>this is test3.lxm.com</h1>" > index.html

[root@test3 html]# vim /etc/httpd/conf/httpd.conf

Change the ServerName directive in the configuration file to:

ServerName 0.0.0.0:80

[root@test3 html]# service httpd start

Starting httpd: [ OK ]

[root@test3 html]#

[root@test3 html]# ps aux | grep httpd

root 1660 0.0 1.4 175708 3660 ? Ss 11:56 0:00 /usr/sbin/httpd

apache 1662 0.0 0.9 175708 2392 ? S 11:56 0:00 /usr/sbin/httpd

apache 1663 0.0 0.9 175708 2392 ? S 11:56 0:00 /usr/sbin/httpd

apache 1664 0.0 0.9 175708 2392 ? S 11:56 0:00 /usr/sbin/httpd

apache 1665 0.0 0.9 175708 2392 ? S 11:56 0:00 /usr/sbin/httpd

apache 1666 0.0 0.9 175708 2392 ? S 11:56 0:00 /usr/sbin/httpd

apache 1667 0.0 0.9 175708 2392 ? S 11:56 0:00 /usr/sbin/httpd

apache 1668 0.0 0.9 175708 2392 ? S 11:56 0:00 /usr/sbin/httpd

apache 1669 0.0 0.9 175708 2392 ? S 11:56 0:00 /usr/sbin/httpd

root 1679 0.0 0.3 103244 848 pts/0 S+ 11:58 0:00 grep httpd

[root@test3 html]# netstat -nultp | grep httpd

tcp 0 0 :::80 :::* LISTEN 1660/httpd

[root@test3 html]# links --dump http://10.0.10.13

this is test3.lxm.com

[root@test3 html]#

The first web server is now set up.

Host: test4.lxm.com

[root@test4 /]# rpm -qa | grep httpd

[root@test4 /]# yum -y install httpd

[root@test4 /]# cd /var/www/html/

[root@test4 html]# ls

[root@test4 html]# echo "<h1>this is test4.lxm.com</h1>" > index.html

[root@test4 html]# vim /etc/httpd/conf/httpd.conf

Change the ServerName directive in the configuration file to:

ServerName 0.0.0.0:80

[root@test4 html]# service httpd start

Starting httpd: [ OK ]

[root@test4 html]# ps aux | grep httpd

root 1672 0.0 1.5 175708 3668 ? Ss 12:01 0:00 /usr/sbin/httpd

apache 1674 0.0 0.9 175708 2400 ? S 12:01 0:00 /usr/sbin/httpd

apache 1675 0.0 0.9 175708 2400 ? S 12:01 0:00 /usr/sbin/httpd

apache 1676 0.0 0.9 175708 2400 ? S 12:01 0:00 /usr/sbin/httpd

apache 1677 0.0 0.9 175708 2400 ? S 12:01 0:00 /usr/sbin/httpd

apache 1678 0.0 0.9 175708 2400 ? S 12:01 0:00 /usr/sbin/httpd

apache 1679 0.0 0.9 175708 2400 ? S 12:01 0:00 /usr/sbin/httpd

apache 1680 0.0 0.9 175708 2400 ? S 12:01 0:00 /usr/sbin/httpd

apache 1681 0.0 0.9 175708 2400 ? S 12:01 0:00 /usr/sbin/httpd

root 1683 0.0 0.3 103244 848 pts/1 S+ 12:01 0:00 grep httpd

[root@test4 html]# netstat -nultp | grep httpd

tcp 0 0 :::80 :::* LISTEN 1672/httpd

[root@test4 html]# links --dump http://10.0.10.14

this is test4.lxm.com

[root@test4 html]#

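The second web server is now set up and tested.

Note: each web server serves different page content here only to make the test results obvious. In production, load-balanced web servers must serve identical content.

2. Deploy the web service on the standby web server

Host: test5.lxm.com

[root@test5 /]# rpm -qa | grep httpd

[root@test5 /]# yum -y install httpd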

[root@test5 /]# cd /var/www/html/

[root@test5 html]# ls

[root@test5 html]# echo "<h1>this is test5.lxm.com</h1>" > index.html

[root@test5 html]# vim /etc/httpd/conf/httpd.conf

Change the ServerName directive in the configuration file to:

ServerName 0.0.0.0:80

[root@test5 html]# service httpd start

Starting httpd: [ OK ]

[root@test5 html]# ps aux | grep httpd

root 1613 0.0 1.5 175708 3664 ? Ss 12:03 0:00 /usr/sbin/httpd

apache 1615 0.0 0.9 175708 2396 ? S 12:03 0:00 /usr/sbin/httpd

apache 1616 0.0 0.9 175708 2396 ? S 12:03 0:00 /usr/sbin/httpd

apache 1617 0.0 0.9 175708 2396 ? S 12:03 0:00 /usr/sbin/httpd

apache 1618 0.0 0.9 175708 2396 ? S 12:03 0:00 /usr/sbin/httpd

apache 1619 0.0 0.9 175708 2396 ? S 12:03 0:00 /usr/sbin/httpd

apache 1620 0.0 0.9 175708 2396 ? S 12:03 0:00 /usr/sbin/httpd

apache 1621 0.0 0.9 175708 2396 ? S 12:03 0:00 /usr/sbin/httpd

apache 1622 0.0 0.9 175708 2396 ? S 12:03 0:00 /usr/sbin/httpd

root 1624 0.0 0.3 103244 848 pts/0 S+ 12:03 0:00 grep httpd

[root@test5 html]# netstat -nultp | grep httpd

tcp 0 0 :::80 :::* LISTEN 1613/httpd

[root@test5 html]# links --dump http://10.0.10.15

hello world!!!

[root@test5 html]#

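The standby web server is now set up as well.

3. Deploy the lvs environment

1) Install and configure the two lvs directors

Host: test1.lxm.com

[root@test1 /]# grep -i 'ip_vs' /boot/config-2.6.32-431.el6.x86_64 //check whether the running kernel supports lvs; by default the lvs modules are already included in the kernel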

CONFIG_IP_VS=m

CONFIG_IP_VS_IPV6=y

# CONFIG_IP_VS_DEBUG is not set

CONFIG_IP_VS_TAB_BITS=12

CONFIG_IP_VS_PROTO_TCP=y

CONFIG_IP_VS_PROTO_UDP=y

CONFIG_IP_VS_PROTO_AH_ESP=y

CONFIG_IP_VS_PROTO_ESP=y

CONFIG_IP_VS_PROTO_AH=y

CONFIG_IP_VS_PROTO_SCTP=y

CONFIG_IP_VS_RR=m

CONFIG_IP_VS_WRR=m

CONFIG_IP_VS_LC=m

CONFIG_IP_VS_WLC=m

CONFIG_IP_VS_LBLC=m

CONFIG_IP_VS_LBLCR=m

CONFIG_IP_VS_DH=m

CONFIG_IP_VS_SH=m

CONFIG_IP_VS_SED=m

CONFIG_IP_VS_NQ=m

CONFIG_IP_VS_FTP=m

CONFIG_IP_VS_PE_SIP=m

[root@test1 /]#

[root@test1 /]# rpm -qa | grep ipvsadm

[root@test1 /]# yum -y install ipvsadm //install ipvsadm, the user-space management tool for lvs

[root@test1 /]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

[root@test1 /]#

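If you see the output above, ipvsadm is installed correctly.

Note: you may wonder how we can list rules before starting anything. lvs is a kernel feature that the kernel supports by default; ipvsadm is just a user-space management tool, and starting or stopping the ipvsadm service merely loads or flushes rules. It does not affect using the ipvsadm command for management. iptables behaves the same way.

Configure the VIP: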

[root@test1 /]# ifconfig eth0:0 10.0.10.200 netmask 255.255.255.0

[root@test1 /]# ifconfig eth0:0

eth0:0 Link encap:Ethernet HWaddr 08:00:27:ED:EF:33

inet addr:10.0.10.200 Bcast:10.0.10.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

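As shown above, the VIP is configured.

Configure the LVS rules:

As described in the environment section, the DR model is used. Since the focus of this document is testing keepalived, the plain rr (round-robin) scheduler is used for load balancing.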

[root@test1 /]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

[root@test1 /]# ipvsadm -A -t 10.0.10.200:80 -s rr

[root@test1 /]# ipvsadm -a -t 10.0.10.200:80 -r 10.0.10.13:80 -g

[root@test1 /]# ipvsadm -a -t 10.0.10.200:80 -r 10.0.10.14:80 -g

[root@test1 /]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 0

-> 10.0.10.14:80 Route 1 0 0

[root@test1 /]# service ipvsadm save

[root@test1 /]#

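As shown above, the lvs rules are set correctly. One caveat is lvs persistence: if persistent connections are enabled, requests from a client may keep hitting the same server for a while, so keep that in mind when testing.

That completes the configuration of the first director.

Host 2: test2.lxm.com

[root@test2 /]# grep -i 'ip_vs' /boot/config-2.6.32-431.el6.x86_64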

CONFIG_IP_VS=m

CONFIG_IP_VS_IPV6=y

# CONFIG_IP_VS_DEBUG is not set

CONFIG_IP_VS_TAB_BITS=12

CONFIG_IP_VS_PROTO_TCP=y

CONFIG_IP_VS_PROTO_UDP=y

CONFIG_IP_VS_PROTO_AH_ESP=y

CONFIG_IP_VS_PROTO_ESP=y

CONFIG_IP_VS_PROTO_AH=y

CONFIG_IP_VS_PROTO_SCTP=y

CONFIG_IP_VS_RR=m

CONFIG_IP_VS_WRR=m

CONFIG_IP_VS_LC=m

CONFIG_IP_VS_WLC=m

CONFIG_IP_VS_LBLC=m

CONFIG_IP_VS_LBLCR=m

CONFIG_IP_VS_DH=m

CONFIG_IP_VS_SH=m

CONFIG_IP_VS_SED=m

CONFIG_IP_VS_NQ=m

CONFIG_IP_VS_FTP=m

CONFIG_IP_VS_PE_SIP=m

[root@test2 /]#

[root@test2 /]# rpm -qa | grep ipvsadm

[root@test2 /]# yum -y install ipvsadm

[root@test2 /]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

[root@test2 /]#

Configure the VIP:

[root@test2 /]# ifconfig eth0:0 10.0.10.200 netmask 255.255.255.0

[root@test2 /]# ifconfig eth0:0

eth0:0 Link encap:Ethernet HWaddr 08:00:27:0D:26:B8

inet addr:10.0.10.200 Bcast:10.0.10.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

Configure the LVS rules:

[root@test2 /]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

[root@test2 /]# ipvsadm -A -t 10.0.10.200:80 -s rr

[root@test2 /]# ipvsadm -a -t 10.0.10.200:80 -r 10.0.10.13:80 -g

[root@test2 /]# ipvsadm -a -t 10.0.10.200:80 -r 10.0.10.14:80 -g

[root@test2 /]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 0

-> 10.0.10.14:80 Route 1 0 0

[root@test2 /]# service ipvsadm save

[root@test2 /]#

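The lvs rules are now configured.

2) Configure the back-end realservers

The two back-end realservers are test3.lxm.com and test4.lxm.com. Their configuration is identical, so test3.lxm.com is used as the example:

Host: test3.lxm.com

Configure the VIP: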

[root@test3 /]# ifconfig lo

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:39 errors:0 dropped:0 overruns:0 frame:0

TX packets:39 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:3438 (3.3 KiB) TX bytes:3438 (3.3 KiB)

[root@test3 /]# ifconfig lo:0 10.0.10.200 netmask 255.255.255.255

[root@test3 /]# ifconfig lo:0

lo:0 Link encap:Local Loopback

inet addr:10.0.10.200 Mask:255.255.255.255

UP LOOPBACK RUNNING MTU:16436 Metric:1

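Note: why configure the VIP on the loopback interface? You can put it on a NIC if you like, but the loopback interface is built into the system: no matter how a NIC fails, loopback stays up unless the whole system goes down.

Configure the ARP settings:

[root@test3 /]# sysctl -w net.ipv4.conf.all.arp_announce=2 >> /etc/sysctl.conf //sysctl -w prints "key = value"; appending that output to /etc/sysctl.conf makes the setting persistent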

[root@test3 /]# sysctl -w net.ipv4.conf.all.arp_ignore=1 >> /etc/sysctl.conf

[root@test3 /]# sysctl -p

net.ipv4.ip_forward = 0

net.ipv4.conf.default.rp_filter = 1

net.ipv4.conf.default.accept_source_route = 0

kernel.sysrq = 0

kernel.core_uses_pid = 1

net.ipv4.tcp_syncookies = 1

error: "net.bridge.bridge-nf-call-ip6tables" is an unknown key

error: "net.bridge.bridge-nf-call-iptables" is an unknown key

error: "net.bridge.bridge-nf-call-arptables" is an unknown key

kernel.msgmnb = 65536

kernel.msgmax = 65536

kernel.shmmax = 68719476736

kernel.shmall = 4294967296

net.ipv4.conf.all.arp_announce = 2

net.ipv4.conf.all.arp_ignore = 2

net.ipv4.conf.all.arp_announce = 2

net.ipv4.conf.all.arp_ignore = 1

Check:

[root@test3 /]# cd /proc/sys/net/ipv4/conf/all/

[root@test3 all]# ls

accept_local arp_announce bootp_relay forwarding promote_secondaries rp_filter src_valid_mark

accept_redirects arp_filter disable_policy log_martians proxy_arp secure_redirects tag

accept_source_route arp_ignore disable_xfrm mc_forwarding proxy_arp_pvlan send_redirects

arp_accept arp_notify force_igmp_version medium_id route_localnet shared_media

[root@test3 all]# cat arp_announce

2

[root@test3 all]# cat arp_ignore

1

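As shown above, the ARP kernel parameters are set.

Note: what the ARP parameters mean:

arp_ignore: defines how the host responds to incoming ARP requests;

0: default; reply for any local IP address, whichever interface it is configured on;

1: reply only if the target IP address is configured on the interface the request arrived on;

Use 1 in the cluster

arp_announce: defines which source address the host uses when announcing itself in ARP requests;

0: use any local address configured on any interface;

1: try to avoid source addresses that are not in the target's subnet;

2: always use the best local address for the target, i.e. one that matches the network of the outgoing interface;

Use 2 in the cluster

Why suppress ARP? To avoid conflicts. At the lowest level, hosts on a network talk to each other by MAC address, and MAC addresses are resolved through ARP. Without ARP suppression on the back-end servers, when something asks for the MAC address of 10.0.10.200, every host that has 10.0.10.200 configured would answer, and chaos follows.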
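Appending the output of `sysctl -w` to /etc/sysctl.conf adds another line on every run, which would explain why arp_announce and arp_ignore each appear twice in the `sysctl -p` output above. A minimal sketch of an idempotent alternative follows; the helper name `set_sysctl_conf` and its arguments are hypothetical, not part of any standard tool:

```shell
#!/bin/bash
# Hypothetical helper: make sure FILE contains exactly one "KEY = VALUE" line.
# Usage: set_sysctl_conf FILE KEY VALUE
set_sysctl_conf() {
    local file="$1" key="$2" value="$3" tmp
    tmp="$(mktemp)" || return 1
    if [ -f "$file" ]; then
        # Copy every line except existing assignments of KEY.
        awk -v k="$key" '{
            n = index($0, "=")
            name = (n ? substr($0, 1, n - 1) : $0)
            gsub(/[ \t]/, "", name)
            if (name != k) print
        }' "$file" > "$tmp"
    fi
    # Append the desired setting once.
    printf '%s = %s\n' "$key" "$value" >> "$tmp"
    # Overwrite in place so the original file's permissions are kept.
    cat "$tmp" > "$file" && rm -f "$tmp"
}

# Example (as root on each realserver):
#   set_sysctl_conf /etc/sysctl.conf net.ipv4.conf.all.arp_ignore 1
#   set_sysctl_conf /etc/sysctl.conf net.ipv4.conf.all.arp_announce 2
#   sysctl -p
```

Running the helper repeatedly leaves a single line per key, so `sysctl -p` always applies the value you set last.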

Configure the host route:

[root@test3 /]# route add -host 10.0.10.200 dev lo:0

[root@test3 /]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

10.0.10.200 0.0.0.0 255.255.255.255 UH 0 0 0 lo

10.0.10.0 0.0.0.0 255.255.255.0 U 0 0 0 eth0

169.254.0.0 0.0.0.0 255.255.0.0 U 1002 0 0 eth0

0.0.0.0 10.0.10.254 0.0.0.0 UG 0 0 0 eth0

[root@test3 /]#

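As shown above, the host route is configured.

Note: why set a host route?

When a client request arrives with destination 10.0.10.200, the front-end director receives it and forwards it to a back-end realserver according to the lvs rules. After handling the request, the realserver sends the response directly to the client, which it can do because the VIP is configured locally. But what is the source address of that response? The client sent its request to 10.0.10.200, so it will only accept a response whose source address is 10.0.10.200. This is where the host route comes in: according to the routing table, traffic for 10.0.10.200 goes out through lo:0, and the address on lo:0 is exactly 10.0.10.200, so the source address is 10.0.10.200 and the client accepts the response.

That was a lot of talk; for more detail on lvs, see the lvs series.

That completes the configuration of one realserver.

Note: every realserver needs the same configuration, so the remaining ones are not repeated here. The standby web server must also be configured the same way as the realservers.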
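Since every realserver and the standby web server need the same three steps, they can be collected into one script. The sketch below only repeats the commands already used above; the `run` wrapper and the `DRY_RUN` variable are assumptions added for illustration, so the commands are printed rather than executed unless `DRY_RUN=0` is set:

```shell
#!/bin/bash
# Sketch: DR-mode realserver setup, using the same commands as the steps above.
VIP="${VIP:-10.0.10.200}"

# Print the command when DRY_RUN is unset or not 0; execute it when DRY_RUN=0.
run() {
    if [ "${DRY_RUN:-1}" = "0" ]; then
        "$@"
    else
        echo "$*"
    fi
}

realserver_setup() {
    # Suppress ARP for the VIP before bringing it up on loopback.
    run sysctl -w net.ipv4.conf.all.arp_ignore=1
    run sysctl -w net.ipv4.conf.all.arp_announce=2
    # VIP on lo:0 with a /32 mask, then the host route.
    run ifconfig lo:0 "$VIP" netmask 255.255.255.255
    run route add -host "$VIP" dev lo:0
}

# Example:
#   realserver_setup              # show what would be run
#   DRY_RUN=0 realserver_setup    # apply the settings (as root)
```

Settings applied this way do not survive a reboot; the sysctl values still need to be written to /etc/sysctl.conf as described above.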

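3) Test the lvs architecture

Important: test the lvs directors one at a time. If several directors hold the VIP at once and all answer requests, things break. The same applies under keepalived high availability: only one lvs director is active at any given time.

Test: test1.lxm.com

(Take the test2.lxm.com director out of service; removing its VIP is enough)

To show the results, the test is run from the standby web server; you can also test from a browser: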

[root@test1 /]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 0

-> 10.0.10.14:80 Route 1 0 0

[root@test5 /]# links --dump http://10.0.10.200

this is test3.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test4.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test3.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test4.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test3.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test4.lxm.com

[root@test5 /]#

//In case anyone wonders whether this plain-text output was typed by hand: believe what you like.

[root@test1 /]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 3

-> 10.0.10.14:80 Route 1 0 3

[root@test1 /]#

As shown above, I sent 6 requests from the client, and the director shows them split evenly between the two back ends.
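The even split can also be checked from the client side by counting which back end answered each request. A small sketch, assuming each page body ends in the hostname as in the test pages above; `tally_backends` is a hypothetical helper name:

```shell
#!/bin/bash
# Read response bodies on stdin and print "<last word> <count>" per back end.
tally_backends() {
    # Keep the last word of every non-blank line, then count duplicates.
    awk 'NF { print $NF }' | sort | uniq -c | awk '{ print $2, $1 }'
}

# Example (run on the client, e.g. test5):
#   for i in 1 2 3 4 5 6; do links --dump http://10.0.10.200; done | tally_backends
```

With the rr scheduler and no persistence, the counts for the two realservers should differ by at most one.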

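The test of test2.lxm.com is not shown here; please run it yourself.

Finally, stop ipvsadm on the directors and disable it at boot:

//This is important: under keepalived high availability the lvs rules are managed by keepalived. All the steps above were only to verify that the LVS environment works on these systems.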

[root@test2 /]# ifconfig eth0:0 down

[root@test2 /]# service ipvsadm stop

ipvsadm: Clearing the current IPVS table: [ OK ]

ipvsadm: Unloading modules: [ OK ]

[root@test2 /]# chkconfig ipvsadm off

[root@test2 /]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

[root@test2 /]#

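The output above is from test2.lxm.com; do the same on test1.lxm.com: stop ipvsadm and remove the VIP.

That completes the lvs testing.

4. Deploy keepalived

keepalived runs on the same hosts as the lvs directors, so it must be installed on both test1.lxm.com and test2.lxm.com.

1) Install keepalived

Installing keepalived is fairly simple, since it is a lightweight high-availability tool. One thing to watch: compiling it requires the kernel source headers.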

#yum -y install kernel-devel kernel-headers libnl-devel

#cd /root/soft

#tar -zxvf keepalived-1.2.7.tar.gz

#cd keepalived-1.2.7

#mkdir /usr/local/keepalived

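#./configure --prefix=/usr/local/keepalived --mandir=/usr/local/share/man --with-kernel-dir=/usr/src/kernels/2.6.32-431.el6.x86_64 //the kernel directory must match the installed kernel-devel package; 2.6.32-431 is the kernel version shown earlier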

After configure finishes, the following summary is printed:

Keepalived configuration

------------------------

Keepalived version : 1.2.7

Compiler : gcc

Compiler flags : -g -O2

Extra Lib : -lpopt -lssl -lcrypto -lnl

Use IPVS Framework : Yes

IPVS sync daemon support : Yes

IPVS use libnl : Yes

Use VRRP Framework : Yes

Use VRRP VMAC : Yes

SNMP support : No

Use Debug flags : No

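Note: the summary lists the features this keepalived build supports. VRRP is keepalived's core function and is always included, but the IPVS module is optional; to support LVS it must show Yes here.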

#make

#make install

#make clean

If there are no errors, keepalived is now compiled and installed.

Follow-up steps:

#cd /usr/local/keepalived

#cp -p etc/rc.d/init.d/keepalived /etc/rc.d/init.d

#cp -p etc/sysconfig/keepalived /etc/sysconfig/

#vim /etc/profile

export PATH=$PATH:/usr/local/keepalived/sbin

#vim /etc/rc.d/init.d/keepalived

Edit this script so that the executable and configuration file paths are correct:

keepalivebin=${keepalivebin:-/usr/local/keepalived/sbin/keepalived}

config=${config:-/usr/local/keepalived/etc/keepalived/keepalived.conf}

Start keepalived:

[root@test2 init.d]# service keepalived start

Starting keepalived: [ OK ]

[root@test2 init.d]# ps aux | grep keepalived

root 3056 0.0 0.3 42140 976 ? Ss 15:24 0:00 /usr/local/keepalived/sbin/keepalived -D -f /usr/local/keepalived/etc/keepalived/keepalived.conf

root 3058 0.5 0.9 44376 2292 ? S 15:24 0:00 /usr/local/keepalived/sbin/keepalived -D -f /usr/local/keepalived/etc/keepalived/keepalived.conf

root 3059 0.2 0.6 44244 1628 ? S 15:24 0:00 /usr/local/keepalived/sbin/keepalived -D -f /usr/local/keepalived/etc/keepalived/keepalived.conf

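As shown above, keepalived is installed and starts successfully. If you check the log now (tail -f /var/log/messages) you will see a stream of errors; ignore them for now, they are not relevant yet.

keepalived is now installed. Install it on the other keepalived host in the same way.

2) The keepalived.conf configuration file

Host: test1.lxm.com: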

! Configuration File for keepalived

global_defs {

notification_email {

root@localhost

}

notification_email_from keepalive@localhost

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id LVS_DEVEL

}

vrrp_sync_group VG_1 {

group {

VI_1

}

}

vrrp_instance VI_1 {

state BACKUP

interface eth1

virtual_router_id 51

priority 100

advert_int 1

nopreempt

authentication {

auth_type PASS

auth_pass keepalivepass

}

virtual_ipaddress {

10.0.10.200/24 dev eth0 label eth0:0

}

}

virtual_server 10.0.10.200 80 {

delay_loop 3

lb_algo rr

lb_kind DR

nat_mask 255.255.255.0

# persistence_timeout 50

protocol TCP

real_server 10.0.10.13 80 {

weight 1

HTTP_GET {

url {

path /

status_code 200

}

connect_port 80

connect_timeout 1

nb_get_retry 3

delay_before_retry 2

}

}

real_server 10.0.10.14 80 {

weight 1

HTTP_GET {

url {

path /

status_code 200

}

connect_port 80

connect_timeout 1

nb_get_retry 3

delay_before_retry 2

}

}

}

Host: test2.lxm.com:

! Configuration File for keepalived

global_defs {

notification_email {

root@localhost

}

notification_email_from keepalive@localhost

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id LVS_DEVEL

}

vrrp_sync_group VG_1 {

group {

VI_1

}

}

vrrp_instance VI_1 {

state BACKUP

interface eth1

virtual_router_id 51

priority 99

nopreempt

advert_int 1

authentication {

auth_type PASS

auth_pass keepalivepass

}

virtual_ipaddress {

10.0.10.200/24 dev eth0 label eth0:0

}

}

virtual_server 10.0.10.200 80 {

delay_loop 3

lb_algo rr

lb_kind DR

nat_mask 255.255.255.0

# persistence_timeout 50

protocol TCP

real_server 10.0.10.13 80 {

weight 1

HTTP_GET {

url {

path /

status_code 200

}

connect_port 80

connect_timeout 1

nb_get_retry 3

delay_before_retry 2

}

}

real_server 10.0.10.14 80 {

weight 1

HTTP_GET {

url {

path /

status_code 200

}

connect_port 80

connect_timeout 1

nb_get_retry 3

delay_before_retry 2

}

}

}

I won't explain the configuration file here, so that you can paste it directly; the explanation will get its own blog post.
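Note that neither configuration above references test5.lxm.com, the standby web server. keepalived provides a `sorry_server` directive inside `virtual_server` for this purpose: traffic is sent to it when all real servers have failed their health checks. The sketch below generates a `virtual_server` block with that line added; the function name `gen_virtual_server` is hypothetical, and the check parameters are simply copied from the configuration above:

```shell
#!/bin/bash
# Hypothetical generator for a keepalived virtual_server block.
# Usage: gen_virtual_server VIP PORT SORRY_IP REAL_IP...
gen_virtual_server() {
    local vip="$1" port="$2" sorry="$3" rip
    shift 3
    printf 'virtual_server %s %s {\n' "$vip" "$port"
    printf '    delay_loop 3\n    lb_algo rr\n    lb_kind DR\n'
    printf '    nat_mask 255.255.255.0\n    protocol TCP\n'
    # Fallback target used when no real server passes its health check.
    printf '    sorry_server %s %s\n' "$sorry" "$port"
    for rip in "$@"; do
        printf '    real_server %s %s {\n' "$rip" "$port"
        printf '        weight 1\n'
        printf '        HTTP_GET {\n'
        printf '            url {\n                path /\n                status_code 200\n            }\n'
        printf '            connect_port %s\n' "$port"
        printf '            connect_timeout 1\n            nb_get_retry 3\n            delay_before_retry 2\n'
        printf '        }\n    }\n'
    done
    printf '}\n'
}

# Example:
#   gen_virtual_server 10.0.10.200 80 10.0.10.15 10.0.10.13 10.0.10.14
```

The generated block would replace the `virtual_server` section on both directors; the `global_defs` and `vrrp_instance` sections stay as they are.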

3) Verify that keepalived starts and manages the lvs and VIP resources

[root@test1 keepalived]# ps aux | grep keepalived

root 3696 0.0 0.3 42140 976 ? Ss 15:57 0:00 /usr/local/keepalived/sbin/keepalived -D -f /usr/local/keepalived/etc/keepalived/keepalived.conf

root 3698 0.0 0.9 46440 2336 ? S 15:57 0:00 /usr/local/keepalived/sbin/keepalived -D -f /usr/local/keepalived/etc/keepalived/keepalived.conf

root 3699 0.0 0.6 46316 1684 ? S 15:57 0:00 /usr/local/keepalived/sbin/keepalived -D -f /usr/local/keepalived/etc/keepalived/keepalived.conf

root 3701 0.0 0.3 103244 844 pts/0 S+ 16:00 0:00 grep keepalived

[root@test1 keepalived]#

[root@test2 keepalived]# service keepalived start

Starting keepalived: [ OK ]

[root@test2 keepalived]# ps aux | grep keepalived

root 3220 0.0 0.3 42140 976 ? Ss 15:58 0:00 /usr/local/keepalived/sbin/keepalived -D -f /usr/local/keepalived/etc/keepalived/keepalived.conf

root 3222 0.0 0.9 44368 2296 ? S 15:58 0:00 /usr/local/keepalived/sbin/keepalived -D -f /usr/local/keepalived/etc/keepalived/keepalived.conf

root 3223 0.0 0.6 44244 1636 ? S 15:58 0:00 /usr/local/keepalived/sbin/keepalived -D -f /usr/local/keepalived/etc/keepalived/keepalived.conf

root 3225 0.0 0.3 103244 844 pts/0 S+ 16:00 0:00 grep keepalived

[root@test2 keepalived]#

The output above shows that keepalived is running on both hosts.

Note: watch the hostname in the prompts; it changes between commands

Check the logs:

Host: test1.lxm.com

[root@test1 log]# tail -f /var/log/messages

Sep 2 15:57:58 test1 Keepalived[3695]: Starting Keepalived v1.2.7 (09/02,2014)

Sep 2 15:57:58 test1 Keepalived[3696]: Starting Healthcheck child process, pid=3698

Sep 2 15:57:58 test1 Keepalived[3696]: Starting VRRP child process, pid=3699

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: Interface queue is empty

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: No such interface, eth1

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: Netlink reflector reports IP 10.0.10.11 added

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: Netlink reflector reports IP 10.0.0.16 added //the IP addresses of the system's two NICs are detected here

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: Netlink reflector reports IP fe80::a00:27ff:feed:ef33 added

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: Netlink reflector reports IP fe80::a00:27ff:fe36:2415 added

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: Registering Kernel netlink reflector

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: Registering Kernel netlink command channel

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: Registering gratuitous ARP shared channel

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: Interface queue is empty

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: No such interface, eth1

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: Netlink reflector reports IP 10.0.10.11 added

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: Netlink reflector reports IP 10.0.0.16 added

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: Netlink reflector reports IP fe80::a00:27ff:feed:ef33 added

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: Netlink reflector reports IP fe80::a00:27ff:fe36:2415 added

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: Registering Kernel netlink reflector

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: Registering Kernel netlink command channel

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: Opening file '/usr/local/keepalived/etc/keepalived/keepalived.conf'.

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: Opening file '/usr/local/keepalived/etc/keepalived/keepalived.conf'.

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: Truncating auth_pass to 8 characters

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: Configuration is using : 65373 Bytes

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: Using LinkWatch kernel netlink reflector...

Sep 2 15:57:58 test1 Keepalived_vrrp[3699]: VRRP sockpool: [ifindex(3), proto(112), fd(10,11)]

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: Configuration is using : 16462 Bytes

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: Using LinkWatch kernel netlink reflector...

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: Activating healthchecker for service [10.0.10.13]:80

Sep 2 15:57:58 test1 Keepalived_healthcheckers[3698]: Activating healthchecker for service [10.0.10.14]:80 //health checking of the back-end servers is activated here

Sep 2 15:57:59 test1 Keepalived_vrrp[3699]: VRRP_Instance(VI_1) Transition to MASTER STATE

Sep 2 15:58:00 test1 Keepalived_vrrp[3699]: VRRP_Instance(VI_1) Entering MASTER STATE //this host has been elected master

Sep 2 15:58:00 test1 Keepalived_vrrp[3699]: VRRP_Instance(VI_1) setting protocol VIPs.

Sep 2 15:58:00 test1 Keepalived_vrrp[3699]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 10.0.10.200

Sep 2 15:58:00 test1 Keepalived_vrrp[3699]: VRRP_Group(VG_1) Syncing instances to MASTER state

Sep 2 15:58:00 test1 Keepalived_healthcheckers[3698]: Netlink reflector reports IP 10.0.10.200 added //by this point the sync group has moved to MASTER state and the VIP has been added

Sep 2 15:58:05 test1 Keepalived_vrrp[3699]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 10.0.10.200

Host: test2.lxm.com

[root@test2 ~]# tail -f /var/log/messages

Sep 2 15:58:03 test2 Keepalived[3219]: Starting Keepalived v1.2.7 (09/02,2014)

Sep 2 15:58:03 test2 Keepalived[3220]: Starting Healthcheck child process, pid=3222

Sep 2 15:58:03 test2 Keepalived[3220]: Starting VRRP child process, pid=3223

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: Interface queue is empty

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: No such interface, eth1

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: Netlink reflector reports IP 10.0.10.12 added

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: Netlink reflector reports IP 10.0.0.18 added

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: Netlink reflector reports IP fe80::a00:27ff:fe0d:26b8 added

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: Netlink reflector reports IP fe80::a00:27ff:fe35:e1f4 added

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: Registering Kernel netlink reflector

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: Registering Kernel netlink command channel

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: Registering gratuitous ARP shared channel

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: Opening file '/usr/local/keepalived/etc/keepalived/keepalived.conf'.

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: Interface queue is empty

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: Truncating auth_pass to 8 characters

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: Configuration is using : 65388 Bytes

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: Using LinkWatch kernel netlink reflector...

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: No such interface, eth1

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: VRRP_Instance(VI_1) Entering BACKUP STATE //this host became backup

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: Netlink reflector reports IP 10.0.10.12 added

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: Netlink reflector reports IP 10.0.0.18 added

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: Netlink reflector reports IP fe80::a00:27ff:fe0d:26b8 added

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: Netlink reflector reports IP fe80::a00:27ff:fe35:e1f4 added

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: Registering Kernel netlink reflector

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: Registering Kernel netlink command channel

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: Opening file '/usr/local/keepalived/etc/keepalived/keepalived.conf'.

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: Configuration is using : 16477 Bytes

Sep 2 15:58:03 test2 Keepalived_vrrp[3223]: VRRP sockpool: [ifindex(3), proto(112), fd(10,11)]

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: Using LinkWatch kernel netlink reflector...

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: Activating healthchecker for service [10.0.10.13]:80

Sep 2 15:58:03 test2 Keepalived_healthcheckers[3222]: Activating healthchecker for service [10.0.10.14]:80

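As shown above, the keepalived heartbeat has been negotiated successfully, and master and backup have been chosen by priority. Next, verify that the resources are brought up: the master should show both the VIP and the LVS rules, while the backup should show only the LVS rules and no VIP.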

master:test1.lxm.com:

[root@test1 keepalived]# ifconfig eth0:0

eth0:0 Link encap:Ethernet HWaddr 08:00:27:ED:EF:33

inet addr:10.0.10.200 Bcast:0.0.0.0 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

[root@test1 keepalived]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 0

-> 10.0.10.14:80 Route 1 0 0

[root@test1 keepalived]#

backup:test2.lxm.com

[root@test2 keepalived]# ifconfig eth0:0

eth0:0 Link encap:Ethernet HWaddr 08:00:27:0D:26:B8

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

[root@test2 keepalived]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 0

-> 10.0.10.14:80 Route 1 0 0

[root@test2 keepalived]#

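As the output shows, this confirms the expectation above.

The logs above also show keepalived sending health checks to the back-end servers. That is because health checking is configured in the lvs section of the keepalived configuration file. Check the access log on a back-end web server to confirm that the probes arrive: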

[root@test4 all]# cd /etc/httpd/logs/

[root@test4 logs]# ls

access_log error_log

[root@test4 logs]# tail -f access_log

10.0.10.11 - - [02/Sep/2014:16:12:05 +0800] "GET / HTTP/1.0" 200 31 "-" "KeepAliveClient"

10.0.10.12 - - [02/Sep/2014:16:12:05 +0800] "GET / HTTP/1.0" 200 31 "-" "KeepAliveClient"

10.0.10.11 - - [02/Sep/2014:16:12:10 +0800] "GET / HTTP/1.0" 200 31 "-" "KeepAliveClient"

10.0.10.12 - - [02/Sep/2014:16:12:10 +0800] "GET / HTTP/1.0" 200 31 "-" "KeepAliveClient"

10.0.10.11 - - [02/Sep/2014:16:12:15 +0800] "GET / HTTP/1.0" 200 31 "-" "KeepAliveClient"

10.0.10.12 - - [02/Sep/2014:16:12:15 +0800] "GET / HTTP/1.0" 200 31 "-" "KeepAliveClient"

10.0.10.11 - - [02/Sep/2014:16:12:20 +0800] "GET / HTTP/1.0" 200 31 "-" "KeepAliveClient"

10.0.10.12 - - [02/Sep/2014:16:12:20 +0800] "GET / HTTP/1.0" 200 31 "-" "KeepAliveClient"

10.0.10.11 - - [02/Sep/2014:16:12:25 +0800] "GET / HTTP/1.0" 200 31 "-" "KeepAliveClient"

10.0.10.12 - - [02/Sep/2014:16:12:25 +0800] "GET / HTTP/1.0" 200 31 "-" "KeepAliveClient"

10.0.10.11 - - [02/Sep/2014:16:12:30 +0800] "GET / HTTP/1.0" 200 31 "-" "KeepAliveClient"

10.0.10.12 - - [02/Sep/2014:16:12:30 +0800] "GET / HTTP/1.0" 200 31 "-" "KeepAliveClient"

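This confirms that keepalived's health checking of the back-end servers is active. You will see these log entries keep scrolling; how often depends on the check settings you configured.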
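To see how often each director probes a back end, the health-check requests can be counted per source address. A minimal sketch, assuming the access log format shown above, where the first field is the client IP and the user agent is "KeepAliveClient"; `count_probes` is a hypothetical helper name:

```shell
#!/bin/bash
# Count keepalived health-check requests per source IP in an Apache access log.
count_probes() {
    awk '/"KeepAliveClient"/ { n[$1]++ } END { for (ip in n) print ip, n[ip] }' | sort
}

# Example (on a realserver):
#   count_probes < /etc/httpd/logs/access_log
```

Both director addresses (10.0.10.11 and 10.0.10.12) should appear with similar counts, since the backup runs the same health checks as the master.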

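At this point keepalived is installed, configured, and started, which is a first indication that the keepalived+lvs architecture is up. How well it works remains to be tested below.

5. Full test of keepalived+lvs

1) With the environment built above, verify that keepalived+lvs serves web requests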

[root@test5 /]# links --dump http://10.0.10.200

this is test4.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test3.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test4.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test3.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test4.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test3.lxm.com

[root@test5 /]#

[root@test1 keepalived]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 3

-> 10.0.10.14:80 Route 1 0 3

[root@test1 keepalived]#

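As the test shows, access works without any problem, so the environment built so far is sound.

2) Test keepalived automatic failover

There are three main failover scenarios:

Scenario 1: the keepalived service dies

In the environment above, the master is test1.lxm.com and the backup is test2.lxm.com. Now simulate the keepalived service on test1.lxm.com dying

[root@test1 keepalived]# service keepalived stop //stop keepalived on test1 to simulate a keepalived failure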

Stopping keepalived: [ OK ]

[root@test1 keepalived]#

Check the log:

Sep 2 16:27:26 test1 Keepalived[3696]: Stopping Keepalived v1.2.7 (09/02,2014)

Sep 2 16:27:26 test1 Keepalived_vrrp[3699]: VRRP_Instance(VI_1) sending 0 priority

Sep 2 16:27:26 test1 kernel: IPVS: __ip_vs_del_service: enter

Sep 2 16:27:26 test1 Keepalived_vrrp[3699]: VRRP_Instance(VI_1) removing protocol VIPs.

The log shows that keepalived stopped and removed the VIP

Check the resources:

[root@test1 keepalived]# ifconfig

eth0 Link encap:Ethernet HWaddr 08:00:27:ED:EF:33

inet addr:10.0.10.11 Bcast:10.0.10.255 Mask:255.255.255.0

inet6 addr: fe80::a00:27ff:feed:ef33/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:13896 errors:0 dropped:0 overruns:0 frame:0

TX packets:13783 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:1768054 (1.6 MiB) TX bytes:1575730 (1.5 MiB)

eth1 Link encap:Ethernet HWaddr 08:00:27:36:24:15

inet addr:10.0.0.16 Bcast:10.0.0.255 Mask:255.255.255.0

inet6 addr: fe80::a00:27ff:fe36:2415/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:5 errors:0 dropped:0 overruns:0 frame:0

TX packets:2174 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:300 (300.0 b) TX bytes:117492 (114.7 KiB)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:348 errors:0 dropped:0 overruns:0 frame:0

TX packets:348 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:27544 (26.8 KiB) TX bytes:27544 (26.8 KiB)

[root@test1 keepalived]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

[root@test1 keepalived]#

As the output shows, the VIP is gone and the ipvsadm rules have been cleared.

Now look at host test2.lxm.com:

Sep 2 16:27:25 test2 Keepalived_vrrp[3223]: VRRP_Instance(VI_1) Transition to MASTER STATE

Sep 2 16:27:25 test2 Keepalived_vrrp[3223]: VRRP_Group(VG_1) Syncing instances to MASTER state

Sep 2 16:27:26 test2 Keepalived_vrrp[3223]: VRRP_Instance(VI_1) Entering MASTER STATE

Sep 2 16:27:26 test2 Keepalived_vrrp[3223]: VRRP_Instance(VI_1) setting protocol VIPs.

Sep 2 16:27:26 test2 Keepalived_vrrp[3223]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 10.0.10.200

Sep 2 16:27:26 test2 Keepalived_healthcheckers[3222]: Netlink reflector reports IP 10.0.10.200 added

Sep 2 16:27:31 test2 Keepalived_vrrp[3223]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 10.0.10.200

The log shows that the former backup host has switched to master.

Check the resources:

[root@test2 keepalived]# ifconfig

eth0 Link encap:Ethernet HWaddr 08:00:27:0D:26:B8

inet addr:10.0.10.12 Bcast:10.0.10.255 Mask:255.255.255.0

inet6 addr: fe80::a00:27ff:fe0d:26b8/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:10498 errors:0 dropped:0 overruns:0 frame:0

TX packets:11324 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:1449942 (1.3 MiB) TX bytes:1171364 (1.1 MiB)

eth0:0 Link encap:Ethernet HWaddr 08:00:27:0D:26:B8

inet addr:10.0.10.200 Bcast:0.0.0.0 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

eth1 Link encap:Ethernet HWaddr 08:00:27:35:E1:F4

inet addr:10.0.0.18 Bcast:10.0.0.255 Mask:255.255.255.0

inet6 addr: fe80::a00:27ff:fe35:e1f4/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:2131 errors:0 dropped:0 overruns:0 frame:0

TX packets:239 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:127860 (124.8 KiB) TX bytes:13002 (12.6 KiB)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:87 errors:0 dropped:0 overruns:0 frame:0

TX packets:87 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:7300 (7.1 KiB) TX bytes:7300 (7.1 KiB)

See, the VIP has appeared on eth0:0, so the failover succeeded.
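Scanning the full ifconfig output by eye works, but the same check can be scripted. A minimal sketch follows, assuming the `ip` command from iproute is available on the host; the function name `has_vip` is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: succeed if the given address appears as an IPv4
# address in `ip -o -4 addr show` style output supplied on stdin.
has_vip() {
    local vip="$1"
    awk -v vip="$vip" '
        {
            # Each line looks like: "2: eth0    inet 10.0.10.200/24 ... eth0:0"
            for (i = 1; i < NF; i++) {
                if ($i == "inet") {
                    split($(i + 1), a, "/")
                    if (a[1] == vip) found = 1
                }
            }
        }
        END { exit (found ? 0 : 1) }'
}
```

On either director this could be run as `ip -o -4 addr show | has_vip 10.0.10.200 && echo "this host holds the VIP"`. Only the current master should print the message.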

Now test access from the client again:

[root@test5 /]# date

Tue Sep 2 16:31:37 CST 2014

[root@test5 /]# links --dump http://10.0.10.200

this is test4.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test3.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test4.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test3.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test4.lxm.com

[root@test5 /]# links --dump http://10.0.10.200

this is test3.lxm.com

[root@test5 /]#

[root@test2 keepalived]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 3

-> 10.0.10.14:80 Route 1 0 3

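As the output shows, access is unaffected. This test shows that keepalived fails over correctly when its own service dies, and it also verifies that access works through the other keepalived+lvs host.

Note: at this point some people may wonder whether the failed keepalived host becomes master again once it is back online, since its priority is higher. It can, as long as preemption is in effect, but preemption is not recommended in production: even a very short switchover causes a blip. By default, if nopreempt is not set, preemption happens automatically based on priority. The configuration file above sets nopreempt, so no preemption happens here.

Case 2: ipvsadm dies

First, see whether stopping the ipvsadm service triggers a failover when no extra measures are in place:

Continuing from the previous environment, the master is now test2.lxm.com, so run the check on that host: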

[root@test2 keepalived]# service ipvsadm stop

ipvsadm: Clearing the current IPVS table: [ OK ]

ipvsadm: Unloading modules: [ OK ]

Check the log:

Sep 2 16:53:55 test2 kernel: IPVS: __ip_vs_del_service: enter

Sep 2 16:53:55 test2 kernel: IPVS: [rr] scheduler unregistered.

Sep 2 16:53:55 test2 kernel: IPVS: ipvs unloaded.

The log only reports that the IPVS scheduler was unregistered and the ipvs module was unloaded; nothing else. Now check the VIP:

[root@test2 keepalived]# ifconfig

eth0 Link encap:Ethernet HWaddr 08:00:27:0D:26:B8

inet addr:10.0.10.12 Bcast:10.0.10.255 Mask:255.255.255.0

inet6 addr: fe80::a00:27ff:fe0d:26b8/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:13432 errors:0 dropped:0 overruns:0 frame:0

TX packets:14776 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:1831388 (1.7 MiB) TX bytes:1499838 (1.4 MiB)

eth0:0 Link encap:Ethernet HWaddr 08:00:27:0D:26:B8

inet addr:10.0.10.200 Bcast:0.0.0.0 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

eth1 Link encap:Ethernet HWaddr 08:00:27:35:E1:F4

inet addr:10.0.0.18 Bcast:10.0.0.255 Mask:255.255.255.0

inet6 addr: fe80::a00:27ff:fe35:e1f4/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:2716 errors:0 dropped:0 overruns:0 frame:0

TX packets:1065 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:162960 (159.1 KiB) TX bytes:57606 (56.2 KiB)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:99 errors:0 dropped:0 overruns:0 frame:0

TX packets:99 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:8300 (8.1 KiB) TX bytes:8300 (8.1 KiB)

[root@test2 keepalived]#

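The output shows that the VIP is still there, so no failover took place. So what can be done?

This is where a special keepalived feature comes in: calling an external script (vrrp_script).

Modify the keepalived.conf configuration file as follows: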

! Configuration File for keepalived

global_defs {

notification_email {

root@localhost

}

notification_email_from keepalive@localhost

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id LVS_DEVEL

}

vrrp_sync_group VG_1 {

group {

VI_1

}

}

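vrrp_script check_lvs { //this block is newly added; it pulls in an external script

script "/usr/local/keepalived/etc/keepalived/lvs_check.sh" //path of the external script

interval 1 //run the script once every second

weight -10 //if the check fails, lower this host's keepalived priority by 10

fall 1 //a single failed check counts as a failure; not recommended in production, where about 3 is better

rise 1 //a single successful check counts as success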

}

vrrp_instance VI_1 {

state BACKUP

interface eth1

virtual_router_id 51

priority 100

advert_int 1

nopreempt

authentication {

auth_type PASS

auth_pass keepalivepass

}

virtual_ipaddress {

10.0.10.200/24 dev eth0 label eth0:0

}

track_script { //also newly added; must be used together with vrrp_script, and it invokes the script defined there

check_lvs

}

}

virtual_server 10.0.10.200 80 {

delay_loop 3

lb_algo rr

lb_kind DR

nat_mask 255.255.255.0

# persistence_timeout 50

protocol TCP

real_server 10.0.10.13 80 {

weight 1

HTTP_GET {

url {

path /

status_code 200

}

connect_port 80

connect_timeout 1

nb_get_retry 3

delay_before_retry 2

}

}

real_server 10.0.10.14 80 {

weight 1

HTTP_GET {

url {

path /

status_code 200

}

connect_port 80

connect_timeout 1

nb_get_retry 3

delay_before_retry 2

}

}

}

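Note: any change to keepalived.conf must be synchronized to all keepalived hosts; you would not want one host to have a feature that the other lacks. Do pay attention, though, to which settings differ between master and backup and which are the same.

Create the script /usr/local/keepalived/etc/keepalived/lvs_check.sh. A simple version looks like this: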

#!/bin/bash

function mailSend() {

echo "ipvsadm service is down" | mail -s "ipvsadm service is down" root@localhost

}

num=`ipvsadm --list -n | grep 10.0.10.200| wc -l`

[ $num -eq 0 ] && mailSend && exit 1 || exit 0

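Note: this script is mainly meant to help you test how an external script can check a service and thereby trigger a keepalived failover. In this script, when no LVS rules are left at all, ipvsadm is considered dead and a failover is needed. Someone will ask: what if there are still LVS rules but a few have been dropped? I can think of two situations. Either your server is so fragile that others can get in and play with it at will (or you removed the rules yourself for fun), or some back-end web servers have failed and been removed from the pool, in which case failing over gives you exactly the same result anyway.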
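Two details of the simple script are worth keeping in mind: the bare `grep 10.0.10.200` matches that string anywhere in the output, and with `interval 1` a mail goes out on every failing run, that is, about once a second while the rules are missing. A slightly stricter check could look like the sketch below. The function name `lvs_has_service` is my own, and the port 80 is taken from the virtual_server definition above.

```shell
#!/bin/bash
# Hypothetical helper: succeed if `ipvsadm --list -n` style output on stdin
# contains a virtual service line for exactly VIP:PORT (TCP or UDP).
lvs_has_service() {
    local vip="$1" port="$2"
    awk -v svc="$vip:$port" '
        ($1 == "TCP" || $1 == "UDP") && $2 == svc { found = 1 }
        END { exit (found ? 0 : 1) }'
}

# Possible use inside lvs_check.sh (keepalived only looks at the exit status):
#   ipvsadm --list -n | lvs_has_service 10.0.10.200 80 || exit 1
#   exit 0
```

Mail is deliberately left out of this sketch; state-change alerting is better handled by keepalived's own notification hooks than by a check that runs every second.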

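With the configuration file modified and the script created and made executable, restart the service and test again:

Note: the master here is still test2.lxm.com. (Please don't ask how to keep it that way: if you followed the steps above, the master is test2.lxm.com at this point; otherwise adapt the experiment to your own situation.)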

[root@test2 keepalived]# service ipvsadm stop

ipvsadm: Clearing the current IPVS table: [ OK ]

ipvsadm: Unloading modules: [ OK ]

[root@test2 keepalived]#

Check the log on test2.lxm.com:

Sep 5 13:52:21 test2 kernel: IPVS: __ip_vs_del_service: enter

Sep 5 13:52:21 test2 kernel: IPVS: [rr] scheduler unregistered.

Sep 5 13:52:21 test2 kernel: IPVS: ipvs unloaded.

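The log contains only these same three lines and nothing else. What is going on? If a failover had happened, the log would certainly report that the virtual IP address was removed, but it does not, so presumably the VIP is still there.

(A note for the sharp-eyed who noticed that the log timestamps differ from the earlier ones: the experiments were not all done on the same day, since I have a day job.)

Check the VIP address: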

[root@test2 keepalived]# ifconfig

eth0 Link encap:Ethernet HWaddr 08:00:27:0D:26:B8

inet addr:10.0.10.12 Bcast:10.0.10.255 Mask:255.255.255.0

inet6 addr: fe80::a00:27ff:fe0d:26b8/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:13438 errors:0 dropped:0 overruns:0 frame:0

TX packets:15234 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:1632335 (1.5 MiB) TX bytes:1576327 (1.5 MiB)

eth0:0 Link encap:Ethernet HWaddr 08:00:27:0D:26:B8

inet addr:10.0.10.200 Bcast:0.0.0.0 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

eth1 Link encap:Ethernet HWaddr 08:00:27:35:E1:F4

inet addr:10.0.0.18 Bcast:10.0.0.255 Mask:255.255.255.0

inet6 addr: fe80::a00:27ff:fe35:e1f4/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:917 errors:0 dropped:0 overruns:0 frame:0

TX packets:2562 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:55020 (53.7 KiB) TX bytes:138084 (134.8 KiB)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:341 errors:0 dropped:0 overruns:0 frame:0

TX packets:341 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:24801 (24.2 KiB) TX bytes:24801 (24.2 KiB)

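The output shows the VIP is still there, so the failover did not happen, and the log reports nothing related to vrrp_script. Why? I am showing this error on purpose so that it sticks in your memory. Without it being pointed out, you might think "this used to work in my experiments, what changed?", and most people could spend hours or longer without figuring it out. To be honest, I struggled with it for a long time as well, and in the end had to go through the log line by line, where I found this message: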

Keepalived_vrrp[14961]: VRRP_Instance(VI_1) : ignoring tracked script with weights due to SYNC group

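It means that, because of the sync group, tracked scripts with weights are ignored; in other words, the track_script setting had no effect at all. Looking back at the configuration file, it does indeed contain this block: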

vrrp_sync_group VG_1 {

group {

VI_1

}

}
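Since this warning is easy to miss among the other log lines, it can help to search for it directly. A minimal sketch, with the hypothetical function name `sync_group_ignores_script`; the log file path is passed in by the caller (this article reads /var/log/messages):

```shell
#!/bin/bash
# Hypothetical helper: succeed if the given log file contains keepalived's
# warning that a weighted tracked script is ignored because of a sync group.
sync_group_ignores_script() {
    grep -qF 'ignoring tracked script with weights due to SYNC group' "$1"
}
```

For example: `sync_group_ignores_script /var/log/messages && echo "track_script is being ignored"`.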

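Having found the likely cause, the next step is to confirm it: comment out this block and start keepalived again.

Now the log no longer contains that message, and the following line appears instead: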

Sep 5 15:00:54 test2 Keepalived_vrrp[2074]: VRRP_Script(check_lvs) succeeded

This shows that the script is now being invoked successfully.

Test:

[root@test2 keepalived]# service ipvsadm stop

ipvsadm: Clearing the current IPVS table: [ OK ]

ipvsadm: Unloading modules: [ OK ]

[root@test2 keepalived]#

Check the log:

Sep 5 15:02:37 test2 kernel: IPVS: __ip_vs_del_service: enter

Sep 5 15:02:37 test2 kernel: IPVS: [rr] scheduler unregistered.

Sep 5 15:02:37 test2 kernel: IPVS: ipvs unloaded.

Sep 5 15:02:37 test2 kernel: IPVS: Registered protocols (TCP, UDP, SCTP, AH, ESP)

Sep 5 15:02:37 test2 kernel: IPVS: Connection hash table configured (size=4096, memory=64Kbytes)

Sep 5 15:02:37 test2 kernel: IPVS: ipvs loaded.

Sep 5 15:02:37 test2 Keepalived_vrrp[2074]: VRRP_Script(check_lvs) failed

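The log shows that the script detected that the LVS rules had been flushed and reported failure, which means the script correctly returned 1. But there is still no message about the virtual IP being removed. Why?

Analysis:

The current host, test2.lxm.com, was originally the backup with the lower priority. It became master when the original master failed. When the former master, test1.lxm.com, recovered, it did not take the role back because nopreempt is set. If ipvsadm now dies on test2 and the rules disappear, the keepalived service itself is still running and still exchanging heartbeats with test1. test1 is configured not to preempt, and test2's priority was already lower than test1's, so lowering it further on a failed check changes nothing: no master/backup switch takes place. To force a failover on error in this situation, the script on test2.lxm.com has to be changed.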
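The reasoning above can be written down as a tiny decision function. This is only a simplified model of the behaviour observed in these tests, not keepalived's actual election code. The function name and arguments are hypothetical, and the priorities 100 (test1, nopreempt) and 99 (test2, preempting) are my reading of the configuration fragments in this article.

```shell
#!/bin/bash
# Simplified model: does the backup take over the VIP?
#   $1 backup priority        $2 backup has nopreempt (yes/no)
#   $3 priority the master currently advertises
#   $4 master still sending adverts (yes/no)
backup_takes_over() {
    local backup_prio="$1" nopreempt="$2" master_prio="$3" master_alive="$4"
    # No adverts at all (keepalived stopped or heartbeat lost): take over.
    [ "$master_alive" = "yes" ] || return 0
    # A nopreempt backup never pushes out a live master.
    [ "$nopreempt" = "no" ] || return 1
    # Otherwise take over only with a strictly higher priority.
    [ "$backup_prio" -gt "$master_prio" ]
}

# test2 is master, its check fails: 99 - 10 = 89; test1 (100, nopreempt) stays backup.
backup_takes_over 100 yes 89 yes || echo "test1 does not take over"
# test2's script stops keepalived instead: no more adverts, test1 takes over.
backup_takes_over 100 yes 89 no && echo "test1 takes over"
```

The first call mirrors the failed experiment above; the second mirrors the fix of stopping keepalived from the check script.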

The modified script:

#!/bin/bash

function mailSend() {

echo "ipvsadm service is down" | mail -s "ipvsadm service is down" root@localhost

}

num=`ipvsadm --list -n | grep 10.0.10.200| wc -l`

[ $num -eq 0 ] && mailSend && service keepalived stop || exit 0 //on failure, stop the keepalived service outright

Test:

[root@test2 keepalived]# service ipvsadm stop

ipvsadm: Clearing the current IPVS table: [ OK ]

ipvsadm: Unloading modules: [ OK ]

Check the log:

Sep 5 15:14:58 test2 kernel: IPVS: __ip_vs_del_service: enter

Sep 5 15:14:58 test2 kernel: IPVS: [rr] scheduler unregistered.

Sep 5 15:14:58 test2 kernel: IPVS: ipvs unloaded.

Sep 5 15:14:58 test2 kernel: IPVS: Registered protocols (TCP, UDP, SCTP, AH, ESP)

Sep 5 15:14:58 test2 kernel: IPVS: Connection hash table configured (size=4096, memory=64Kbytes)

Sep 5 15:14:58 test2 kernel: IPVS: ipvs loaded.

Sep 5 15:14:58 test2 Keepalived[7365]: Stopping Keepalived v1.2.7 (09/05,2014)

Sep 5 15:14:58 test2 Keepalived_vrrp[7368]: VRRP_Instance(VI_1) sending 0 priority

Sep 5 15:14:58 test2 Keepalived_vrrp[7368]: VRRP_Instance(VI_1) removing protocol VIPs.

Sep 5 15:14:58 test2 Keepalived_healthcheckers[7367]: Netlink reflector reports IP 10.0.10.200 removed

Sep 5 15:14:58 test2 Keepalived_healthcheckers[7367]: IPVS: No such destination

Sep 5 15:14:58 test2 Keepalived_healthcheckers[7367]: IPVS: Service not defined

Sep 5 15:14:58 test2 Keepalived_healthcheckers[7367]: IPVS: No such service

The log shows that keepalived stopped and the VIP was removed.

[root@test2 keepalived]# ifconfig

eth0 Link encap:Ethernet HWaddr 08:00:27:0D:26:B8

inet addr:10.0.10.12 Bcast:10.0.10.255 Mask:255.255.255.0

inet6 addr: fe80::a00:27ff:fe0d:26b8/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:25996 errors:0 dropped:0 overruns:0 frame:0

TX packets:29273 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:3154978 (3.0 MiB) TX bytes:3116748 (2.9 MiB)

eth1 Link encap:Ethernet HWaddr 08:00:27:35:E1:F4

inet addr:10.0.0.18 Bcast:10.0.0.255 Mask:255.255.255.0

inet6 addr: fe80::a00:27ff:fe35:e1f4/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:2282 errors:0 dropped:0 overruns:0 frame:0

TX packets:5657 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:136920 (133.7 KiB) TX bytes:305214 (298.0 KiB)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:3681 errors:0 dropped:0 overruns:0 frame:0

TX packets:3681 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:204929 (200.1 KiB) TX bytes:204929 (200.1 KiB)

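The VIP is now gone from test2. On test1, the log shows that it switched to master and that the VIP was added.

Some will now wonder: the script had to be modified on test2, so does test1 need the same change? No, because test2 does preempt: as soon as test1's priority drops, test2 takes over immediately:

Test:

The script on test1: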

#!/bin/bash

function mailSend() {

echo "ipvsadm service is down" | mail -s "ipvsadm service is down" root@localhost

}

num=`ipvsadm --list -n | grep 10.0.10.200| wc -l`

[ $num -eq 0 ] && mailSend && exit 1 || exit 0

[root@test1 keepalived]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 0

-> 10.0.10.14:80 Route 1 0 0

You have new mail in /var/spool/mail/root

[root@test1 keepalived]# service ipvsadm stop

ipvsadm: Clearing the current IPVS table: [ OK ]

ipvsadm: Unloading modules: [ OK ]

Check the log:

Sep 5 15:15:34 test1 kernel: IPVS: __ip_vs_del_service: enter

Sep 5 15:15:34 test1 kernel: IPVS: [rr] scheduler unregistered.

Sep 5 15:15:34 test1 kernel: IPVS: ipvs unloaded.

Sep 5 15:15:34 test1 kernel: IPVS: Registered protocols (TCP, UDP, SCTP, AH, ESP)

Sep 5 15:15:34 test1 kernel: IPVS: Connection hash table configured (size=4096, memory=64Kbytes)

Sep 5 15:15:34 test1 kernel: IPVS: ipvs loaded.

Sep 5 15:15:34 test1 Keepalived_vrrp[5788]: VRRP_Script(check_lvs) failed

Sep 5 15:15:36 test1 Keepalived_vrrp[5788]: VRRP_Instance(VI_1) Received higher prio advert

Sep 5 15:15:36 test1 Keepalived_vrrp[5788]: VRRP_Instance(VI_1) Entering BACKUP STATE

Sep 5 15:15:36 test1 Keepalived_vrrp[5788]: VRRP_Instance(VI_1) removing protocol VIPs.

Sep 5 15:15:36 test1 Keepalived_healthcheckers[5787]: Netlink reflector reports IP 10.0.10.200 removed

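Notice that this log differs from the one on test2 just now: when the script detected the failure, the host immediately lowered its own priority, then reported receiving a higher-priority advertisement, switched to BACKUP state, and removed the VIP.

That completes VIP failover driven by a script that checks a third-party service.

One issue remains, as you may have guessed: using vrrp_sync_group and vrrp_script together. From the discussion above, the two appear to conflict. But what if production requires both at the same time?

In my tests, to use vrrp_script without commenting out vrrp_sync_group, track_script has to be changed as follows: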

track_script {

check_lvs weight 0

}

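That is, add an explicit weight 0 after the script name. With weight 0, a failed check no longer adjusts the priority; it puts the instance into the FAULT state instead. Test it yourself; it passed in my tests.

Case 3: network interface failure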

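keepalived can also detect network interface failures: once the interface used for outside traffic is found to have failed, the VIP can be moved. There are two ways to monitor an interface in keepalived: an external script as above, or keepalived's own track_interface.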
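For the external-script route, the check itself can be very small. The sketch below is an assumption on my part rather than the script used in these tests: it reads the interface's operstate from sysfs (available on Linux), and the function name `iface_is_up` and the optional second argument are hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: succeed if the interface reports operstate "up".
#   $1 interface name, $2 optional sysfs root (defaults to /sys/class/net)
# Note: some virtual drivers report "unknown" instead of "up", so verify
# what your interface reports before relying on this check.
iface_is_up() {
    local iface="$1" root="${2:-/sys/class/net}"
    [ "$(cat "$root/$iface/operstate" 2>/dev/null)" = "up" ]
}

# Possible use in a vrrp_script check (the exit status is what matters):
#   iface_is_up eth0 || exit 1
```

Compared with track_interface, a script like this costs an extra process per interval, but it can be extended, for example to ping the gateway as well.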

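Test and verify:

The master in this test is still test2.lxm.com (I keep testing on it because its priority is lower; if the lower-priority host fails over correctly, the higher-priority one will too).

[root@test2 keepalived]# ifdown eth0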

Log:

Sep 5 15:38:36 test2 Keepalived_vrrp[14989]: VRRP_Instance(VI_1) Received higher prio advert

Sep 5 15:38:36 test2 Keepalived_vrrp[14989]: VRRP_Instance(VI_1) Entering BACKUP STATE

Sep 5 15:38:36 test2 Keepalived_vrrp[14989]: VRRP_Instance(VI_1) removing protocol VIPs.

Sep 5 15:38:36 test2 Keepalived_healthcheckers[14988]: Netlink reflector reports IP 10.0.10.200 removed

On test1:

Sep 5 15:35:55 test1 Keepalived_vrrp[19581]: VRRP_Instance(VI_1) Transition to MASTER STATE

Sep 5 15:35:56 test1 Keepalived_vrrp[19581]: VRRP_Instance(VI_1) Entering MASTER STATE

Sep 5 15:35:56 test1 Keepalived_vrrp[19581]: VRRP_Instance(VI_1) setting protocol VIPs.

Sep 5 15:35:56 test1 Keepalived_vrrp[19581]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 10.0.10.200

Sep 5 15:35:56 test1 Keepalived_healthcheckers[19580]: Netlink reflector reports IP 10.0.10.200 added

test1 has become master. Now bring test2's interface back up and look at the log on test1:

Sep 5 15:38:34 test1 Keepalived_vrrp[19581]: VRRP_Instance(VI_1) Received lower prio advert, forcing new election

Sep 5 15:38:34 test1 Keepalived_vrrp[19581]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 10.0.10.200

Sep 5 15:38:35 test1 Keepalived_vrrp[19581]: VRRP_Instance(VI_1) Received lower prio advert, forcing new election

Sep 5 15:38:35 test1 Keepalived_vrrp[19581]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 10.0.10.200

This shows that heartbeat communication is working.

Test again: take down the interface on test1 and look at the log on test2:

Sep 5 15:42:45 test2 Keepalived_vrrp[14989]: VRRP_Instance(VI_1) Transition to MASTER STATE

Sep 5 15:42:46 test2 Keepalived_vrrp[14989]: VRRP_Instance(VI_1) Entering MASTER STATE

Sep 5 15:42:46 test2 Keepalived_vrrp[14989]: VRRP_Instance(VI_1) setting protocol VIPs.

Sep 5 15:42:46 test2 Keepalived_healthcheckers[14988]: Netlink reflector reports IP 10.0.10.200 added

Sep 5 15:42:46 test2 Keepalived_vrrp[14989]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 10.0.10.200

Sep 5 15:42:51 test2 Keepalived_vrrp[14989]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 10.0.10.200

test2 immediately became master. Check the VIP:

[root@test2 ~]# ifconfig

eth0 Link encap:Ethernet HWaddr 08:00:27:0D:26:B8

inet addr:10.0.10.12 Bcast:10.0.10.255 Mask:255.255.255.0

inet6 addr: fe80::a00:27ff:fe0d:26b8/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:29585 errors:0 dropped:0 overruns:0 frame:0

TX packets:33483 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:3618179 (3.4 MiB) TX bytes:3520824 (3.3 MiB)

eth0:0 Link encap:Ethernet HWaddr 08:00:27:0D:26:B8

inet addr:10.0.10.200 Bcast:0.0.0.0 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

eth1 Link encap:Ethernet HWaddr 08:00:27:35:E1:F4

inet addr:10.0.0.18 Bcast:10.0.0.255 Mask:255.255.255.0

inet6 addr: fe80::a00:27ff:fe35:e1f4/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:2722 errors:0 dropped:0 overruns:0 frame:0

TX packets:7036 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:163320 (159.4 KiB) TX bytes:379776 (370.8 KiB)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:3691 errors:0 dropped:0 overruns:0 frame:0

TX packets:3691 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:205595 (200.7 KiB) TX bytes:205595 (200.7 KiB)

[root@test2 ~]#

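The VIP moved over successfully.

Note: the logs are not reproduced in full here; test it yourself.

Remark: the tests for cases 2 and 3 may look like a long detour, but without the detour you might not learn much. Most write-ups online only test on the higher-priority host, run one simple example, and call it done. I did it this way so that you know this pitfall exists; how you use it is up to you.

Case 4: when all back-end web servers fail, use sorry_server to redirect requests to a standby server

This feature is optional. In production, every service on every server is monitored and errors are handled as soon as they are found, so it is very rare for all back-end web services to fail at once. Nothing is absolute, though, so it is worth covering.

Before testing it, first check whether LVS drops the rule for a back-end web server that has failed:

Test:

Check the LVS rules on the master: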

[root@test2 ~]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 0

-> 10.0.10.14:80 Route 1 0 0

[root@test2 ~]#

Stop the httpd service on one back-end web server:

[root@test4 ~]# service httpd stop

Stopping httpd: [ OK ]

[root@test4 ~]#

Check the LVS rules again:

[root@test2 ~]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 0

You have new mail in /var/spool/mail/root

[root@test2 ~]#

Check the log:

Sep 5 15:55:44 test2 Keepalived_healthcheckers[19580]: Error connecting server [10.0.10.14]:80.

Sep 5 15:55:44 test2 Keepalived_healthcheckers[19580]: Removing service [10.0.10.14]:80 from VS [10.0.10.200]:80

Sep 5 15:55:44 test2 Keepalived_healthcheckers[19580]: Remote SMTP server [127.0.0.1]:25 connected.

Sep 5 15:55:44 test2 Keepalived_healthcheckers[19580]: SMTP alert successfully sent.

As shown, when a back-end web service fails, its rule is removed.

Start the httpd service again:

[root@test4 ~]# service httpd start

Starting httpd: [ OK ]

[root@test4 ~]#

Check the log:

Sep 5 15:57:22 test2 Keepalived_healthcheckers[19580]: HTTP status code success to [10.0.10.14]:80 url(1).

Sep 5 15:57:25 test2 Keepalived_healthcheckers[19580]: Remote Web server [10.0.10.14]:80 succeed on service.

Sep 5 15:57:25 test2 Keepalived_healthcheckers[19580]: Adding service [10.0.10.14]:80 to VS [10.0.10.200]:80

Sep 5 15:57:25 test2 Keepalived_healthcheckers[19580]: Remote SMTP server [127.0.0.1]:25 connected.

Sep 5 15:57:25 test2 Keepalived_healthcheckers[19580]: SMTP alert successfully sent.

Check the rules:

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 0

-> 10.0.10.14:80 Route 1 0 0

[root@test2 ~]#

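The rule has been added back. This shows that keepalived monitors the back-end services continuously and updates the rules accordingly.

Testing the sorry_server feature:

Add the following line to the configuration file: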

virtual_server 10.0.10.200 80 {

delay_loop 3

lb_algo rr

lb_kind DR

nat_mask 255.255.255.0

# persistence_timeout 50

protocol TCP

sorry_server 10.0.10.15 80 //this is only part of the configuration file; note exactly where the line is added

real_server 10.0.10.13 80 {

weight 1

Restart the keepalived service:

[root@test2 keepalived]# service keepalived restart

Stopping keepalived: [ OK ]

Starting keepalived: [ OK ]

[root@test2 keepalived]#

Check the rules:

[root@test2 keepalived]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.13:80 Route 1 0 0

-> 10.0.10.14:80 Route 1 0 0

[root@test2 keepalived]#

Stop all back-end web services:

[root@test4 ~]# service httpd stop

Stopping httpd: [ OK ]

[root@test4 ~]#

[root@test3 logs]# service httpd stop

Stopping httpd: [ OK ]

[root@test3 logs]#

Check the log:

[root@test2 keepalived]# tail -f /var/log/messages

Sep 5 16:05:15 test2 Keepalived_healthcheckers[25096]: Removing service [10.0.10.13]:80 from VS [10.0.10.200]:80

Sep 5 16:05:15 test2 Keepalived_healthcheckers[25096]: Remote SMTP server [127.0.0.1]:25 connected.

Sep 5 16:05:15 test2 Keepalived_healthcheckers[25096]: SMTP alert successfully sent.

Sep 5 16:05:20 test2 Keepalived_healthcheckers[25096]: Error connecting server [10.0.10.14]:80.

Sep 5 16:05:20 test2 Keepalived_healthcheckers[25096]: Removing service [10.0.10.14]:80 from VS [10.0.10.200]:80

Sep 5 16:05:20 test2 Keepalived_healthcheckers[25096]: Lost quorum 1-0=1 > 0 for VS [10.0.10.200]:80

Sep 5 16:05:20 test2 Keepalived_healthcheckers[25096]: Adding sorry server [10.0.10.15]:80 to VS [10.0.10.200]:80

Sep 5 16:05:20 test2 Keepalived_healthcheckers[25096]: Removing alive servers from the pool for VS [10.0.10.200]:80

Sep 5 16:05:20 test2 Keepalived_healthcheckers[25096]: Remote SMTP server [127.0.0.1]:25 connected.

Sep 5 16:05:20 test2 Keepalived_healthcheckers[25096]: SMTP alert successfully sent.

Check the rules:

[root@test2 keepalived]# ipvsadm --list -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.0.10.200:80 rr

-> 10.0.10.15:80 Route 1 0 0

You have new mail in /var/spool/mail/root

[root@test2 keepalived]#

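Pay attention here and compare: after sorry_server was added and keepalived restarted, the first rule listing showed no change, with no rule for the standby web server. Only once all back-end services were stopped did the rule for the standby web server appear automatically.

Accessing the VIP from the client now returns the content of the standby web server.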
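Whether the director is currently serving from the sorry server can also be checked from the rule listing itself. The following is a sketch with hypothetical function names that parses `ipvsadm --list -n` style output from stdin:

```shell
#!/bin/bash
# Hypothetical helper: print the "ip:port" of every real server line
# ("-> a.b.c.d:port ..."); the column header line is skipped because
# "RemoteAddress:Port" does not look like an address.
list_real_servers() {
    awk '$1 == "->" && $2 ~ /^[0-9.]+:[0-9]+$/ { print $2 }'
}

# Hypothetical helper: succeed if the pool holds exactly the sorry server.
#   $1 sorry server as ip:port
only_sorry_server() {
    local sorry="$1" servers
    servers="$(list_real_servers)"
    [ "$servers" = "$sorry" ]
}
```

For example: `ipvsadm --list -n | only_sorry_server 10.0.10.15:80 && echo "all real servers are down"`. This assumes a single virtual service, as in this setup; with several virtual services the listing would need to be split per service first.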

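Case 5: alerting on master/backup state changes

With keepalived it is especially important to know the current master/backup state, so that we can adjust things as needed.

The configuration: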

vrrp_instance VI_1 {

state BACKUP

interface eth1

virtual_router_id 51

priority 99

advert_int 1

authentication {

auth_type PASS

auth_pass keepalivepass

}

virtual_ipaddress {

10.0.10.200/24 dev eth0 label eth0:0

}

track_script {

check_lvs

}

track_interface {

eth0

}

notify_master "/usr/local/keepalived/etc/keepalived/notify.sh master 10.0.10.200"

notify_backup "/usr/local/keepalived/etc/keepalived/notify.sh backup 10.0.10.200"

notify_fault "/usr/local/keepalived/etc/keepalived/notify.sh fault 10.0.10.200"

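smtp_alert //turns on keepalived's built-in SMTP alerts, which use the global notification_email settings; the notify_* scripts above run regardless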

}

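The above is only a fragment; the alerting settings are the lines starting with notify. master and backup are self-explanatory; fault is usually heartbeat-related, for example when the heartbeat interface goes down and no heartbeat is received.

A simple notify.sh: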

#!/bin/bash

#

contact='root@localhost'

Usage() {

echo "Usage:`basename $0` {master|backup|fault} VIP"

}

Notify() {

subject="$(hostname)'s keepalived state changed to $1"

mailBody="$(date '+%F %T'): $(hostname)'s keepalived state changed to $1, $VIP floating."

echo "$mailBody" | mail -s "$subject" $contact

}

[ $# -lt 2 ] && Usage && exit 1

VIP=$2

case $1 in

master)

Notify master

;;

backup)

Notify backup

;;

fault)

Notify fault

;;

*)

Usage

exit 1

;;

esac

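After configuring this, restart keepalived. Whenever a master/backup switch occurs, an alert e-mail is sent; in production, set the address to your own mailbox.

Once these notify scripts are in place, you can drop the e-mail alerting in the global section (the notification_email settings) if you like, since the script lets you define the alert content however you want.

That is all for the keepalived+lvs deployment. It cannot cover everything, but it covers most of it. Other keepalived settings will be discussed in the analysis of the keepalived.conf configuration file.

One more thing: this document is rather long and I did not proofread it from the top again, so if you spot a mistake, please work out what was meant. I hope there are not too many slips.

The end!

笨蛋的技术: not knowing how is nothing to fear!

This article comes from the "笨蛋的技术" blog; please keep this attribution: http://mingyang.blog.51cto.com/2807508/1549408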
