LVS(DR)+keepalived+nfs+raid+LVM

LVS theory

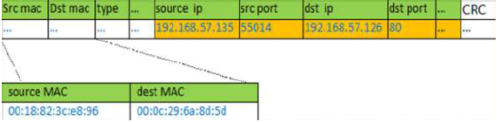

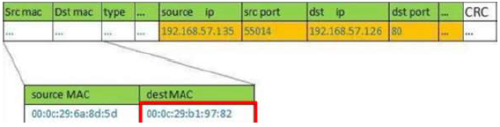

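1. The client sends a request to the VIP and the Director (the load balancer) receives it. At this point the destination IP of the packet is the VIP and the destination MAC of the frame is the Director's MAC address.

2. The Director picks RealServer_1 according to its scheduling algorithm. It neither modifies nor encapsulates the IP packet; it only rewrites the destination MAC address of the frame to RealServer_1's MAC address and sends the frame out on the LAN. The IP header is unchanged; only the frame header differs.

3. RealServer_1 receives the frame, strips the frame header and finds that the destination IP matches a local address (every RealServer must have the VIP bound in advance). It processes the request, builds the reply with the VIP as source IP and the client as destination IP, and sends it onto the LAN.

4. The client receives the reply. From its point of view the service behaved normally, and it cannot tell which server handled the request. (If the client is on another network segment, the reply travels back to the user through the router and the Internet.)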
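The four steps above can be summarised as a small trace of which header fields change at each hop. This is only an illustrative sketch: the function names `dr_hop` and `dr_trace` and the addresses passed to them are made up for the example, and the last argument stands for whatever next hop the RealServer uses for the reply (the client itself or the gateway).

```shell
#!/bin/bash
# Print one hop: label, source IP, destination IP, destination MAC.
dr_hop() {
    printf '%-22s src_ip=%-15s dst_ip=%-15s dst_mac=%s\n' "$1" "$2" "$3" "$4"
}

# dr_trace CLIENT_IP VIP DIRECTOR_MAC REALSERVER_MAC NEXTHOP_MAC
dr_trace() {
    local cip="$1" vip="$2" dir_mac="$3" rs_mac="$4" gw_mac="$5"
    # Step 1: the request arrives at the Director.
    dr_hop "client->director" "$cip" "$vip" "$dir_mac"
    # Step 2: the Director rewrites only the destination MAC.
    dr_hop "director->realserver" "$cip" "$vip" "$rs_mac"
    # Step 3: the RealServer answers directly, with the VIP as source IP.
    dr_hop "realserver->client" "$vip" "$cip" "$gw_mac"
}
```

Calling `dr_trace 192.168.18.140 192.168.18.41 MAC_D MAC_RS MAC_GW` prints three lines; the first two differ only in `dst_mac`, which is the defining property of DR mode.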

The ARP problem in LVS-DR

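In an LVS-DR cluster the load balancer and the node servers are all configured with the same VIP. Having the same IP address on several hosts in one LAN would normally disrupt ARP. When an ARP broadcast for the VIP reaches the cluster, the load balancer and every node server receive it, because they are all attached to the same network. Only the front-end load balancer should answer; the node servers must not respond to the broadcast.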

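1. Configure the node servers so that they do not answer ARP requests for the VIP.

Bind the VIP to a virtual loopback interface such as lo:0.

Set the kernel parameter arp_ignore=1, so that the system only answers ARP requests whose target IP is configured on the interface that received the request.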

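2. A RealServer's reply (source IP = VIP) is forwarded by the router. To build the frame the RealServer first has to obtain the router's MAC address, so it sends an ARP request. By default Linux uses the source IP of the outgoing packet (the VIP) as the sender IP of that ARP request, rather than the IP address of the sending interface (for example eth0).

3. When the router receives the ARP request it updates its ARP table: the entry that mapped the VIP to the Director's MAC address is overwritten with the RealServer's MAC address. From then on the router forwards new requests straight to the RealServer according to that entry, and the VIP on the Director stops working.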

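Solution:

On the node servers set the kernel parameter arp_announce=2. The system then no longer uses the source address of the IP packet as the sender address of ARP requests and picks the address of the sending interface instead.

Both ARP problems are fixed by adding the following lines to /etc/sysctl.conf: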

net.ipv4.conf.lo.arp_ignore = 1

net.ipv4.conf.lo.arp_announce = 2

net.ipv4.conf.all.arp_ignore = 1

net.ipv4.conf.all.arp_announce = 2
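A quick way to confirm that all four lines made it into the file is a small check like the one below. It is a sketch, not part of the original setup: the function name `check_arp_sysctl` is made up, and it only inspects the file, it does not load the values into the running kernel.

```shell
#!/bin/bash
# Report which of the four ARP settings are missing from a sysctl file.
# $1 = file to inspect (defaults to /etc/sysctl.conf).
check_arp_sysctl() {
    local file="${1:-/etc/sysctl.conf}" missing=0 want
    for want in \
        "net.ipv4.conf.lo.arp_ignore=1" \
        "net.ipv4.conf.lo.arp_announce=2" \
        "net.ipv4.conf.all.arp_ignore=1" \
        "net.ipv4.conf.all.arp_announce=2"
    do
        # Strip blanks so "key = value" and "key=value" compare equal.
        if ! tr -d ' \t' < "$file" | grep -qxF "$want"; then
            echo "missing: $want"
            missing=1
        fi
    done
    return "$missing"
}
```

After the file is complete, `sysctl -p` (run as root) loads it, and `sysctl net.ipv4.conf.all.arp_ignore` shows the value currently in effect.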

LVS-DR in practice:

Lab environment:

VIP: 192.168.18.41

BL: 192.168.18.31

web1: 192.168.18.32

web2: 192.168.18.33

nfs: 192.168.18.34

NFS shared storage:

[root@doo2 ~]# mount /dev/cdrom /media/cdrom/

mount: block device /dev/sr0 is write-protected, mounting read-only

[root@doo2 ~]# yum -y install nfs-utils rpcbind

[root@doo2 ~]# rpm -q nfs-utils rpcbind

[root@doo2 ~]# mkdir /www

[root@doo2 ~]# vi /etc/exports

/www 192.168.18.0/24(ro,sync,no_root_squash)

[root@doo2 ~]# service rpcbind start

Starting rpcbind: [ OK ]

[root@doo2 ~]# service nfs start

Starting NFS services: [ OK ]

Starting NFS mountd: [ OK ]

Starting NFS daemon: [ OK ]

Starting RPC idmapd: [ OK ]

[root@doo2 ~]# showmount -e 192.168.18.34

Export list for 192.168.18.34:

/www 192.168.18.0/24

[root@doo2 ~]# chkconfig rpcbind on

[root@doo2 ~]# chkconfig nfs on

[root@doo2 ~]# echo "<h1>ce shi ye</h1>">/www/index.html

Web server configuration:

web1

[root@doo ~]# rpm -q httpd

httpd-2.2.15-29.el6.centos.x86_64

[root@doo ~]# vi /etc/httpd/conf/httpd.conf

[root@doo ~]# yum -y install nfs-utils

[root@doo ~]# service httpd start

Starting httpd: [ OK ]

[root@doo ~]# mount 192.168.18.34:/www /var/www/html/

[root@doo ~]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/vg_doo-lv_root 18G 3.9G 13G 24% /

tmpfs 383M 0 383M 0% /dev/shm

/dev/sda1 485M 35M 426M 8% /boot

192.168.18.34:/www 18G 1.3G 16G 8% /var/www/html

[root@doo ~]# vi /etc/fstab

192.168.18.34:/www /var/www/html nfs defaults,_netdev 1 2

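(Fifth field 1: include the filesystem in dump backups. Sixth field 2: check it second with fsck. For an NFS mount both are usually set to 0.)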
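To confirm that the share really is mounted (for example after a reboot that relies on the fstab entry), the mount table can be checked for an NFS entry at the expected mount point. A minimal sketch, with a made-up function name `is_nfs_mounted`; the second argument exists only so the function can be pointed at a file other than /proc/mounts.

```shell
#!/bin/bash
# Succeed if MOUNTPOINT is listed with an nfs/nfs4 filesystem type.
# $1 = mount point, $2 = mount table (defaults to /proc/mounts).
is_nfs_mounted() {
    awk -v mp="$1" '$2 == mp && $3 ~ /^nfs/ { ok = 1 } END { exit !ok }' \
        "${2:-/proc/mounts}"
}
```

Typical use on web1 or web2: `is_nfs_mounted /var/www/html && echo "share is mounted"`.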

web2: (same as web1)

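3. LVS-DR deployment

ipvsadm options:

-A add a virtual server

-D delete a virtual server

-C clear the whole table

-E edit a virtual server

-L or -l list the table

-n numeric output, no name resolution

-c show the current IPVS connections (used with -L)

-a add a real server

-d delete a real server

-t VIP address and TCP port of the virtual service

-s scheduling algorithm: rr|wrr|lc|wlc|lblc|lblcr|dh|sh|sed|nq, default wlc

-m NAT mode (masquerading)

-g DR mode (direct routing)

-i TUN mode (IP tunneling)

-w weight (a weight of 0 quiesces the node)

--help show help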
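To see how these options combine for a DR service, the sketch below prints the ipvsadm commands for one virtual service and any number of real servers without executing them. The function name `build_dr_rules` and its argument order are assumptions made for this example; the explicit `-w 1` simply restates the default weight.

```shell
#!/bin/bash
# Print (do not run) the ipvsadm commands for one DR virtual service.
# Usage: build_dr_rules VIP:PORT SCHEDULER RS1:PORT [RS2:PORT ...]
build_dr_rules() {
    local vs="$1" sched="$2" rs
    shift 2
    # -A creates the virtual service, -s selects the scheduler.
    echo "ipvsadm -A -t $vs -s $sched"
    for rs in "$@"; do
        # -a adds a real server, -g selects direct routing, -w sets the weight.
        echo "ipvsadm -a -t $vs -r $rs -g -w 1"
    done
}
```

For the addresses used in this article that would be `build_dr_rules 192.168.18.41:80 rr 192.168.18.32:80 192.168.18.33:80`; piping the output to `sh` as root would apply the rules.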

BL:

[root@doo2 ~]# modprobe ip_vs  # load the ip_vs kernel module

[root@doo2 ~]# yum -y install ipvsadm

[root@doo2 ~]# service ipvsadm stop

ipvsadm: Clearing the current IPVS table: [ OK ]

ipvsadm: Unloading modules: [ OK ]

[root@doo2 ~]# ipvsadm -C

[root@doo2 ~]# vi /opt/vip.sh

#!/bin/bash

# VIP

VIP="192.168.18.41"

/sbin/ifconfig eth1:vip $VIP broadcast $VIP netmask 255.255.255.255

/sbin/route add -host $VIP dev eth1:vip

[root@doo2 ~]# chmod +x /opt/vip.sh

[root@doo2 ~]# /opt/vip.sh

[root@doo2 ~]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

192.168.18.41 0.0.0.0 255.255.255.255 UH 0 0 0 eth1

192.168.18.0 0.0.0.0 255.255.255.0 U 0 0 0 eth1

169.254.0.0 0.0.0.0 255.255.0.0 U 1002 0 0 eth1

0.0.0.0 192.168.18.2 0.0.0.0 UG 0 0 0 eth1

[root@doo2 ~]# ipvsadm -A -t 192.168.18.41:80 -s rr

[root@doo2 ~]# ipvsadm -a -t 192.168.18.41:80 -r 192.168.18.32:80 -g

[root@doo2 ~]# ipvsadm -a -t 192.168.18.41:80 -r 192.168.18.33:80 -g

[root@doo2 ~]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.18.41:80 rr

-> 192.168.18.32:80 Route 1 0 0

-> 192.168.18.33:80 Route 1 0 0

[root@doo2 ~]# ipvsadm-save >/etc/sysconfig/ipvsadm

On web1:

[root@doo ~]# vi /opt/lvs-dr

#!/bin/bash

# lvs-dr

VIP="192.168.18.41"

/sbin/ifconfig lo:vip $VIP broadcast $VIP netmask 255.255.255.255

/sbin/route add -host $VIP dev lo:vip

echo 1 > /proc/sys/net/ipv4/conf/lo/arp_ignore

echo 2 > /proc/sys/net/ipv4/conf/lo/arp_announce

echo 1 > /proc/sys/net/ipv4/conf/all/arp_ignore

echo 2 > /proc/sys/net/ipv4/conf/all/arp_announce

[root@doo ~]# chmod +x /opt/lvs-dr

[root@doo ~]# /opt/lvs-dr

[root@doo ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

inet 192.168.18.41/32 brd 192.168.18.41 scope global lo:vip

[root@web1 ~]# scp /opt/lvs-dr 192.168.18.33:/opt

Do the same on web2.

On BL you can see how the incoming connections are distributed:
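The script above only sets the VIP up. A start/stop variant is sketched below, under a few assumptions that are not in the original: it uses `ip` and `sysctl -w` instead of `ifconfig` and `echo`, it applies the ARP parameters before adding the VIP so the node never answers ARP for it, and the names `run`, `rs_vip` and `DRY_RUN` are made up. With `DRY_RUN=1` the commands are printed instead of executed.

```shell
#!/bin/bash
# Start/stop wrapper for the real-server side of LVS-DR (sketch).
VIP="${VIP:-192.168.18.41}"

# Execute a command, or just print it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then echo "$*"; else "$@"; fi
}

rs_vip() {
    case "$1" in
        start)
            # Silence ARP for the VIP first, then bind it to loopback.
            run sysctl -w net.ipv4.conf.lo.arp_ignore=1
            run sysctl -w net.ipv4.conf.lo.arp_announce=2
            run sysctl -w net.ipv4.conf.all.arp_ignore=1
            run sysctl -w net.ipv4.conf.all.arp_announce=2
            run ip addr add "$VIP/32" dev lo label lo:vip
            ;;
        stop)
            # Remove the VIP, then restore the kernel defaults (0).
            run ip addr del "$VIP/32" dev lo
            run sysctl -w net.ipv4.conf.lo.arp_ignore=0
            run sysctl -w net.ipv4.conf.lo.arp_announce=0
            run sysctl -w net.ipv4.conf.all.arp_ignore=0
            run sysctl -w net.ipv4.conf.all.arp_announce=0
            ;;
        *)
            echo "usage: rs_vip start|stop" >&2
            return 2
            ;;
    esac
}
```

`DRY_RUN=1 rs_vip start` shows what would be done; as root, `rs_vip start` and `rs_vip stop` apply and revert the settings.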

[root@doo2 ~]# ipvsadm -lnc

IPVS connection entries

pro expire state source virtual destination

TCP 14:59 ESTABLISHED 192.168.18.140:53385 192.168.18.41:80 192.168.18.33:80

TCP 01:24 FIN_WAIT 192.168.18.140:53380 192.168.18.41:80 192.168.18.32:80

TCP 01:58 FIN_WAIT 192.168.18.140:53387 192.168.18.41:80 192.168.18.33:80

TCP 01:24 FIN_WAIT 192.168.18.140:53379 192.168.18.41:80 192.168.18.33:80

TCP 01:25 FIN_WAIT 192.168.18.140:53382 192.168.18.41:80 192.168.18.32:80
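With the rr scheduler the connections should be spread almost evenly over the real servers. A small filter, sketched here with a made-up name `count_per_rs`, tallies the last column of the connection listing so this can be checked at a glance.

```shell
#!/bin/bash
# Count IPVS connection entries per real server.
# Reads `ipvsadm -lnc` output on stdin; header lines are skipped
# because they do not start with a protocol name.
count_per_rs() {
    awk '$1 == "TCP" || $1 == "UDP" { n[$NF]++ }
         END { for (rs in n) print rs, n[rs] }' | sort
}
```

Usage on the Director: `ipvsadm -lnc | count_per_rs`.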

Adding keepalived:

On BL:

Lab environment:

VIP: 192.168.18.41

BL1: 192.168.18.30 (backup)

BL2: 192.168.18.31 (master)

web1: 192.168.18.32

web2: 192.168.18.33

nfs: 192.168.18.34 (raid+LVM)

[root@doo2 ~]# yum -y install keepalived

[root@doo2 ~]# cd /etc/keepalived/

[root@doo2 keepalived]# cp keepalived.conf keepalived.conf.bak

[root@doo2 keepalived]# vi keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

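doo@163.com  # address that receives the alert mail

}

notification_email_from ya@163.com  # sender address

smtp_server ping.com.cn  # SMTP server used to send the mail

smtp_connect_timeout 30  # SMTP connection timeout in seconds

router_id LVS_DEVEL_BLM  # identifier for this node, shown in the alert mail; any string works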

}

vrrp_instance VI_1 {

state MASTER

interface eth1

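virtual_router_id 51  # VRRP router ID; must be identical on the master and the backup

priority 100  # the higher number wins the election; valid range 1-254

advert_int 2  # interval in seconds between VRRP advertisements

authentication {

auth_type PASS  # authentication type, PASS or AH

auth_pass 1111  # must be identical on the master and the backup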

}

virtual_ipaddress {

192.168.18.41  # the VIP; more than one address may be listed

}

}

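virtual_server 192.168.18.41 80 {  # virtual service on the VIP, port 80

delay_loop 2  # interval in seconds between health checks

lb_algo rr  # round-robin scheduling

lb_kind DR  # forwarding method: NAT, DR or TUN

! nat_mask 255.255.255.0  # only used in NAT mode, so commented out

! persistence_timeout 300  # connection persistence timeout in seconds (commented out here)

protocol TCP

real_server 192.168.18.32 80 {

weight 1  # weight

TCP_CHECK {  # health check for this real server

connect_timeout 10  # give up after 10 seconds without a response

nb_get_retry 3  # number of retries

delay_before_retry 3  # wait 3 seconds between retries

connect_port 80  # port to connect to for the check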

}

}

real_server 192.168.18.33 80 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

}

[root@doo2 keepalived]# service keepalived start

Starting keepalived: [ OK ]

[root@doo2 keepalived]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.18.41:80 rr

-> 192.168.18.32:80 Route 1 0 0

-> 192.168.18.33:80 Route 1 0 0

On the backup BL1 (192.168.18.30):

[root@doo2 ~]# modprobe ip_vs

[root@doo2 ~]# yum -y install keepalived ipvsadm

[root@doo2 ~]# cd /etc/keepalived/

[root@doo2 keepalived]# cp keepalived.conf keepalived.conf.bak

[root@doo2 keepalived]# vi keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

doo@163.com

}

notification_email_from ya@163.com

smtp_server ping.com.cn

smtp_connect_timeout 30

router_id LVS_DEVEL_BLM

}

vrrp_instance VI_1 {

state BACKUP

interface eth1

virtual_router_id 51

priority 99

advert_int 2

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.18.41

}

}

virtual_server 192.168.18.41 80 {

delay_loop 2

lb_algo rr

lb_kind DR

! nat_mask 255.255.255.0

! persistence_timeout 300

protocol TCP

real_server 192.168.18.32 80 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

real_server 192.168.18.33 80 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

}
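The two configurations must agree on virtual_router_id, advert_int, auth_type and auth_pass, and the master needs the higher priority. The check below is a rough sketch of that comparison. The names `conf_value` and `check_vrrp_pair` are made up, only the first occurrence of each keyword is read, and it assumes values are separated from any trailing comment by whitespace.

```shell
#!/bin/bash
# Print the first value of KEYWORD found in a keepalived.conf.
# $1 = file, $2 = keyword
conf_value() {
    awk -v k="$2" '$1 == k { print $2; exit }' "$1"
}

# check_vrrp_pair MASTER_CONF BACKUP_CONF
check_vrrp_pair() {
    local master="$1" backup="$2" key rc=0 mp bp
    # These settings must be identical on both nodes.
    for key in virtual_router_id advert_int auth_type auth_pass; do
        if [ "$(conf_value "$master" "$key")" != "$(conf_value "$backup" "$key")" ]; then
            echo "mismatch: $key"
            rc=1
        fi
    done
    # The master must advertise the higher priority.
    mp=$(conf_value "$master" priority)
    bp=$(conf_value "$backup" priority)
    if [ "${mp:-0}" -le "${bp:-0}" ]; then
        echo "master priority must be higher than backup priority"
        rc=1
    fi
    return "$rc"
}
```

It would be run with a copy of each node's file, for example `check_vrrp_pair master.conf backup.conf`, where both file names are placeholders.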

[root@doo2 keepalived]# ip a  # the VIP must not appear here while the master is up

[root@doo2 keepalived]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.18.41:80 rr

-> 192.168.18.32:80 Route 1 0 0

-> 192.168.18.33:80 Route 1 0 0

On web1:

[root@doo ~]# service httpd stop

Stopping httpd: [ OK ]

On BL, the failed node has been removed from the table:

[root@doo2 keepalived]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.18.41:80 rr

-> 192.168.18.33:80 Route 1 0 0

On web1:

[root@doo ~]# service httpd start

Starting httpd: [ OK ]

On BL, the node is back in the table:

[root@doo2 keepalived]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.18.41:80 rr

-> 192.168.18.32:80 Route 1 0 0

-> 192.168.18.33:80 Route 1 0 0
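The removal and re-insertion of web1 seen above is driven by a plain TCP connection test. The same kind of probe can be reproduced by hand as sketched below; this illustrates the idea and is not keepalived's own code. It assumes bash (for the `/dev/tcp` pseudo-device) and the coreutils `timeout` command, and the function name `tcp_check` is made up.

```shell
#!/bin/bash
# Succeed if a TCP connection to HOST:PORT can be opened within SECS seconds.
# Usage: tcp_check HOST PORT [SECS]
tcp_check() {
    local host="$1" port="$2" secs="${3:-10}"
    # In the child bash, $0 is the host and $1 is the port; opening
    # /dev/tcp/HOST/PORT attempts the connection and fails if it is refused.
    timeout "$secs" bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$host" "$port" 2>/dev/null
}
```

For example, `tcp_check 192.168.18.32 80 10 && echo up || echo down` reports whether web1 currently accepts connections on port 80.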

High-availability test:

On the master BL:

[root@doo2 keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:9d:cf:f3 brd ff:ff:ff:ff:ff:ff

inet 192.168.18.31/24 brd 192.168.18.255 scope global eth1

inet 192.168.18.41/32 scope global eth1

inet6 fe80::20c:29ff:fe9d:cff3/64 scope link

valid_lft forever preferred_lft forever

[root@doo2 keepalived]# service keepalived stop

Stopping keepalived: [ OK ]

On the backup BL, the VIP has moved over:

[root@doo2 keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:34:d3:f8 brd ff:ff:ff:ff:ff:ff

inet 192.168.18.30/24 brd 192.168.18.255 scope global eth1

inet 192.168.18.41/32 scope global eth1

inet6 fe80::20c:29ff:fe34:d3f8/64 scope link

valid_lft forever preferred_lft forever

[root@doo2 keepalived]# ipvsadm -l -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.18.41:80 rr

-> 192.168.18.32:80 Route 1 0 0

-> 192.168.18.33:80 Route 1 0 0
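Whether a node currently holds the VIP can also be checked from a script instead of reading the `ip a` output by eye. A minimal sketch, with a made-up function name `has_vip`, that scans `ip addr` style output on stdin for an exact address match:

```shell
#!/bin/bash
# Succeed if the given IPv4 address appears on an "inet" line of the input.
# Usage: ip -4 addr show | has_vip 192.168.18.41
has_vip() {
    awk -v vip="$1" '
        $1 == "inet" { split($2, a, "/"); if (a[1] == vip) found = 1 }
        END { exit !found }'
}
```

During the failover test, `ip -4 addr show dev eth1 | has_vip 192.168.18.41` should succeed on exactly one of the two load balancers at a time.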

NFS + RAID 5 + LVM (192.168.18.34)

On the NFS server:

[root@doo2 ~]# fdisk -l|grep dev

Disk /dev/sda: 21.5 GB, 21474836480 bytes

/dev/sda1 * 1 64 512000 83 Linux

/dev/sda2 64 2611 20458496 8e Linux LVM

Disk /dev/sdb: 2147 MB, 2147483648 bytes

Disk /dev/sdc: 2147 MB, 2147483648 bytes

Disk /dev/sdd: 2147 MB, 2147483648 bytes

Disk /dev/sde: 2147 MB, 2147483648 bytes

Disk /dev/mapper/vg_doo2-lv_root: 18.9 GB, 18865979392 bytes

Disk /dev/mapper/vg_doo2-lv_swap: 2080 MB, 2080374784 bytes

[root@doo2 ~]# yum -y install parted

[root@doo2 ~]# parted /dev/sdb

GNU Parted 2.1

Using /dev/sdb

Welcome to GNU Parted! Type 'help' to view a list of commands.

(parted) mklabel

New disk label type? gpt

(parted) mkpart

Partition name? []? a

File system type? [ext2]? ext3

Start? 1

End? -1

(parted) p

Model: VMware, VMware Virtual S (scsi)

Disk /dev/sdb: 2147MB

Sector size (logical/physical): 512B/512B

Partition Table: gpt

Number Start End Size File system Name Flags

1 1049kB 2146MB 2145MB a

(parted) q

Information: You may need to update /etc/fstab.

Repeat the same steps for sdc, sdd and sde.

[root@doo2 ~]# yum -y install mdadm

[root@doo2 ~]# mdadm -Cv /dev/md5 -a yes -n3 -x1 -l5 /dev/sd[b-e]1

mdadm: layout defaults to left-symmetric

mdadm: layout defaults to left-symmetric

mdadm: super1.x cannot open /dev/sdb1: Device or resource busy

mdadm: Cannot use /dev/sdb1: It is busy

mdadm: cannot open /dev/sdb1: Device or resource busy

[root@doo2 ~]# mkfs.ext3 /dev/md5

[root@nfs ~]# mdadm -D -s >/etc/mdadm.conf

[root@nfs ~]# sed -i '1 s/$/ auto=yes/' /etc/mdadm.conf
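The mdadm command above asks for three active devices (-n3) and one hot spare (-x1). In RAID 5 one member's worth of space goes to parity and the spare adds no capacity, so the usable size is roughly (active devices - 1) x member size, ignoring metadata overhead. The helper below just performs that arithmetic; the function name `raid5_usable_mb` is made up for the example.

```shell
#!/bin/bash
# Approximate usable capacity of a RAID 5 array, in the unit of MEMBER_MB.
# Usage: raid5_usable_mb ACTIVE_DEVICES MEMBER_MB
raid5_usable_mb() {
    local active="$1" member_mb="$2"
    if [ "$active" -lt 3 ]; then
        echo "RAID 5 needs at least 3 active devices" >&2
        return 1
    fi
    # One member's worth of space holds parity; hot spares are not counted.
    echo $(( (active - 1) * member_mb ))
}
```

With the 2145 MB partitions created above, `raid5_usable_mb 3 2145` gives 4290, which leaves room for the 2 GB logical volume created in the LVM step.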

LVM:

[root@doo2 ~]# pvcreate /dev/md5

Physical volume "/dev/md5" successfully created

[root@doo2 ~]# vgcreate vg0 /dev/md5

Volume group "vg0" successfully created

[root@doo2 ~]# lvcreate -L 2G -n web vg0

Logical volume "web" created

[root@doo2 ~]# mkdir /web

[root@doo2 ~]# mkfs.ext4 /dev/vg0/web

[root@doo2 ~]# mount /dev/vg0/web /web/

[root@doo2 ~]# echo "doo">/web/index.html

[root@nfs ~]# vim /etc/fstab

……

/dev/vg0/web /web ext4 defaults 1 2

[root@nfs ~]# vim /etc/exports

/web 192.168.18.0/24(rw,sync,no_root_squash)

[root@nfs ~]# /etc/init.d/rpcbind start

[root@nfs ~]# /etc/init.d/nfs start

[root@doo2 ~]# showmount -e 192.168.18.34

Export list for 192.168.18.34:

/web 192.168.18.0/24

Mount the share on web1 and web2:

[root@web1 ~]# vim /etc/fstab

……

192.168.18.34:/web /var/www/html nfs defaults,_netdev 1 2

[root@web1 ~]# yum -y install nfs-utils

[root@doo ~]# mount 192.168.18.34:/web /var/www/html/

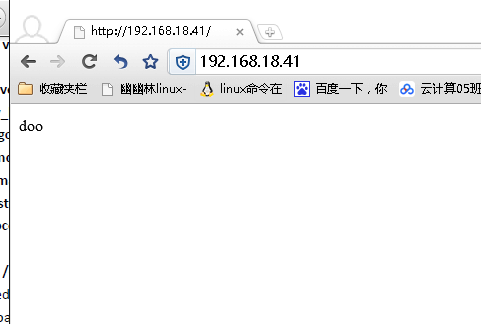

Client test:

Refresh the page several times, then look at the connection table on BL:

[root@doo2 keepalived]# ipvsadm -L -n -c

IPVS connection entries

pro expire state source virtual destination

TCP 01:58 FIN_WAIT 192.168.18.140:53988 192.168.18.41:80 192.168.18.32:80

TCP 01:57 FIN_WAIT 192.168.18.140:53977 192.168.18.41:80 192.168.18.33:80

TCP 00:58 SYN_RECV 192.168.18.140:53998 192.168.18.41:80 192.168.18.33:80

TCP 01:57 FIN_WAIT 192.168.18.140:53981 192.168.18.41:80 192.168.18.32:80

TCP 01:57 FIN_WAIT 192.168.18.140:53974 192.168.18.41:80 192.168.18.32:80

TCP 01:57 FIN_WAIT 192.168.18.140:53976 192.168.18.41:80 192.168.18.32:80
