
High-availability cluster corosync+pacemaker+drbd+httpd ---- manual configuration


Shared-storage high-availability solution ----- DRBD

DRBD: Distributed Replicated Block Device

One of the file-sharing solutions used in high-availability clusters.

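Common ways to implement shared storage

DAS (Direct Attached Storage): a device connected through a dedicated cable directly to a storage controller interface on the motherboard, for example an external RAID array.

Parallel interfaces: IDE, SCSI

How the two interfaces differ:

Data access over IDE: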

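Start with reading a file. When a user-space process wants to read or write a file, it first makes a system call to the kernel, and the process switches from user mode to kernel mode. The kernel invokes the driver, which drives the hardware with the appropriate protocol and reads the data in blocks into a kernel-space buffer. When the data is ready, it is copied into user-space memory and the user-space process is notified that the data is ready to use; execution then switches from kernel mode back to user mode. Throughout this process the CPU has to take part in addressing the data, transmitting control signals, addressing memory and so on, all of which consumes CPU time.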

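SCSI stands for Small Computer System Interface. A SCSI adapter carries its own processor and related hardware, so it saves host CPU time: when the system reads a file, it simply hands the SCSI-protocol data to the SCSI card for processing, which greatly reduces CPU usage and improves efficiency. This is also why SCSI disks cost more than IDE disks. The CPU usage of a SCSI interface is typically around 10% of that of an IDE interface. As for connectivity, one SCSI bus can attach 7 (narrow) or 15 (wide) disks: a narrow bus has eight IDs, one of which is taken by the SCSI controller itself. The bus must also be terminated. "Termination" means setting a jumper on, or installing a terminator at, the last SCSI device to tell the SCSI controller that the bus ends there.

Today a single SCSI port can connect a whole SCSI card rather than just one disk, which forms a SCSI storage network. Each port on the bus is called a target, and each disk behind a port is identified by a LUN (Logical Unit Number).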

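Serial interfaces: SATA, SAS, USB

NAS: Network Attached Storage

Storage is offered as a service over the network, for example NFS or Samba; the storage provided this way is at the file-system level.

SAN: Storage Area Network

Because SCSI transmission depends on specific cables, SCSI-protocol data can be encapsulated a second time in a network transport protocol and sent over ordinary network cabling to cover longer distances. At the other end, a network interface card receives the data, interprets the SCSI protocol and writes it to the storage device, so the back-end storage can take any form and is not limited to SCSI disks. The front end does not need to understand any of this transport: from its point of view it is connected to a SCSI disk, which it can partition, format and so on. This approach therefore provides block-level storage.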

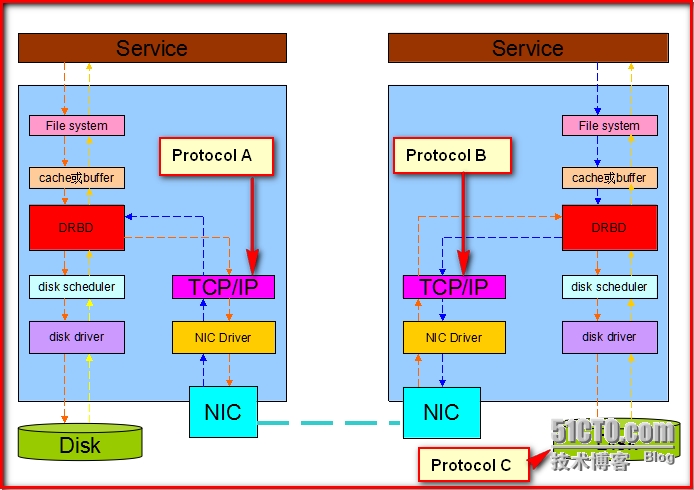

DRBD: how it works

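When data reaches the cache/buffer layer, it is split into two copies: one is written to the local disk, and the other is sent through the DRBD device over the network to the remote node, whose DRBD device receives it and writes it to its disk. DRBD offers three replication protocols, which differ in when a write is considered complete:

Protocol A (asynchronous): the write is complete once the local disk write has finished and the data has been handed to the local TCP/IP stack.

Protocol B (memory synchronous): the write is complete once the local disk write has finished and the data has reached the remote node's TCP/IP stack.

Protocol C (synchronous): the write is complete only after the data has also been written to the remote disk.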
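The protocol in use can be read back from /proc/drbd: in the DRBD 8.4 status line shown later in this article (for example `0: cs:Connected ro:Secondary/Secondary ds:Inconsistent/Inconsistent C r-----`), the single letter after the ds: field is the replication protocol. The POSIX shell sketch below uses two hypothetical helpers, drbd_protocol and protocol_ack_point, to extract that letter and print when a write is acknowledged under each protocol. It assumes the 8.4 line layout; other DRBD versions may format /proc/drbd differently.

```shell
#!/bin/sh
# Sketch (hypothetical helpers): read the replication protocol from a DRBD 8.4
# /proc/drbd status line and describe when a write counts as complete.

# Print the token that follows the ds: field, i.e. the protocol letter.
drbd_protocol() {
    printf '%s\n' "$1" | awk '{
        for (i = 1; i < NF; i++)
            if (index($i, "ds:") == 1) { print $(i + 1); exit }
    }'
}

# Map a protocol letter to the point at which DRBD reports the write as done.
protocol_ack_point() {
    case "$1" in
        A) echo "local disk write finished and data placed in the local TCP send buffer" ;;
        B) echo "local disk write finished and data received by the peer node" ;;
        C) echo "data written to both the local and the remote disk" ;;
        *) echo "unknown protocol: $1" >&2; return 1 ;;
    esac
}

line='0: cs:Connected ro:Secondary/Secondary ds:Inconsistent/Inconsistent C r-----'
proto=$(drbd_protocol "$line")
echo "protocol $proto: $(protocol_ack_point "$proto")"
```

On a running node the same helper can be fed the real status line, e.g. `drbd_protocol "$(grep '^ *0:' /proc/drbd)"`.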

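Operating modes:

1. Primary/secondary (master/secondary): only the primary node can read and write; the secondary node can neither read nor write, and the device cannot be mounted there.

2. Primary/primary (master/master, dual-primary): both nodes are primary; in this mode concurrent reads and writes require a cluster file system such as GFS2 or OCFS2.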

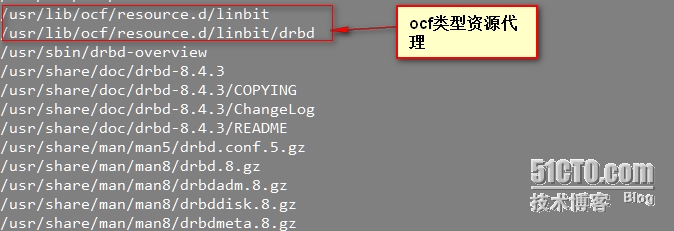

Installing DRBD

Note: the kernel module package must match the running kernel version (uname -r).

Download location: ftp://rpmfind.net/linux/atrpms

drbd-8.4.3-33.el6.x86_64.rpm (user-space tools)

drbd-kmdl-2.6.32-431.el6-8.4.3-33.el6.x86_64.rpm (kernel module)
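Because the kmdl package is built for exactly one kernel, a quick check before installing avoids a module that will not load. The sketch below (hypothetical helper kmdl_matches_kernel) assumes the atrpms naming pattern seen above, drbd-kmdl-<kernel>-<drbd version>.<arch>.rpm, where the kernel part is the output of `uname -r` without its trailing architecture.

```shell
#!/bin/sh
# Sketch (hypothetical helper): check whether a drbd-kmdl package file name
# was built for a given kernel release. Assumed naming scheme:
#   drbd-kmdl-<kernel version-release>-<drbd version>.<arch>.rpm
kmdl_matches_kernel() {
    pkg=$1             # e.g. drbd-kmdl-2.6.32-431.el6-8.4.3-33.el6.x86_64.rpm
    krel=$2            # e.g. output of `uname -r`: 2.6.32-431.el6.x86_64
    kbase=${krel%.*}   # drop the trailing ".x86_64" -> 2.6.32-431.el6
    case "$pkg" in
        "drbd-kmdl-$kbase-"*) return 0 ;;
        *) return 1 ;;
    esac
}

if kmdl_matches_kernel drbd-kmdl-2.6.32-431.el6-8.4.3-33.el6.x86_64.rpm 2.6.32-431.el6.x86_64; then
    echo "kernel module package matches the kernel"
else
    echo "kernel module package does NOT match; download the build for your kernel"
fi
```

On each node, pass the real kernel release: `kmdl_matches_kernel drbd-kmdl-2.6.32-431.el6-8.4.3-33.el6.x86_64.rpm "$(uname -r)"`.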

[root@localhost ~]# rpm -ih drbd-8.4.3-33.el6.x86_64.rpm drbd-kmdl-2.6.32-431.el6-8.4.3-33.el6.x86_64.rpm

warning:: Header V4 DSA/SHA1 Signature, key ID 66534c2b: NOKEY

########################################### [100%]

########################################### [ 50%]

########################################### [100%]

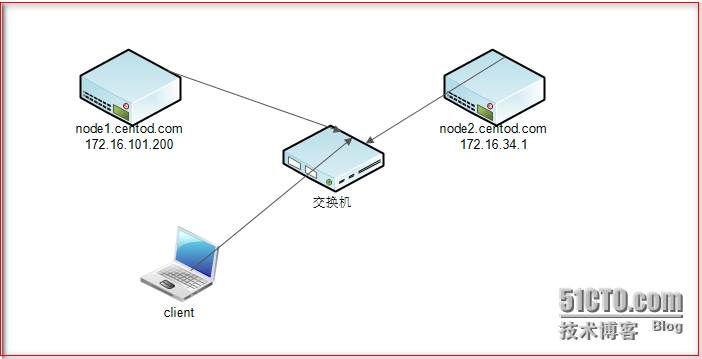

Lab topology:

On both node1 and node2, install the two packages, edit /etc/hosts so that the host names resolve, and keep the clocks synchronized:

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.16.101.200 node1.centod.com node1

172.16.34.1 node2.centod.com node2
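The same host entries are needed on both nodes, so it is convenient to add them idempotently. The sketch below uses a hypothetical helper, add_host_entry, that appends a line only when the FQDN is not already listed. It does not detect a name that is already present with a different address.

```shell
#!/bin/sh
# Sketch (hypothetical helper): append "IP FQDN SHORT" to a hosts file unless
# FQDN already appears on a non-comment line, so it is safe to rerun.
# Usage: add_host_entry FILE IP FQDN SHORTNAME
add_host_entry() {
    hosts_file=$1 ip=$2 fqdn=$3 short=$4
    # awk exits 0 if some non-comment line lists $fqdn after the address.
    if awk -v h="$fqdn" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
                         END { exit (found ? 0 : 1) }' "$hosts_file"; then
        return 0
    fi
    printf '%s %s %s\n' "$ip" "$fqdn" "$short" >> "$hosts_file"
}
```

Run it as root on each node, once per host: `add_host_entry /etc/hosts 172.16.101.200 node1.centod.com node1` and `add_host_entry /etc/hosts 172.16.34.1 node2.centod.com node2`. DRBD matches the `on` sections of a resource against `uname -n`, so each node's host name should also be set to node1.centod.com or node2.centod.com respectively.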

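On each of the two hosts, create a partition of the same size (2G here). This partition must be the one named by the disk option of the resource definition below, so adjust the device name to your system:

echo -e "n\np\n3\n\n+2G\nw\n" | fdisk /dev/sda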
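The echo | fdisk line feeds fdisk its interactive answers in order: n (new partition), p (primary), the partition number, Enter to accept the default first cylinder, +2G as the size, and w to write the table. A small helper (hypothetical name fdisk_new_primary) makes those answers explicit and reusable. It assumes the prompt sequence of the CentOS 6 fdisk and a free primary slot; other fdisk versions or disk layouts may ask different questions.

```shell
#!/bin/sh
# Sketch (hypothetical helper): print the answers fdisk expects when creating
# a new primary partition, one per line:
#   n, p, <number>, <empty line = default first cylinder>, +<size>, w
fdisk_new_primary() {
    printf 'n\np\n%s\n\n+%s\nw\n' "$1" "$2"
}

# Show the generated answers, marking the empty "accept default" line.
fdisk_new_primary 3 2G | sed 's/^$/<Enter>/'
```

To use it, pipe the answers into fdisk for the disk you chose (the command above uses /dev/sda), e.g. `fdisk_new_primary 3 2G | fdisk /dev/sda`, and then run `partx -a /dev/sda` so the kernel registers the new partition without a reboot when the disk is already in use.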

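The DRBD configuration is divided into sections (the global and common sections are kept in /etc/drbd.d/global_common.conf by default):

1. The global section: global { usage-count no; } sets whether this installation is reported to the DRBD project for its usage statistics.

2. The common section, whose settings are shared by all resources:

common {
    protocol C;
    handlers { }
    startup { }
    disk {
        on-io-error detach;      # action when the backing disk of a resource on this node reports an I/O error; detach drops the disk, and synchronization can no longer take place either
    }
    net {
        cram-hmac-alg "sha1";    # peer authentication algorithm
        shared-secret "hzm132";  # shared secret (password)
    }
    syncer {
        rate 1000M;              # resync rate; right after DRBD starts, the two disks must be aligned bit by bit, which means a full-device synchronization
    }
}

Options that are not listed keep their default values.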

3. Define a resource, in the following format:

vim /etc/drbd.d/web.res

resource web {
    on node1.centod.com {
        device    /dev/drbd0;
        disk      /dev/sdb3;
        address   172.16.101.200:7789;
        meta-disk internal;
    }
    on node2.centod.com {
        device    /dev/drbd0;
        disk      /dev/sdb3;
        address   172.16.34.1:7789;
        meta-disk internal;
    }
}
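The two `on` sections differ only in host name and address, and the same resource file has to be present on both nodes. The sketch below (hypothetical helper gen_drbd_res) prints a two-node resource from a single template, which keeps device, disk and port identical on both sides. The argument order is an assumption of this sketch, not a DRBD convention.

```shell
#!/bin/sh
# Sketch (hypothetical helper): print a DRBD resource whose "on" sections are
# all generated from one template (same device, disk and port).
# Usage: gen_drbd_res RES DEVICE DISK PORT HOST1 IP1 HOST2 IP2
gen_drbd_res() {
    res=$1 dev=$2 disk=$3 port=$4
    shift 4
    printf 'resource %s {\n' "$res"
    # Remaining arguments come in HOST IP pairs.
    while [ $# -ge 2 ]; do
        printf '    on %s {\n' "$1"
        printf '        device    %s;\n' "$dev"
        printf '        disk      %s;\n' "$disk"
        printf '        address   %s:%s;\n' "$2" "$port"
        printf '        meta-disk internal;\n'
        printf '    }\n'
        shift 2
    done
    printf '}\n'
}

gen_drbd_res web /dev/drbd0 /dev/sdb3 7789 \
    node1.centod.com 172.16.101.200 \
    node2.centod.com 172.16.34.1
```

Redirect the output to /etc/drbd.d/web.res, copy global_common.conf and web.res to node2 (for example with scp), and run `drbdadm dump web` on each node: drbdadm parses the configuration and prints the resource, which surfaces syntax errors before create-md.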

Initialize the resource metadata on both hosts:

drbdadm create-md web

[need to type 'yes' to confirm] yes

Writing meta data...

initializing activity log

NOT initializing bitmap

lk_bdev_save(/var/lib/drbd/drbd-minor-0.lkbd) failed: No such file or directory

New drbd meta data block successfully created.

lk_bdev_save(/var/lib/drbd/drbd-minor-0.lkbd) failed: No such file or directory

The message "New drbd meta data block successfully created." confirms that the metadata was initialized.

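Then start the drbd service on both hosts, at about the same time: the init script waits for the peer node to appear, as the output below shows.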

[root@localhost ~]# service drbd start

Starting DRBD resources: [

create res: web

prepare disk: web

adjust disk: web

adjust net: web

]

..........

***************************************************************

DRBD's startup script waits for the peer node(s) to appear.

- In case this node was already a degraded cluster before the

reboot the timeout is 0 seconds. [degr-wfc-timeout]

- If the peer was available before the reboot the timeout will

expire after 0 seconds. [wfc-timeout]

(These values are for resource 'web'; 0 sec -> wait forever)

To abort waiting enter 'yes' [ 14]:

Check the status:

cat /proc/drbd

version: 8.4.3 (api:1/proto:86-101)

GIT-hash: 89a294209144b68adb3ee85a73221f964d3ee515 build by gardner@, 2013-11-29 12:28:00

0: cs:Connected ro:Secondary/Secondary ds:Inconsistent/Inconsistent C r-----

ns:0 nr:0 dw:0 dr:0 al:0 bm:0 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:2103412
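The status line packs several fields: cs is the connection state, ro the roles (local/peer) and ds the disk states (local/peer). When scripting checks around the following steps, it helps to extract one field at a time. A minimal sketch, assuming the DRBD 8.4 /proc/drbd layout shown above; drbd_field is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch (hypothetical helper): print one field of a DRBD 8.4 status line.
#   cs = connection state, ro = roles (local/peer), ds = disk states (local/peer)
# Usage: drbd_field KEY [MINOR] [FILE]    e.g. drbd_field ds 0 /proc/drbd
drbd_field() {
    key=$1 minor=${2:-0} file=${3:-/proc/drbd}
    # Only the line that starts with "<minor>:" is the status line of that device.
    awk -v k="$key:" -v m="$minor:" '$1 == m {
        for (i = 2; i <= NF; i++)
            if (index($i, k) == 1) { print substr($i, length(k) + 1); exit }
    }' "$file"
}

# Demo on a copy of the status shown above.
sample=$(mktemp)
cat > "$sample" <<'EOF'
version: 8.4.3 (api:1/proto:86-101)
 0: cs:Connected ro:Secondary/Secondary ds:Inconsistent/Inconsistent C r-----
    ns:0 nr:0 dw:0 dr:0 al:0 bm:0 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:2103412
EOF
echo "connection: $(drbd_field cs 0 "$sample")"
echo "roles:      $(drbd_field ro 0 "$sample")"
echo "disks:      $(drbd_field ds 0 "$sample")"
rm -f "$sample"
```

drbdadm can also report these values directly (`drbdadm role web`, `drbdadm cstate web`, `drbdadm dstate web`); parsing /proc/drbd is shown here because it is the output this walkthrough works with.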

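Right after startup both nodes are in the Secondary/Secondary role, and both disks are Inconsistent.

Force one node (and only one) to become primary; this also starts the initial full synchronization: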

[root@localhost ~]# drbdadm primary --force web

[root@localhost ~]# cat /proc/drbd

version: 8.4.3 (api:1/proto:86-101)

GIT-hash: 89a294209144b68adb3ee85a73221f964d3ee515 build by gardner@, 2013-11-29 12:28:00

0: cs:SyncSource ro:Primary/Secondary ds:UpToDate/Inconsistent C r---n-

ns:217216 nr:0 dw:0 dr:224928 al:0 bm:13 lo:2 pe:3 ua:8 ap:0 ep:1 wo:f oos:188837

[=>..................] sync'ed: 10.4% (1888372/2103412)K   (synchronization has started)

finish: 0:00:26 speed: 71,680 (71,680) K/sec
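Before demoting the primary or testing a switchover, it is safer to wait until both disks are UpToDate, since a node whose disk is still Inconsistent cannot simply be promoted. A sketch of a polling helper (hypothetical name wait_for_uptodate), assuming the DRBD 8.4 /proc/drbd layout; the 5-second poll interval and the default 600-second timeout are arbitrary choices of this sketch.

```shell
#!/bin/sh
# Sketch (hypothetical helper): wait until a DRBD device reports
# ds:UpToDate/UpToDate in a /proc/drbd-style file, polling every 5 seconds.
# Usage: wait_for_uptodate [MINOR] [TIMEOUT_SECONDS] [FILE]
wait_for_uptodate() {
    minor=${1:-0} timeout=${2:-600} file=${3:-/proc/drbd}
    waited=0
    while :; do
        # Value of the ds: field on the "<minor>: cs:..." status line.
        ds=$(awk -v m="$minor:" '$1 == m {
                 for (i = 2; i <= NF; i++)
                     if (index($i, "ds:") == 1) { print substr($i, 4); exit }
             }' "$file")
        if [ "$ds" = "UpToDate/UpToDate" ]; then
            echo "drbd$minor: both disks are UpToDate"
            return 0
        fi
        if [ "$waited" -ge "$timeout" ]; then
            echo "drbd$minor: still ${ds:-unknown} after ${timeout}s" >&2
            return 1
        fi
        sleep 5
        waited=$((waited + 5))
    done
}
```

For example, `wait_for_uptodate 0 600 && echo "initial sync finished"` blocks for at most about ten minutes on the primary.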

Check the status again after the synchronization has finished:

[root@localhost ~]# cat /proc/drbd

version: 8.4.3 (api:1/proto:86-101)

GIT-hash: 89a294209144b68adb3ee85a73221f964d3ee515 build by gardner@, 2013-11-29 12:28:00

0: cs:Connected ro:Primary/Secondary ds:UpToDate/UpToDate C r-----

ns:2103412 nr:0 dw:0 dr:2104084 al:0 bm:129 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:0

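The resource is now in primary/secondary mode, with both disks UpToDate.

Create a file system on the DRBD device on the primary node (the secondary receives it through replication):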

[root@localhost ~]# mke2fs -t ext4 /dev/drbd0

mke2fs 1.41.12 (17-May-2010)

Filesystem label=

OS type: Linux

Block size=4096 (log=2)

Fragment size=4096 (log=2)

Stride=0 blocks, Stripe width=0 blocks

131648 inodes, 525853 blocks

26292 blocks (5.00%) reserved for the super user

First data block=0

Maximum filesystem blocks=541065216

17 block groups

32768 blocks per group, 32768 fragments per group

7744 inodes per group

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912

Writing inode tables: done

Creating journal (16384 blocks): done

Writing superblocks and filesystem accounting information: done

This filesystem will be automatically checked every 39 mounts or

180 days, whichever comes first. Use tune2fs -c or -i to override.

Mount the device on the primary node and write some data:

[root@localhost ~]# mount /dev/drbd0 /mnt

[root@localhost ~]# cp /etc/issue /mnt

[root@localhost ~]# ls /mnt

issue lost+found

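Next, check whether the data has been replicated to the other node.

Unmount the device on the current primary and demote it to secondary: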

[root@localhost ~]# umount /dev/drbd0

[root@localhost ~]# drbdadm secondary web

[root@localhost ~]# cat /proc/drbd

version: 8.4.3 (api:1/proto:86-101)

GIT-hash: 89a294209144b68adb3ee85a73221f964d3ee515 build by gardner@, 2013-11-29 12:28:00

0: cs:Connected ro:Secondary/Secondary ds:UpToDate/UpToDate C r-----

ns:2202732 nr:0 dw:99320 dr:2104805 al:27 bm:129 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:0

Promote node2 to primary, mount the device there, and check whether the data is present:

[root@node2 ~]# drbdadm primary web

[root@node2 ~]# cat /proc/drbd

version: 8.4.3 (api:1/proto:86-101)

GIT-hash: 89a294209144b68adb3ee85a73221f964d3ee515 build by gardner@, 2013-11-29 12:28:00

0: cs:Connected ro:Primary/Secondary ds:UpToDate/UpToDate C r-----

ns:0 nr:2202732 dw:2202732 dr:672 al:0 bm:129 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:0

[root@node2 ~]# mount /dev/drbd0 /mnt

[root@node2 ~]# ls /mnt

issue lost+found

The file written on node1 is present on node2, so the DRBD replication test succeeded.
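The manual switchover above follows a fixed order: in primary/secondary mode DRBD refuses to promote a node while its peer is still Primary, so the old primary must unmount and demote first. The dry-run sketch below (hypothetical helper switchover_plan) only prints that sequence for a given resource and pair of nodes; it does not run anything on either node. The Pacemaker order constraint configured in the next part automates the same ordering.

```shell
#!/bin/sh
# Sketch (hypothetical helper, dry run only): print the manual switchover
# steps in the order DRBD requires in primary/secondary mode.
# Usage: switchover_plan RESOURCE DEVICE MOUNTPOINT OLD_PRIMARY NEW_PRIMARY
switchover_plan() {
    res=$1 dev=$2 mnt=$3 old=$4 new=$5
    printf '%s: umount %s\n' "$old" "$dev"            # release the file system
    printf '%s: drbdadm secondary %s\n' "$old" "$res" # demote the old primary
    printf '%s: drbdadm primary %s\n' "$new" "$res"   # promote the new primary
    printf '%s: mount %s %s\n' "$new" "$dev" "$mnt"   # use the data there
}

switchover_plan web /dev/drbd0 /mnt node1 node2
```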

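httpd+corosync+pacemaker+drbd: a high-availability cluster based on DRBD storage

The lab environment is CentOS 6.5, and the configuration below continues on the two hosts used above. Since Pacemaker will manage DRBD and httpd from now on, unmount /mnt, stop the drbd service (service drbd stop), and keep both services from starting at boot (chkconfig drbd off; chkconfig httpd off) on both nodes.

First install the high-availability cluster suite on both hosts: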

[root@localhost ~]# yum install corosync pacemaker

Install the command-line configuration tool crmsh, which depends on pssh and redhat-rpm-config:

yum install crmsh-1.2.6-4.el6.x86_64.rpm \
    pssh-2.3.1-2.el6.x86_64.rpm \
    redhat-rpm-config-9.0.3-42.el6.centos.noarch.rpm

The Corosync configuration file (/etc/corosync/corosync.conf) is as follows:

compatibility: whitetank
totem {
    version: 2
    secauth: on
    threads: 0
    interface {
        ringnumber: 0
        bindnetaddr: 172.16.0.0
        mcastaddr: 226.194.231.1
        mcastport: 5405
        ttl: 1
    }
}
logging {
    fileline: off
    to_stderr: no
    to_logfile: yes
    to_syslog: no
    logfile: /var/log/cluster/corosync.log
    debug: off
    timestamp: on
    logger_subsys {
        subsys: AMF
        debug: off
    }
}
amf {
    mode: disabled
}
service {
    ver: 0
    name: pacemaker
    # user_mgmtd: yes
}
aisexec {
    user: root
    group: root
}
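bindnetaddr is a network address, not a host address: corosync compares it with the networks of its local interfaces to choose the interface for the ring. The value 172.16.0.0 above covers both node addresses if the lab uses a /16 netmask, which is an assumption here because the mask is not given. A POSIX shell sketch (hypothetical helper network_address) that derives the value from an address and a prefix length:

```shell
#!/bin/sh
# Sketch (hypothetical helper): derive a totem bindnetaddr value, i.e. the
# network address, from an IPv4 address and a prefix length.
# Assumes a shell with 64-bit arithmetic (bash, dash, busybox sh on 64-bit
# Linux) and octets written without leading zeros.
network_address() {
    ip=$1 prefix=$2
    # Split the dotted quad into $1..$4.
    old_ifs=$IFS
    IFS=.
    set -- $ip
    IFS=$old_ifs
    addr=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
    # Keep the top <prefix> bits of a 32-bit all-ones value.
    mask=$(( (4294967295 << (32 - prefix)) & 4294967295 ))
    net=$(( addr & mask ))
    printf '%d.%d.%d.%d\n' $(( (net >> 24) & 255 )) $(( (net >> 16) & 255 )) \
        $(( (net >> 8) & 255 )) $(( net & 255 ))
}

network_address 172.16.101.200 16   # node1
network_address 172.16.34.1 16      # node2
```

Both calls print 172.16.0.0, which is why a single bindnetaddr works on both nodes under the /16 assumption.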

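Generate the authentication key (needed because secauth is on):

corosync-keygen

corosync-keygen reads from /dev/random; if the entropy pool does not hold enough data, it blocks, so open another terminal and type on the keyboard until it finishes. The key is written to /etc/corosync/authkey. Copy it together with corosync.conf to the other node, keeping its permissions (for example scp -p /etc/corosync/authkey /etc/corosync/corosync.conf node2:/etc/corosync/), and then start corosync on both nodes with service corosync start.

Configure the high-availability resources: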

[root@node1 corosync]# crm

crm(live)# status

Last updated: Thu Sep 18 16:12:48 2014

Last change: Thu Sep 18 16:07:11 2014 via crmd on node2.centod.com

Stack: classic openais (with plugin)

Current DC: node2.centod.com - partition with quorum

Version: 1.1.10-14.el6-368c726

2 Nodes configured, 2 expected votes

0 Resources configured

Online: [ node1.centod.com node2.centod.com ]

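First set the cluster properties. This two-node lab has no fencing device and cannot keep quorum when one node fails, so STONITH is disabled and loss of quorum is ignored (both properties appear in the output of crm configure show):

crm(live)# configure

crm(live)configure# property stonith-enabled=false

crm(live)configure# property no-quorum-policy=ignore

Define the VIP resource: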

crm(live)configure# primitive vip ocf:heartbeat:IPaddr params ip=172.16.101.220

crm(live)configure# verify

Define the httpd resource:

crm(live)configure# primitive httpd lsb:httpd op monitor interval=20s timeout=20s op start timeout=20s op stop timeout=20s

crm(live)configure# verify

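Define the DRBD primitive. The Master and Slave monitor operations must use different intervals, because Pacemaker identifies a recurring operation by its name and interval:

crm(live)configure# primitive drbd ocf:linbit:drbd params drbd_resource=web op monitor role=Master interval=20s timeout=20s op monitor role=Slave interval=40s timeout=20s op start timeout=240s op stop timeout=100s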

crm(live)configure# verify

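Define the master/slave (multi-state clone) resource on top of the drbd primitive, with one master across two clone instances:

crm(live)configure# master ms-webdrbd drbd meta master-max=1 master-node-max=1 clone-max=2 clone-node-max=1 notify=true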

crm(live)configure# verify

Define the file system resource, which mounts the DRBD device on /var/www/html:

crm(live)configure# primitive httpd-storage ocf:heartbeat:Filesystem params device=/dev/drbd0 directory=/var/www/html fstype=ext4 op monitor interval=20s timeout=40s op start timeout=60s op stop timeout=60s

crm(live)configure# verify

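Define the colocation constraint, which keeps the file system, the DRBD master, httpd and the VIP on the same node: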

crm(live)configure# colocation storage-nrbd-master-httpd-vip inf: httpd-storage ms-webdrbd:Master httpd vip

crm(live)configure# verify

Define the order constraint: promote DRBD, then mount the file system, then start the VIP and httpd:

crm(live)configure# order nrbd-storage-vip-httpd inf: ms-webdrbd:promote httpd-storage:start vip httpd

crm(live)configure# verify

If verify reports no errors, commit:

crm(live)configure# commit

View the result:

crm configure show

node node2.centod.com

node node3.centod.com

primitive drbd ocf:linbit:drbd \

params drbd_resource="web" \

op monitor role="Master" interval="20s" timeout="30s" \

op monitor role="Slave" interval="40s" timeout="30" \

op start timeout="240s" interval="0" \

op stop timeout="100s" interval="0"

primitive httpd lsb:httpd \

op monitor interval="20s" timeout="20s" \

op start timeout="20s" interval="0" \

op stop timeout="20s" interval="0" \

meta target-role="Started"

primitive httpd-storage ocf:heartbeat:Filesystem \

params device="/dev/drbd0" directory="/var/www/html" fstype="ext4" \

op monitor interval="20s" timeout="40s" \

op start timeout="60s" interval="0" \

op stop timeout="60s" interval="0" \

meta target-role="Started"

primitive vip ocf:heartbeat:IPaddr \

params ip="172.16.101.220" \

meta target-role="Started"

ms ms-webdrbd drbd \

meta master-max="1" master-node-max="1" clone-max="2" clone-node-max="1" notify="true" target-role="Started"

colocation storage-nrbd-master-httpd-vip inf: httpd-storage ms-webdrbd:Master httpd vip

order nrbd-storage-vip-httpd inf: ms-webdrbd:promote httpd-storage:start vip httpd

property $id="cib-bootstrap-options" \

dc-version="1.1.10-14.el6-368c726" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

Check the cluster status:

Online: [ node1.centod.com ]

OFFLINE: [ node2.centod.com ]

vip(ocf::heartbeat:IPaddr):Started node1.centod.com

httpd(lsb:httpd):Started node1.centod.com

Master/Slave Set: ms-webdrbd [drbd]

Masters: [ node1.centod.com ]

Stopped: [ node2.centod.com ]

httpd-storage(ocf::heartbeat:Filesystem):Started node1.centod.com
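The colocation constraint should keep every resource on one node, which is easy to confirm from the status output. A sketch (hypothetical helper same_node_check) that reads crm status or crm_mon -1 output on standard input, collects the node of every "Started" primitive and of the DRBD master, and succeeds only if there is exactly one such node. It assumes the Pacemaker 1.1 status layout shown above; other versions format these lines differently.

```shell
#!/bin/sh
# Sketch (hypothetical helper): read crm status / crm_mon -1 output on stdin
# and check that all started primitives and the DRBD master share one node.
same_node_check() {
    nodes=$(awk '
        # "vip (ocf::heartbeat:IPaddr): Started node1" -> last field is the node
        /:[ \t]*Started[ \t]/ { print $NF }
        # "Masters: [ node1 ]" -> the field after the bracket is the node
        $1 == "Masters:" {
            for (i = 2; i <= NF; i++) if ($i == "[") { print $(i + 1); break }
        }' | sort -u)
    count=$(printf '%s\n' "$nodes" | grep -c . || true)
    if [ "$count" -eq 1 ]; then
        echo "all resources on $nodes"
        return 0
    fi
    echo "resources spread over: $(echo $nodes)" >&2
    return 1
}

same_node_check <<'EOF'
vip (ocf::heartbeat:IPaddr): Started node1.centod.com
httpd (lsb:httpd): Started node1.centod.com
Master/Slave Set: ms-webdrbd [drbd]
    Masters: [ node1.centod.com ]
    Stopped: [ node2.centod.com ]
httpd-storage (ocf::heartbeat:Filesystem): Started node1.centod.com
EOF
```

On a cluster node the same check runs as `crm_mon -1 | same_node_check`.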
