High-Availability Distributed Storage (Corosync + Pacemaker + DRBD + MooseFS)

=========================================================================================

I. Server Layout and Notes

=========================================================================================

1. Server information

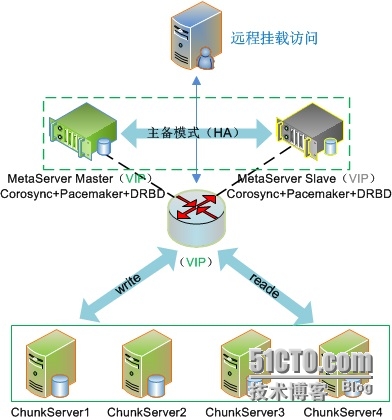

2. Overall architecture

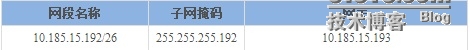

3. Network parameters

4. Additional services required on the metadata nodes

Corosync + Pacemaker + DRBD

5. Operating system

CentOS 6.3

6. Kernel version

kernel-2.6.32.43

7. Services to start

corosync

[Note: all other service resources are taken over and managed by this service.]

=========================================================================================

II. Building and Installing DRBD

=========================================================================================

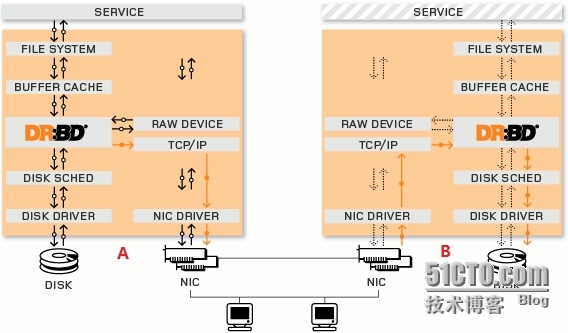

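1. About DRBD

For details, see: http://www.drbd.org/

DRBD source package:

http://oss.linbit.com/drbd/8.4/drbd-8.4.3.tar.gz

Notes:

(1) The kernel used here is 2.6.32, for which DRBD 8.4.3 is recommended;

(2) Starting with kernel 2.6.33, DRBD is included in the kernel as a standard component.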

# yum -y install kernel-devel kernel-headers flex

2. Install the userspace tools

# tar xvzf drbd-8.4.3.tar.gz

# cd drbd-8.4.3

# ./configure --prefix=/ --with-km

# make KDIR=/usr/src/kernel-linux-2.6.32.43

# make install

3. Install the drbd kernel module

# cd drbd

# make clean

# make KDIR=/usr/src/kernel-linux-2.6.32.43

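# mkdir -p /lib/modules/`uname -r`/kernel/lib/

# cp drbd.ko /lib/modules/`uname -r`/kernel/lib/

Note the startup order:

(1) Start the DRBD service, i.e. “/etc/init.d/drbd”, which also loads the DRBD kernel module dynamically;

(2) Then mount the DRBD device “/dev/drbd0”; doing this from “/etc/rc.d/rc.local” is recommended, and editing “/etc/fstab” is not.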

4. Hostname setup

Notes:

10.185.15.241 is the primary metadata node, named node1

10.185.15.242 is the standby metadata node, named node2

(1) On node1

# vim /etc/sysconfig/network

HOSTNAME=node1.mfs.com

# hostname node1.mfs.com

# vim /etc/hosts

10.185.15.241 node1.mfs.com node1

10.185.15.242 node2.mfs.com node2

(2) On node2

# vim /etc/sysconfig/network

HOSTNAME=node2.mfs.com

# hostname node2.mfs.com

# vim /etc/hosts

10.185.15.241 node1.mfs.com node1

10.185.15.242 node2.mfs.com node2

5. SSH trust setup

http://blog.csdn.net/codepeak/article/details/14447627

6. DRBD configuration

(1) Global and common configuration

# vim /etc/drbd.d/global_common.conf

global {

usage-count no;

# minor-count dialog-refresh disable-ip-verification

}

common {

protocol C;

handlers {

# These are EXAMPLE handlers only.

# They may have severe implications,

# like hard resetting the node under certain circumstances.

# Be careful when choosing your poison.

pri-on-incon-degr "/lib/drbd/notify-pri-on-incon-degr.sh; /lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

pri-lost-after-sb "/lib/drbd/notify-pri-lost-after-sb.sh; /lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

local-io-error "/lib/drbd/notify-io-error.sh; /lib/drbd/notify-emergency-shutdown.sh; echo o > /proc/sysrq-trigger ; halt -f";

# fence-peer "/lib/drbd/crm-fence-peer.sh";

# split-brain "/lib/drbd/notify-split-brain.sh root";

# out-of-sync "/lib/drbd/notify-out-of-sync.sh root";

# before-resync-target "/lib/drbd/snapshot-resync-target-lvm.sh -p 15 -- -c 16k";

# after-resync-target /lib/drbd/unsnapshot-resync-target-lvm.sh;

}

startup {

# wfc-timeout degr-wfc-timeout outdated-wfc-timeout wait-after-sb

wfc-timeout 0;

degr-wfc-timeout 120;

outdated-wfc-timeout 120;

}

options {

# cpu-mask on-no-data-accessible

}

disk {

# size max-bio-bvecs on-io-error fencing disk-barrier disk-flushes

# disk-drain md-flushes resync-rate resync-after al-extents

# c-plan-ahead c-delay-target c-fill-target c-max-rate

# c-min-rate disk-timeout

on-io-error detach;

#fencing resource-only;

}

net {

# protocol timeout max-epoch-size max-buffers unplug-watermark

# connect-int ping-int sndbuf-size rcvbuf-size ko-count

# allow-two-primaries cram-hmac-alg shared-secret after-sb-0pri

# after-sb-1pri after-sb-2pri always-asbp rr-conflict

# ping-timeout data-integrity-alg tcp-cork on-congestion

# congestion-fill congestion-extents csums-alg verify-alg

# use-rle

timeout 60;

connect-int 10;

ping-int 10;

max-buffers 2048;

max-epoch-size 2048;

cram-hmac-alg "sha1";

shared-secret "my_mfs_drbd";

}

syncer {

rate 1000M;

}

}

(2) Storage resource configuration

# vim /etc/drbd.d/mfs_store.res

resource mfs_store {

on node1.mfs.com {

device /dev/drbd0;

disk /dev/sda4;

address 10.185.15.241:7789;

meta-disk internal;

}

on node2.mfs.com {

device /dev/drbd0;

disk /dev/sda4;

address 10.185.15.242:7789;

meta-disk internal;

}

}

# scp /etc/drbd.d/{global_common.conf,mfs_store.res} root@node2:/etc/drbd.d/

[Note: the configuration files global_common.conf and mfs_store.res must be identical on the primary and secondary nodes.]

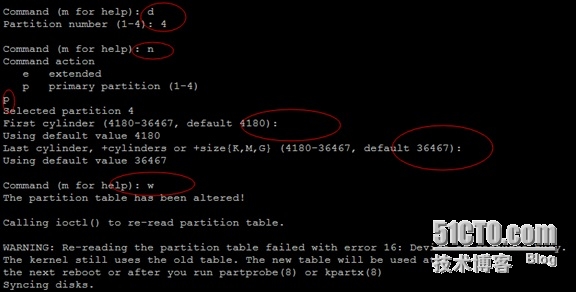

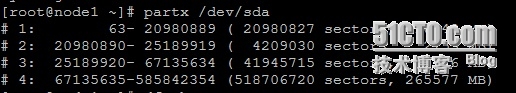

(3) Partition setup

## Delete the unused partition and recreate it, but do not format it

# umount /dev/sda4

# fdisk /dev/sda

# partx /dev/sda

# dd if=/dev/zero bs=1M count=128 of=/dev/sda4

## Remove the mount entry for /dev/sda4

# vim /etc/fstab

#/dev/sda4 /data ext3 noatime,acl,user_xattr 1 2

[Note: the steps above must be performed on both the primary and secondary nodes.]

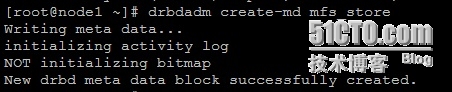

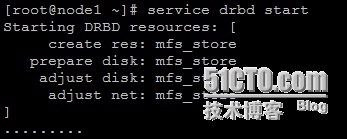

(4) DRBD service operations

## On node1 and node2, initialize the resource and start the DRBD service

# drbdadm create-md mfs_store

# service drbd start

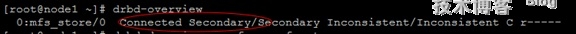

## Promote node1 to primary

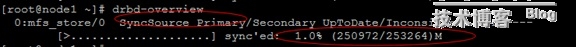

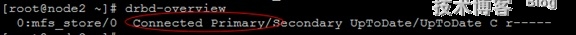

# drbd-overview

# drbdadm primary --force mfs_store

# drbd-overview

[Note: the “/dev/sda4” partition on node1 is now being synchronized to the “/dev/sda4” partition on node2; this takes some time.]

# drbd-overview

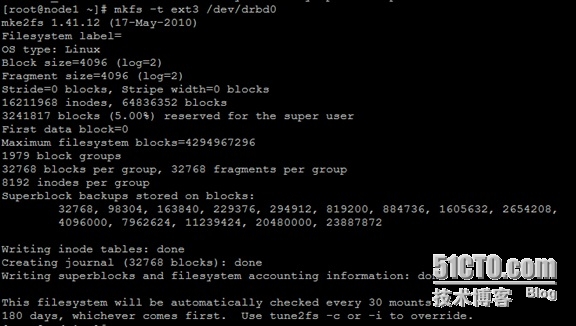

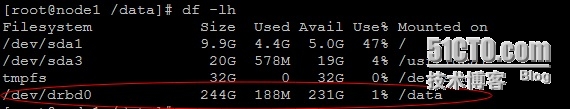
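While the initial synchronization runs, progress can be polled instead of re-running drbd-overview by hand. The following is a minimal sketch, assuming the DRBD 8.4 /proc/drbd format in which a fully synchronized device reports ds:UpToDate/UpToDate. The function names drbd_in_sync and wait_for_sync and the 10-second polling interval are illustrative choices, not part of DRBD.

```shell
# Return 0 when the status file (default: /proc/drbd) shows a device whose
# local and peer disks are both UpToDate. Assumes a single DRBD resource.
drbd_in_sync() {
    grep -q 'ds:UpToDate/UpToDate' "${1:-/proc/drbd}" 2>/dev/null
}

# Block until the device is in sync, checking every 10 seconds.
wait_for_sync() {
    until drbd_in_sync "$@"; do
        sleep 10
    done
}

# Usage on the primary node, before formatting and mounting /dev/drbd0:
#   wait_for_sync && echo "mfs_store is fully synchronized"
```

Formatting and mounting do not strictly have to wait for the sync to finish, but waiting keeps the first role switch test simple.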

## Format the DRBD device on the primary node and mount it

# mkfs -t ext3 /dev/drbd0

# mount /dev/drbd0 /data

(5) DRBD primary/secondary role switch test

On the primary node (node1), run:

# umount /data

# drbdadm secondary mfs_store

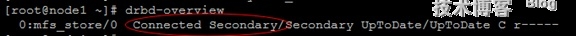

# drbd-overview

On the secondary node (node2), run:

# drbdadm primary mfs_store

# drbd-overview

# mount /dev/drbd0 /data
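The manual handover above can be wrapped in two small functions so the order of operations is not mistyped. This is a sketch only: the function names, the DRY_RUN switch, and the variable names are hypothetical, while the resource name, device, and mount point are the ones used in this article.

```shell
RESOURCE=mfs_store
DEVICE=/dev/drbd0
MOUNT_POINT=/data

# Run a command, or only print it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then
        echo "$*"
    else
        "$@"
    fi
}

# On the current primary: unmount first, then demote.
release_primary() {
    run umount "$MOUNT_POINT" &&
    run drbdadm secondary "$RESOURCE"
}

# On the node taking over: promote first, then mount.
take_primary() {
    run drbdadm primary "$RESOURCE" &&
    run mount "$DEVICE" "$MOUNT_POINT"
}

# Usage: run release_primary on node1, then take_primary on node2.
```

Setting DRY_RUN=1 prints the commands without executing them, which is a convenient way to review the sequence before touching a live device.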

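(6) Resolving split brain

When split brain occurs, status output similar to the following is typically shown:

0:mfs_store/0 WFConnection Primary/Unknown UpToDate/DUnknown C r-----

On the secondary node, run:

# drbdadm disconnect mfs_store

# drbdadm secondary mfs_store

# drbdadm connect --discard-my-data mfs_store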

# cat /proc/drbd

On the primary node, run:

# drbdadm connect mfs_store

# cat /proc/drbd
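Before discarding data on a node, it helps to confirm which connection state each side reports. The following is a small sketch, assuming drbd-overview output shaped like the sample line in this section (minor:resource/volume in the first column, connection state in the second). drbd_conn_state is a hypothetical helper name. WFConnection or StandAlone only shows that the peer is not connected; whether a split brain actually occurred should be confirmed in the kernel log.

```shell
# Print the connection state (second column) of one resource, reading
# drbd-overview output from standard input.
drbd_conn_state() {
    awk -v res="$1" '{ split($1, id, "[:/]"); if (id[2] == res) print $2 }'
}

# Usage on either node:
#   drbd-overview | drbd_conn_state mfs_store
```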

=========================================================================================

III. Building and Installing MFS

=========================================================================================

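Overview:

MooseFS is a distributed file system. A MooseFS deployment consists of the following four roles:

(1) Managing server (master)

(2) Metadata backup server (metalogger)

(3) Data storage servers (chunkservers)

(4) Client computers that mount the file system

Role responsibilities:

(1) Managing server: manages the chunkservers, schedules file reads and writes, reclaims and recovers file space, and handles multi-node replication;

(2) Metalogger: backs up the master server's changelog files (named changelog_ml.*.mfs) so that it can take over when the master server fails;

(3) Chunkservers: connect to the managing server, follow its scheduling, provide storage space, and transfer data to clients;

(4) Clients: mount the chunkserver storage managed by the master through the FUSE kernel interface, so the shared file system behaves like a local UNIX file system.

Notes:

We do not use a metalogger node here, because the metadata node is already replicated over the network by DRBD.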

1. Metaserver master node deployment

# /usr/sbin/groupadd mfs

# /usr/sbin/useradd mfs -g mfs -s /sbin/nologin

# tar xvzf mfs-1.6.27-5.gz

# cd mfs-1.6.27

# ./configure --prefix=/usr/local/mfs \

--with-default-user=mfs \

--with-default-group=mfs \

--disable-mfschunkserver \

--disable-mfsmount

# make && make install

# cd /usr/local/mfs/etc/mfs

# cp mfsmaster.cfg.dist mfsmaster.cfg

# cp mfsexports.cfg.dist mfsexports.cfg

# cp mfstopology.cfg.dist mfstopology.cfg

# vim mfsexports.cfg

10.0.0.0/8 / rw,alldirs,maproot=0

172.0.0.0/8 / rw,alldirs,maproot=0

# vim mfsmaster.cfg

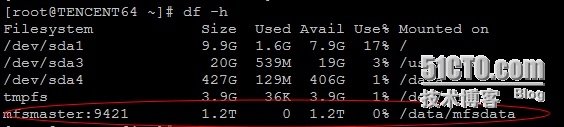

DATA_PATH = /data/mfsmetadata

# mkdir -p /data/mfsmetadata

# cp -a /usr/local/mfs/var/mfs/metadata.mfs.empty /data/mfsmetadata/metadata.mfs

# chown -R mfs:mfs /data/mfsmetadata

# vim /etc/hosts

10.185.15.241 mfsmaster [Note: this will later be changed to the VIP]

10.137.153.224 mfschunkserver1

10.166.147.229 mfschunkserver2

10.185.4.99 mfschunkserver3

# /usr/local/mfs/sbin/mfsmaster start

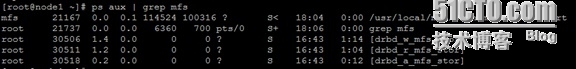

# ps aux | grep mfs

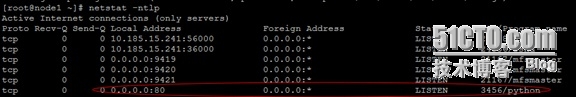

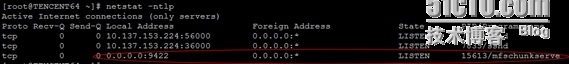

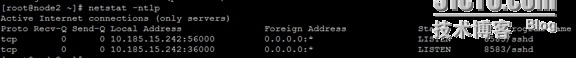

# netstat -ntlp

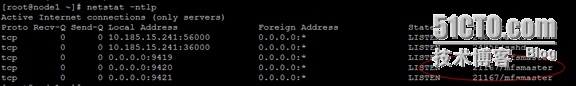

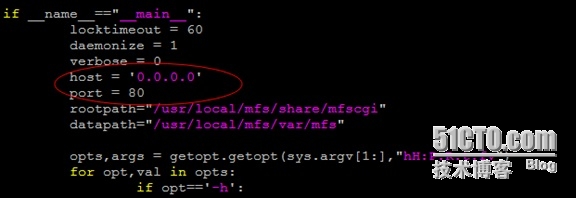

Monitoring dashboard: change the default bind IP address and port:

any --> 0.0.0.0

9425 --> 80

# vim /usr/local/mfs/sbin/mfscgiserv

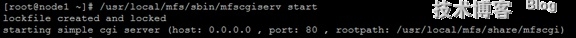

# /usr/local/mfs/sbin/mfscgiserv start

# netstat -ntlp

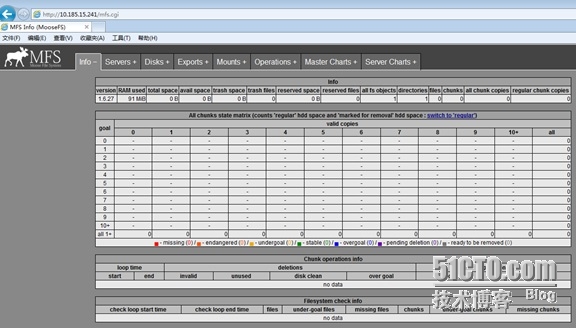

Access: http://10.185.15.241 [Note: later, access it through the VIP]

2. Metaserver slave node deployment

# /usr/sbin/groupadd mfs

# /usr/sbin/useradd mfs -g mfs -s /sbin/nologin

# scp -r root@node1:/usr/local/mfs /usr/local/

# vim /etc/hosts

10.185.15.241 mfsmaster [Note: this will later be changed to the VIP]

10.137.153.224 mfschunkserver1

10.166.147.229 mfschunkserver2

10.185.4.99 mfschunkserver3

3. Chunkserver node deployment

# /usr/sbin/groupadd mfs

# /usr/sbin/useradd mfs -g mfs -s /sbin/nologin

# tar xvzf mfs-1.6.27-5.gz

# cd mfs-1.6.27

# ./configure --prefix=/usr/local/mfs \

--with-default-user=mfs \

--with-default-group=mfs \

--disable-mfsmaster \

--disable-mfsmount

# make && make install

# cd /usr/local/mfs/etc/mfs

# cp mfschunkserver.cfg.dist mfschunkserver.cfg

# cp mfshdd.cfg.dist mfshdd.cfg

# vim /etc/hosts

10.185.15.241 mfsmaster [Note: this will later be changed to the VIP]

# mkdir -p /data/mfsmetadata

# chown -R mfs:mfs /data/mfsmetadata

# vim mfschunkserver.cfg

DATA_PATH = /data/mfsmetadata

# mkdir -p /data/mfschunk

# chown -R mfs:mfs /data/mfschunk

## Multiple paths can be added

# vim mfshdd.cfg

/data/mfschunk

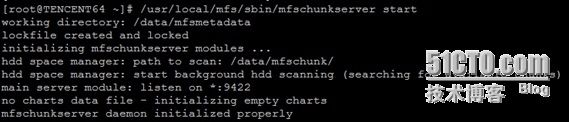

# /usr/local/mfs/sbin/mfschunkserver start

# netstat -ntlp

4. MFS client node deployment

# /usr/sbin/groupadd mfs

# /usr/sbin/useradd mfs -g mfs -s /sbin/nologin

# tar xvzf fuse-2.9.2.tar.gz

# cd fuse-2.9.2

# ./configure --prefix=/usr/local

# make && make install

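Add the following export (so that configure can find the FUSE library installed above through pkg-config); otherwise mounting the MooseFS file system will fail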

# vim /etc/profile

export PKG_CONFIG_PATH=/usr/local/lib/pkgconfig:$PKG_CONFIG_PATH

# source /etc/profile

# tar xvzf mfs-1.6.27-5.gz

# cd mfs-1.6.27

# ./configure --prefix=/usr/local/mfs \

--with-default-user=mfs \

--with-default-group=mfs \

--enable-mfsmount

# make && make install

# vim /etc/hosts

10.185.15.241 mfsmaster [Note: this will later be changed to the VIP]

# mkdir -p /data/mfsdata

# /usr/local/mfs/bin/mfsmount /data/mfsdata -H mfsmaster

## Set the number of copies kept for each file (goal)

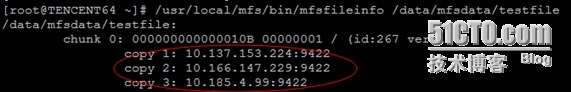

/usr/local/mfs/bin/mfsrsetgoal 3 /data/mfsdata

echo 'mytest' > /data/mfsdata/testfile

/usr/local/mfs/bin/mfsfileinfo /data/mfsdata/testfile

## Set how long deleted files are kept before their space is reclaimed (in seconds)

/usr/local/mfs/bin/mfsrsettrashtime 600 /data/mfsdata

## Unmount the local mount

# umount -l /data/mfsdata

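5. A recommendation

To stop a MooseFS cluster safely, the following order is recommended:

umount -l /data/mfsdata # on the clients: unmount the MooseFS file system

/usr/local/mfs/sbin/mfschunkserver stop # stop the chunkserver processes

/usr/local/mfs/sbin/mfsmaster stop # stop the master server process

To start a MooseFS cluster safely, the following order is recommended:

/usr/local/mfs/sbin/mfsmaster start # start the master server process

/usr/local/mfs/sbin/mfschunkserver start # start the chunkserver processes

/usr/local/mfs/bin/mfsmount /data/mfsdata -H mfsmaster # on the clients: mount the MooseFS file system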

=========================================================================================

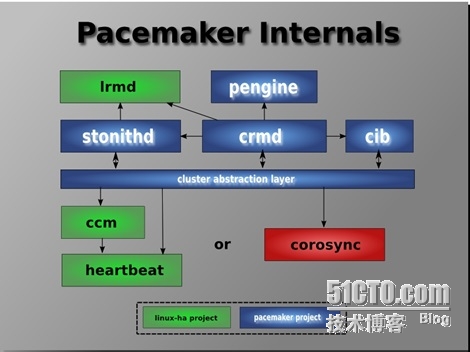

IV. Corosync + Pacemaker (HA)

=========================================================================================

1. Overview

For details, see:

https://github.com/corosync

https://github.com/ClusterLabs/pacemaker

https://github.com/crmsh/crmsh

2. Preparation

(1) Provide an LSB-style init script for the master server

# vim /etc/init.d/mfsmaster

#!/bin/sh

#

# mfsmaster - this script starts and stops the mfs metaserver daemon

#

# chkconfig: 345 91 10

# description: startup script for mfs metaserver

PATH=/usr/local/sbin:/usr/local/bin:/sbin:/bin:/usr/sbin:/usr/bin

MFS_PATH="/usr/local/mfs/sbin"

LOCK_FILE1="/usr/local/mfs/var/mfs/.mfscgiserv.lock"

LOCK_FILE2="/data/mfsmetadata/.mfsmaster.lock"

. /etc/rc.d/init.d/functions

. /etc/sysconfig/network

start() {

[[ -z `netstat -ntlp | grep mfsmaster` ]] && rm -f ${LOCK_FILE2}

[[ -z `ps aux | grep mfscgiserv | grep -v grep` ]] && rm -f ${LOCK_FILE1}

if [[ -z `netstat -ntlp | grep mfsmaster` && -n `ps aux | grep mfscgiserv | grep -v grep` ]]; then

kill -9 `ps aux | grep mfscgiserv | grep -v grep | awk '{print $2}'`

rm -f ${LOCK_FILE1} ${LOCK_FILE2}

fi

$MFS_PATH/mfsmaster start

$MFS_PATH/mfscgiserv start

}

stop() {

$MFS_PATH/mfsmaster stop

$MFS_PATH/mfscgiserv stop

}

restart() {

$MFS_PATH/mfsmaster restart

$MFS_PATH/mfscgiserv restart

}

status() {

$MFS_PATH/mfsmaster test

$MFS_PATH/mfscgiserv test

}

case "$1" in

start)

start

;;

stop)

stop

;;

restart)

restart

;;

status)

status

;;

*)

echo $"Usage: $0 start|stop|restart|status"

exit 1

esac

exit 0

# chmod 755 /etc/init.d/mfsmaster

# chkconfig mfsmaster off

# service mfsmaster stop

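[Note: run on both the primary and secondary nodes.]

(2) Stop the DRBD service and unmount the device

# umount /data [Note: primary node only]

# chkconfig drbd off

# service drbd stop

[Note: run on both the primary and secondary nodes.]

(3) Point mfsmaster in the hosts file at the VIP

10.185.15.244 mfsmaster

[Note: applies to the primary node, the secondary node, the chunkservers, and the clients.]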
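Because this hosts change has to be repeated on every machine, a small helper can make it less error-prone. The following is a sketch under assumptions: set_mfsmaster_ip is a hypothetical function name, and it assumes that mfsmaster appears as a host name on its own line in the hosts file rather than sharing a line with other names that must be kept.

```shell
# Replace the mfsmaster entry in a hosts file with a new IP address.
# Usage: set_mfsmaster_ip /etc/hosts 10.185.15.244
set_mfsmaster_ip() {
    hosts_file=$1
    new_ip=$2
    tmp=$(mktemp) || return 1
    # Drop non-comment lines that list mfsmaster as a host name,
    # keep everything else, then append the new mapping.
    awk '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == "mfsmaster") next }
         { print }' "$hosts_file" > "$tmp" &&
    echo "$new_ip mfsmaster" >> "$tmp" &&
    cat "$tmp" > "$hosts_file"   # overwrite in place, keeping file permissions
    rc=$?
    rm -f "$tmp"
    return $rc
}
```

Trying it against a copy of /etc/hosts first is a cheap way to check the result before changing the real file.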

3. Corosync + Pacemaker high-availability configuration

http://parallel-ssh.googlecode.com/files/pssh-2.3.1.tar.gz

http://pyyaml.org/download/pyyaml/PyYAML-3.11.tar.gz

https://github.com/crmsh/crmsh/archive/1.2.6.tar.gz

(1) Base installation

# yum -y install corosync* pacemaker*

# tar xvzf pssh-2.3.1.tar.gz

# cd pssh-2.3.1

# python setup.py install

# tar xvzf PyYAML-3.11.tar.gz

# cd PyYAML-3.11

# python setup.py install

# tar xvzf crmsh-1.2.6.tar.gz

# cd crmsh-1.2.6

# ./autogen.sh

# ./configure --prefix=/usr

# make && make install

(2) Main configuration changes

# cd /etc/corosync

# vim corosync.conf

compatibility: whitetank

totem {

version: 2

secauth: on

threads: 16

interface {

ringnumber: 0

bindnetaddr: 10.185.15.192

mcastaddr: 226.94.1.1

mcastport: 5405

ttl: 1

}

}

logging {

fileline: off

to_stderr: no

to_logfile: yes

to_syslog: no

logfile: /var/log/cluster/corosync.log

debug: off

timestamp: on

logger_subsys {

subsys: AMF

debug: off

}

}

amf {

mode: disabled

}

service {

ver: 0

name: pacemaker

}

aisexec {

user: root

group: root

}

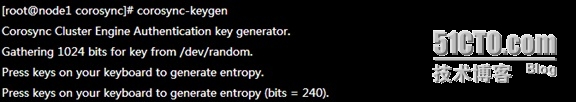

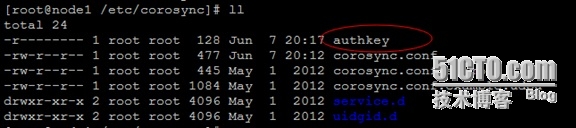
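The bindnetaddr value in corosync.conf is the network address of the interface corosync should bind to, not the address of a host. The following is a minimal sketch of deriving it, assuming the /26 prefix used later for the VIP (cidr_netmask=26) also applies to the node addresses. network_addr is a hypothetical helper name.

```shell
# Print the network address for an IPv4 address and prefix length.
# Usage: network_addr 10.185.15.241 26
network_addr() {
    ip=$1
    prefix=$2
    old_ifs=$IFS
    IFS=.
    set -- $ip            # split the dotted quad into $1..$4
    IFS=$old_ifs
    addr=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
    mask=$(( (0xFFFFFFFF << (32 - $prefix)) & 0xFFFFFFFF ))
    net=$(( addr & mask ))
    echo "$(( (net >> 24) & 255 )).$(( (net >> 16) & 255 )).$(( (net >> 8) & 255 )).$(( net & 255 ))"
}

network_addr 10.185.15.241 26   # prints 10.185.15.192
```

Both node addresses (10.185.15.241 and 10.185.15.242) fall in the same /26 network, which is why a single bindnetaddr works in the file copied to both nodes.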

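(3) Generate the authkey

corosync-keygen reads /dev/random to generate the key, so on a freshly installed system that has seen little activity there may not be enough entropy, and corosync-keygen may pause with a prompt asking for more random input.

In that case, log in at the local console and type rapidly on the keyboard until enough entropy has been gathered.

# corosync-keygen

# scp authkey corosync.conf root@node2:/etc/corosync/

[Note: corosync.conf and authkey must also be copied to the standby node.]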

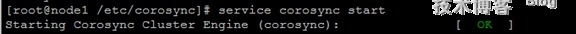

(4) Start the service

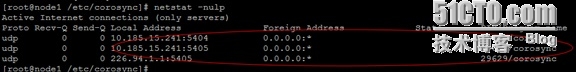

# service corosync start

# netstat -nulp

[Note: run on both nodes.]

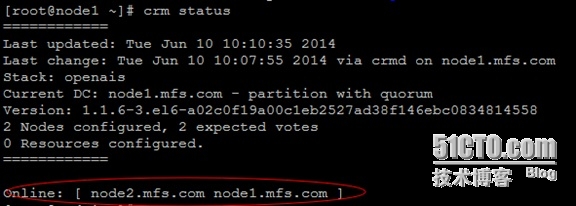

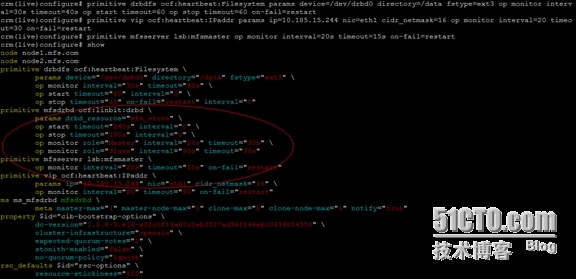

(5) Detailed resource management configuration

# crm status

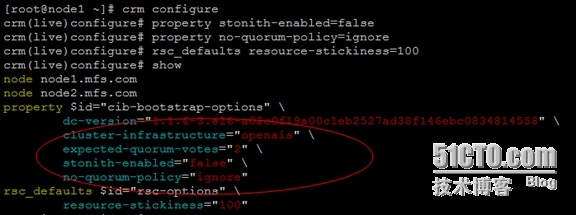

## Disable STONITH, ignore loss of quorum, and set resource stickiness

# crm configure

crm(live)configure# property stonith-enabled=false

crm(live)configure# property no-quorum-policy=ignore

crm(live)configure# rsc_defaults resource-stickiness=100

crm(live)configure# show

crm(live)configure# verify

crm(live)configure# commit

[Note: skip any of these settings that are already in place.]

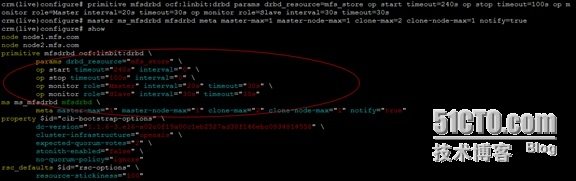

## Add the DRBD resource

crm(live)configure# primitive mfsdrbd ocf:linbit:drbd params drbd_resource=mfs_store op start timeout=240s op stop timeout=100s op monitor role=Master interval=20s timeout=30s op monitor role=Slave interval=30s timeout=30s

crm(live)configure# master ms_mfsdrbd mfsdrbd meta master-max=1 master-node-max=1 clone-max=2 clone-node-max=1 notify=true

crm(live)configure# show

crm(live)configure# verify

crm(live)configure# commit

crm(live)configure# cd

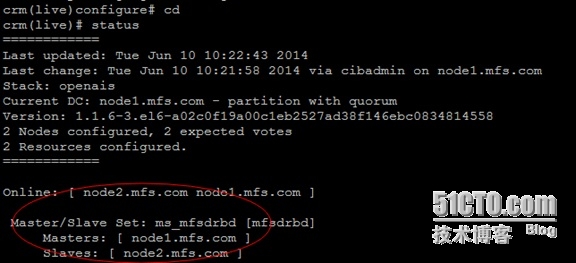

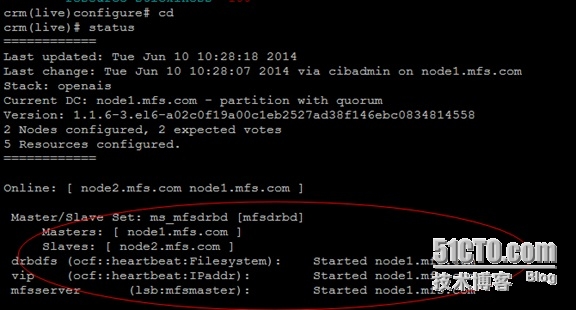

crm(live)# status

## Add the file system resource

crm(live)configure# primitive drbdfs ocf:heartbeat:Filesystem params device=/dev/drbd0 directory=/data fstype=ext3 op monitor interval=30s timeout=40s op start timeout=60 op stop timeout=60 on-fail=restart

## Add the VIP resource

crm(live)configure# primitive vip ocf:heartbeat:IPaddr params ip=10.185.15.244 nic=eth1 cidr_netmask=26 op monitor interval=20 timeout=30 on-fail=restart

## Define the MFS service

crm(live)configure# primitive mfsserver lsb:mfsmaster op monitor interval=20s timeout=15s on-fail=restart

crm(live)configure# show

crm(live)configure# verify

crm(live)configure# commit

## Define constraints (colocation and ordering)

crm(live)configure# colocation drbd_with_ms_mfsdrbd inf: drbdfs ms_mfsdrbd:Master

[Note: the file system mount follows the DRBD master.]

crm(live)configure# order drbd_after_ms_mfsdrbd mandatory: ms_mfsdrbd:promote drbdfs:start

[Note: drbdfs can start only on a node where DRBD has been promoted to Master.]

crm(live)configure# colocation mfsserver_with_drbdfs inf: mfsserver drbdfs

[Note: the MFS service follows the file system mount.]

crm(live)configure# order mfsserver_after_drbdfs mandatory: drbdfs:start mfsserver:start

[Note: the MFS service can start only after drbdfs has started.]

crm(live)configure# colocation vip_with_mfsserver inf: vip mfsserver

[Note: the VIP follows the MFS service.]

crm(live)configure# order vip_before_mfsserver mandatory: vip mfsserver

[Note: the MFS service can start only after the VIP is up.]

crm(live)configure# show

crm(live)configure# verify

crm(live)configure# commit

crm(live)configure# cd

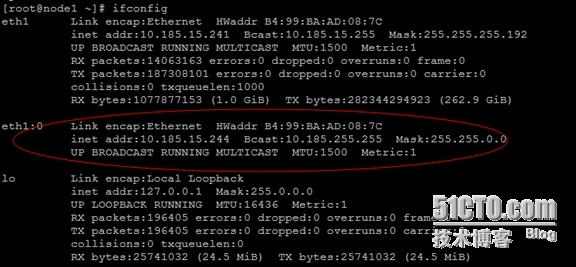

crm(live)# status

# ifconfig

=========================================================================================

V. HA Tests for the MFS Metadata Nodes

=========================================================================================

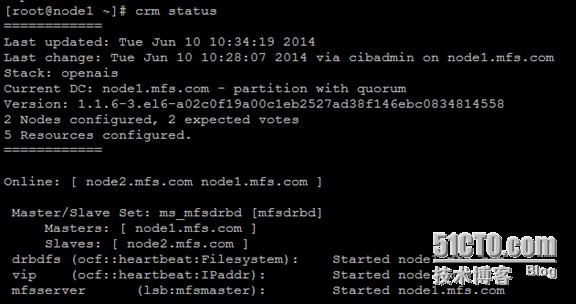

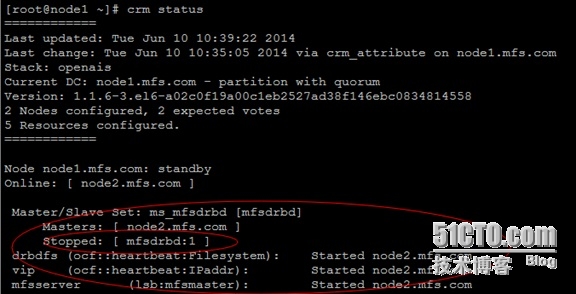

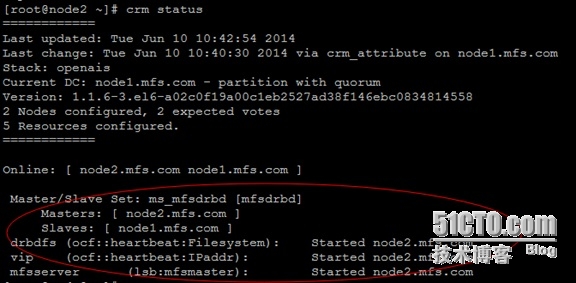

1. Simulate automatic primary/secondary failover

## Before the switch

# crm status

## After the switch

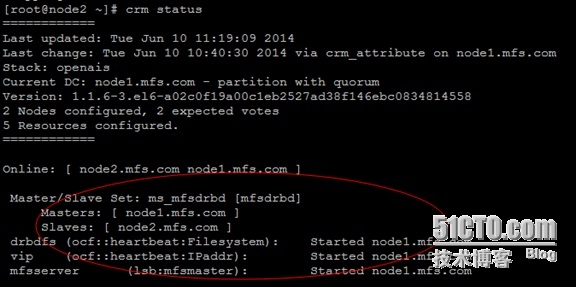

# crm node standby node1.mfs.com

# crm status

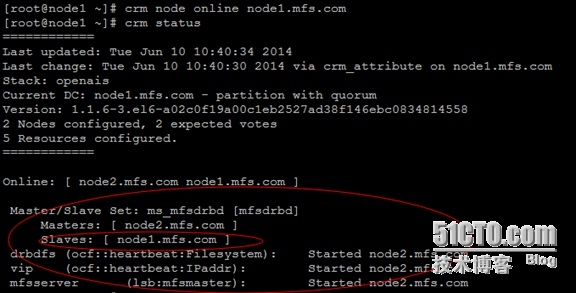

## Bring the standby node back online

# crm node online node1.mfs.com

# crm status

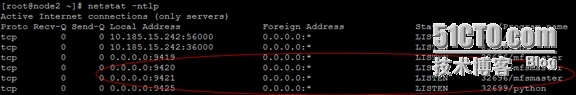

2. Simulate a failure of the MFS metadata service

## Before the failure

# netstat -ntlp

## Simulate the failure

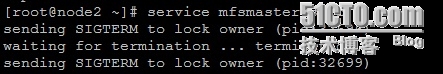

# service mfsmaster stop

## After the failure

# netstat -ntlp

# crm status

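Note:

After the service is killed, it is not restarted automatically. Because the node itself has not failed, the resources are not moved; by default, Pacemaker does not monitor any resource. So even if a resource is stopped, it stays where it is as long as the node is healthy. For a resource failure to trigger recovery or migration, a monitor operation must be defined.

Although the MFS resource definition includes a “monitor” operation, it had no effect in our setup and the service was not restarted automatically, so for now we work around this with a monitoring script run from cron.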

# vim /usr/local/src/check_mfsmaster.sh

#!/bin/sh

PATH=/usr/local/sbin:/usr/local/bin:/sbin:/bin:/usr/sbin:/usr/bin

VIP=`awk '/mfsmaster/ {print $1}' /etc/hosts`

[[ -z `/sbin/ip addr show eth1 | grep ${VIP}` ]] && exit 1

ONLINE_HOST=`/usr/sbin/crm status | awk '/mfsserver/ {print $4}'`

CURRENT_HOST=`/bin/hostname`

[[ "${ONLINE_HOST}" != "${CURRENT_HOST}" ]] && exit 1

[[ -z `netstat -ntlp | grep mfsmaster` ]] && /sbin/service mfsmaster start

# chmod +x /usr/local/src/check_mfsmaster.sh

# crontab -e

* * * * * sleep 10; /usr/local/src/check_mfsmaster.sh >/dev/null 2>&1

* * * * * sleep 20; /usr/local/src/check_mfsmaster.sh >/dev/null 2>&1

* * * * * sleep 30; /usr/local/src/check_mfsmaster.sh >/dev/null 2>&1

* * * * * sleep 40; /usr/local/src/check_mfsmaster.sh >/dev/null 2>&1

* * * * * sleep 50; /usr/local/src/check_mfsmaster.sh >/dev/null 2>&1

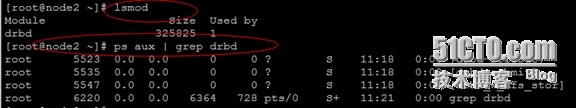
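A possible explanation for the monitor operation having no effect is offered here as an assumption, not a confirmed diagnosis. Pacemaker monitors lsb: resources through the init script's status action, which by LSB convention should exit 0 when the service is running and 3 when it is stopped. The /etc/init.d/mfsmaster script shown earlier ends with exit 0 for every action, so a stopped service would still be reported as running. The following is a minimal sketch of a status helper that follows the convention. lsb_status is a hypothetical name, and matching on the process name with pgrep is an illustrative choice.

```shell
# Return the LSB status code for a daemon identified by its process name:
# 0 = running, 3 = not running.
lsb_status() {
    if pgrep -x "$1" >/dev/null 2>&1; then
        return 0
    fi
    return 3
}

# Possible use inside the init script's "status)" branch:
#   lsb_status mfsmaster
#   exit $?
```

If this assumption holds, returning the right status code would let the monitor operation defined on the mfsserver resource detect a stopped master, and the cron workaround could then be retired after testing.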

3. Simulate a failure of the DRBD service

# service mfsmaster stop

# umount /data

# service drbd stop

# crm status

[Note: when running this test, temporarily remove the crontab entries added earlier to avoid unnecessary trouble.]

# lsmod

# ps aux | grep drbd

[Note: after the drbd service on node2 is stopped, it is brought back up automatically, and the primary role is automatically switched to node1.]

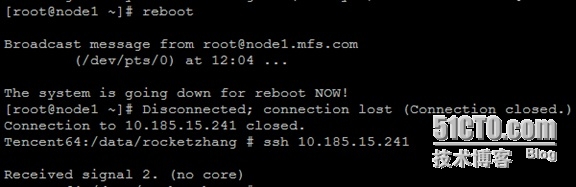

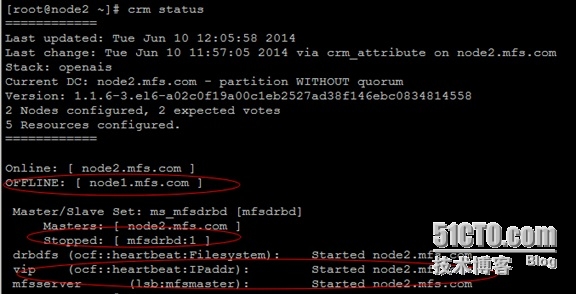

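4. Simulate a failure of the corosync service

node1 is currently the node serving requests. Either stopping the corosync service or rebooting the server works for this test; here we reboot the server.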

# reboot

# crm status

This article comes from the blog “人生理想在于坚持不懈”; please be sure to retain this attribution: http://sofar.blog.51cto.com/353572/1429162