首页 > 代码库 > keepalived+amoeba+mysql-mmm+mysql实现mysql读写分离及高可用

keepalived+amoeba+mysql-mmm+mysql实现mysql读写分离及高可用

最近尝试了一下mysql的读写分离和高可用的搭建。搭好之后体验了一下,效果还不错。这里跟大家分享一下。

1、首先介绍一下mysql-mmm这个工具是干嘛使的?

众所周知,mysql自身提供了AB复制。我们也可以很轻松的实现master-master双向复制,同时再为其中的一个master节点搭建一个slave库。这样就实现了master1与master2之间的双向复制,同时master1与slave1之间主从复制这样的架构。这样整个体系中就存在两个master,正常情况下只有一个master对外提供服务。如果对外提供服务的master意外宕机了,这时mysql本身并不具备failover切换的能力,这样尽管系统中还有一个正常的master节点,但应用仍不可用,这个正常的master尽管存在,但无疑是个摆设。mysql-mmm就是在这样的条件下诞生的。

Mysql-MMM是Master-Master Replication Manager for MySQL(mysql主主复制管理器)的简称,该项目来自于Google,旨在用来监控mysql主主复制和做失败转移。其原理是将真实数据库节点的IP映射为虚拟IP集,在这个虚拟的IP集中,有一个用于write的IP,多个用于read的IP,这个用于write的虚拟IP映射着数据库集群中的两台master的IP,依次来实现failover的切换,如果觉得不是很明白,没有关系,后边具体配置部分还会再做说明。

Mysql-MMM是一个开源的项目,官网:http://mysql-mmm.org

2、接着来说amoeba是个什么物件?

可能您听说过mysql-proxy,这个mysql官方维护的一个实现mysql读写分离的工具,曾经测试使用过,但没有在生产中使用。网上大家讨论比较多的是mysql-proxy的配置比较麻烦,其实不是的,但说mysql-proxy的配置的话是比较简单的,不比amoeba麻烦多少,主要是mysql-proxy自身不带有启动脚本,如果你想实现像mysql服务那样的启动方式就需要自己来编写服务脚本。这里实现mysql读写分离,使用淘宝开源出来的amoeba,amoeba是用java开发出来的一款软件,其配置文件为xml格式。选择amoeba是因为amoeba是淘宝在生产环境中使用过的,经过实践测试的,相比mysql-proxy来说,风险性要小一些。

3、最后来说keepalived

keeplived是用来实现服务的高可用的一款优秀的工具,需要说明的是keepalived会为代理的服务虚拟一个IP,用于外部访问,正常情况下,这个虚拟IP是绑定在master上的。master通过脚本来周期性判断服务是否正常运行,如果发现服务异常,就会停掉keepalived服务,这时原本绑定在master上的虚拟IP就会浮动到backup上,由于这个虚拟IP仍然存在,所以外部仍旧可以访问这个服务。

实验环境:

hadoop0.updb.com 192.168.0.100

hadoop1.updb.com 192.168.0.101

hadoop2.updb.com 192.168.0.102

hadoop3.updb.com 192.168.0.103

hadoop4.updb.com 192.168.0.104

hadoop5.updb.com 192.168.0.105

mysql 5.6

所有节点的系统均为centos,使用自带网络yum源,扩展epel源,保证你的各节点均能访问公网,因为我们的mysql-mmm和keepalived均使用epel源进行网络安装。

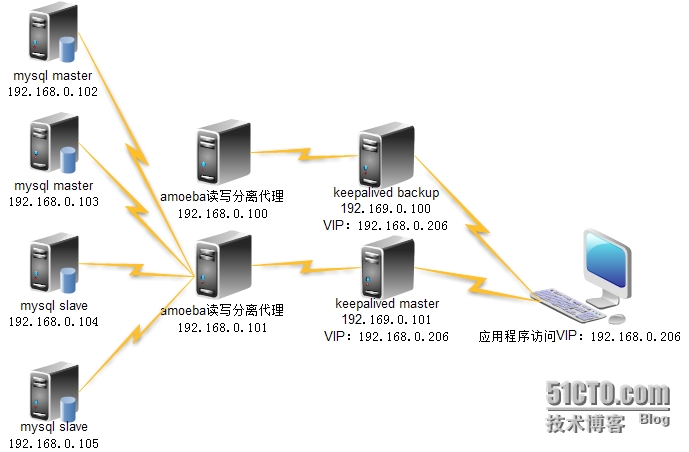

最终架构:

为了尽可能简洁而清楚的表达,上图中并没有显示mysql-mmm的部署规划。mysql-mmm分为monitor端和agent端,实验中在所有的mysql节点(192.168.0.102-192.168.0.105)上安装agent端,在192.168.0.101上安装monitor端。

好了,到这里相信你的心中已经有了丘壑,下面我们将一步一步来实现

1、搭建mysql集群,基本的mysql安装这里不再介绍(这里主主复制、主从复制的搭建是在全新安装的数据库的基础上,所以在设置同步参数时,binlog为mysql-bin.000001)

a、mysql 主主复制

首先停掉hadoop2、hadoop3上的mysql服务,修改配置文件,hadoop2配置如下:

[root@hadoop2 ~]# cat /etc/my.cnf [mysqld] server-id=1 log-bin=mysql-bin.log sync_binlog=1 log-slave-updates innodb_buffer_pool_size = 512M innodb_flush_log_at_trx_commit=1 sql_mode=STRICT_TRANS_TABLES,NO_AUTO_CREATE_USER,NO_ENGINE_SUBSTITUTION,NO_AUTO_VALUE_ON_ZERO lower_case_table_names=1 log_bin_trust_function_creators=1 character-set-server=utf8 [mysql] default-character-set=utf8

hadoop3配置文件,注意server-id不能重

[root@hadoop3 ~]# cat /etc/my.cnf [mysqld] server-id=2 log-bin=mysql-bin.log sync_binlog=1 log-slave-updates innodb_buffer_pool_size = 512M innodb_flush_log_at_trx_commit=1 sql_mode=STRICT_TRANS_TABLES,NO_AUTO_CREATE_USER,NO_ENGINE_SUBSTITUTION,NO_AUTO_VALUE_ON_ZERO lower_case_table_names=1 log_bin_trust_function_creators=1 character-set-server=utf8 [mysql] default-character-set=utf8

重启hadoop2、hadoop3上的mysql服务

hadoop2、hadoop3上都执行添加同步用户的操作

mysql> grant replication slave on *.* to ‘rep‘@‘192.168.0.%‘ identified by ‘123456‘;

hadoop3上设置同步参数

mysql> CHANGE MASTER TO > MASTER_HOST=‘192.168.0.102‘, > MASTER_PORT=3306, > MASTER_USER=‘rep‘, > MASTER_PASSWORD=‘123456‘, > MASTER_LOG_FILE=‘mysql-bin.000001‘, > MASTER_LOG_POS=107;

注意上面的MASTER_LOG_POS=107中的107是通过下面命令在其主库中查询的,hadoop3的主库为hadoop2

mysql> show master status; +------------------+----------+--------------+------------------+-------------------+ | File | Position | Binlog_Do_DB | Binlog_Ignore_DB | Executed_Gtid_Set | +------------------+----------+--------------+------------------+-------------------+ | mysql-bin.000001 | 107 | | | | +------------------+----------+--------------+------------------+-------------------+ 1 row in set (0.00 sec)

设置好hadoop3的主库同步参数后,启动其slave的角色

mysql> start slave; ## 查看是否同步成功 mysql> show slave status\G *************************** 1. row *************************** Slave_IO_State: Waiting for master to send event Master_Host: 192.168.0.102 Master_User: rep Master_Port: 3306 Connect_Retry: 60 Master_Log_File: mysql-bin.000001 Read_Master_Log_Pos: 107 Relay_Log_File: hadoop3-relay-bin.00002 Relay_Log_Pos: 283 Relay_Master_Log_File: mysql-bin.000001 Slave_IO_Running: Yes Slave_SQL_Running: Yes

如果看到上面的最后两项都为Yes,代表同步成功。

hadoop2上设置同步参数

mysql> CHANGE MASTER TO > MASTER_HOST=‘192.168.0.103‘, > MASTER_PORT=3306, > MASTER_USER=‘rep‘, > MASTER_PASSWORD=‘123456‘, > MASTER_LOG_FILE=‘mysql-bin.000001‘, > MASTER_LOG_POS=203;

同样需要到hadoop2的主库hadoop3上去查看二进制日志的位置来确定MASTER_LOG_POS的值

mysql> show master status; +------------------+----------+--------------+------------------+-------------------+ | File | Position | Binlog_Do_DB | Binlog_Ignore_DB | Executed_Gtid_Set | +------------------+----------+--------------+------------------+-------------------+ | mysql-bin.000001 | 203 | | | | +------------------+----------+--------------+------------------+-------------------+ 1 row in set (0.00 sec)

设置好hadoop2的主库同步参数后,启动其slave的角色

mysql> start slave; ## 查看是否同步成功 mysql> show slave status\G *************************** 1. row *************************** Slave_IO_State: Waiting for master to send event Master_Host: 192.168.0.103 Master_User: rep Master_Port: 3306 Connect_Retry: 60 Master_Log_File: mysql-bin.000001 Read_Master_Log_Pos: 203 Relay_Log_File: hadoop2-relay-bin.00002 Relay_Log_Pos: 190 Relay_Master_Log_File: mysql-bin.000001 Slave_IO_Running: Yes Slave_SQL_Running: Yes

如果看到上面的最后两项都为Yes,代表同步成功。至此,主主复制完成,限于篇幅,不再贴出测试结果。

b、为hadoop2搭建主从复制,slave节点为hadoop4、hadoop5

配置hadoop4的配置文件

[root@hadoop4 ~]# cat /etc/my.cnf [mysqld] server-id=3 innodb_buffer_pool_size = 512M innodb_flush_log_at_trx_commit=1 sql_mode=STRICT_TRANS_TABLES,NO_AUTO_CREATE_USER,NO_ENGINE_SUBSTITUTION,NO_AUTO_VALUE_ON_ZERO lower_case_table_names=1 log_bin_trust_function_creators=1 character-set-server=utf8 [mysql] default-character-set=utf8

hadoop4上设置同步参数

mysql> CHANGE MASTER TO > MASTER_HOST=‘192.168.0.102‘, > MASTER_PORT=3306, > MASTER_USER=‘rep‘, > MASTER_PASSWORD=‘123456‘, > MASTER_LOG_FILE=‘mysql-bin.000002‘, > MASTER_LOG_POS=234;

查询主库的MASTER_LOG_POS

mysql> show master status; +------------------+----------+--------------+------------------+-------------------+ | File | Position | Binlog_Do_DB | Binlog_Ignore_DB | Executed_Gtid_Set | +------------------+----------+--------------+------------------+-------------------+ | mysql-bin.000002 | 234 | | | | +------------------+----------+--------------+------------------+-------------------+ 1 row in set (0.00 sec)

设置好hadoop4的主库同步参数后,启动slave

mysql> start slave; ## 查看是否同步成功 mysql> show slave status\G *************************** 1. row *************************** Slave_IO_State: Waiting for master to send event Master_Host: 192.168.0.102 Master_User: rep Master_Port: 3306 Connect_Retry: 60 Master_Log_File: mysql-bin.000002 Read_Master_Log_Pos: 234 Relay_Log_File: hadoop4-relay-bin.00001 Relay_Log_Pos: 292 Relay_Master_Log_File: mysql-bin.000002 Slave_IO_Running: Yes Slave_SQL_Running: Yes

hadoop5的配置过程与hadoop4一样,需要注意的是hadoop5配置文件中的server-id=4,不能跟其他节点重复,这里不再贴测试结果。

2、搭建mysql-mmm

首先在mysql中添加mysql-mmm访问mysql的用户,由于已经配置了主主、主从复制,所以只需要在hadoop2上进行授权即可

mysql> GRANT REPLICATION CLIENT ON *.* TO ‘mmm_monitor‘@‘192.168.0.%‘ IDENTIFIED BY ‘123456‘; mysql> GRANT SUPER, REPLICATION CLIENT, PROCESS ON *.* TO ‘mmm_agent‘@‘192.168.0.%‘ IDENTIFIED BY ‘123456‘; mysql> flush privileges;

在hadoop1-hadoop5这5个节点上安装mysql-mmm

yum install mysql-mmm* -y

在hadoop1上配置mmm_common.conf,然后将该文件拷贝到其他节点的相同位置,覆盖默认的mmm-common.conf文件

[root@hadoop1 ~]# cat /etc/mysql-mmm/mmm_common.conf active_master_role writer <host default> cluster_interface eth0 pid_path /var/run/mysql-mmm/mmm_agentd.pid bin_path /usr/libexec/mysql-mmm/ replication_user rep ## mysql中复制用的同步用户 replication_password 123456 ## mysql中复制用的同步密码 agent_user mmm_agent ## mysql中mysql-mmm的agent用户 agent_password 123456 ## mysql中mysql-mmm的agent密码 </host> <host db1> ip 192.168.0.102 mode master peer db2 </host> <host db2> ip 192.168.0.103 mode master peer db1 </host> <host db3> ip 192.168.0.104 mode slave </host> <host db4> ip 192.168.0.105 mode slave </host> ## 定义虚拟IP192.168.0.201为wirte的IP,可以看到映射的是两个master <role writer> hosts db1, db2 ips 192.168.0.201 mode exclusive </role> ## 定义虚拟IP192.168.0.202-205为read的IP,同时使用负载均衡模式 <role reader> hosts db1, db2, db3, db4 ips 192.168.0.202, 192.168.0.203, 192.168.0.204, 192.168.0.205 mode balanced </role>

将hadoop1上修改好的mmm_common.conf拷贝到其他个节点上的相同位置覆盖默认的mmm_common.conf

hadoop1上修改monitor的配置文件mmm_mon.conf

[root@hadoop1 ~]# cat /etc/mysql-mmm/mmm_mon.conf include mmm_common.conf <monitor> ip 127.0.0.1 pid_path /var/run/mysql-mmm/mmm_mond.pid bin_path /usr/libexec/mysql-mmm status_path /var/lib/mysql-mmm/mmm_mond.status ## 这里的ip填写mysql集群各节点的真实IP ping_ips 192.168.0.102,192.168.0.103,192.168.0.104,192.168.0.105 auto_set_online 60 # The kill_host_bin does not exist by default, though the monitor will # throw a warning about it missing. See the section 5.10 "Kill Host # Functionality" in the PDF documentation. # # kill_host_bin /usr/libexec/mysql-mmm/monitor/kill_host # </monitor> <host default> monitor_user mmm_monitor ##mysql中设置的mmm_monitor用户 monitor_password 123456 ##mysql中设置的mmm_monitor密码 </host> debug 0 ##如果设为1,会在启动时打印DEBUG信息,用于排障

好,monitor的配置到此结束,紧接着来配置hadoop2-hadoop5上的agent,以hadoop2为例

[root@hadoop2 ~]# cat /etc/mysql-mmm/mmm_agent.conf include mmm_common.conf # The ‘this‘ variable refers to this server. Proper operation requires # that ‘this‘ server (db1 by default), as well as all other servers, have the # proper IP addresses set in mmm_common.conf. this db1

非常的简单,只要修改最后一行,跟mmm_common.conf中的配置要一致。hadoop3中改为db2,hadoop4中改为db3,hadoop5中改为db4。

hadoop1上启动monitor

/etc/init.d/mysql-mmm-monitor start

hadoop2、hadoop3、hadoop4、hadoop5中启动agent

/etc/init.d/mysql-mmm-agent start

hadoop1上查看状态

[root@hadoop1 ~]# mmm_control show db1(192.168.0.102) master/AWAITING_RECOVERY. Roles: db2(192.168.0.103) master/AWAITING_RECOVERY. Roles: db3(192.168.0.104) slave/AWAITING_RECOVERY. Roles: db4(192.168.0.105) slave/AWAITING_RECOVERY. Roles:

第一次使用,发现是状态是等待恢复,使用命令改变状态

[root@hadoop1 ~]# mmm_control set_online db1

再次查看状态

[root@hadoop1 ~]# mmm_control show db1(192.168.0.102) master/ONLINE. Roles: reader(192.168.0.205), writer(192.168.0.201) db2(192.168.0.103) master/ONLINE. Roles: reader(192.168.0.203) db3(192.168.0.104) slave/ONLINE. Roles: reader(192.168.0.204) db4(192.168.0.105) slave/ONLINE. Roles: reader(192.168.0.202)

现在停掉hadoop2上的mysql,查看虚拟IP的浮动

[root@hadoop2 ~]# /etc/init.d/mysql stop Shutting down MySQL.... SUCCESS!

hadoop1上查看状态,发现writer绑定到了hadoop3上

[root@hadoop1 ~]# mmm_control show db1(192.168.0.102) master/HARD_OFFLINE. Roles: db2(192.168.0.103) master/ONLINE. Roles: reader(192.168.0.203), writer(192.168.0.201) db3(192.168.0.104) slave/ONLINE. Roles: reader(192.168.0.204), reader(192.168.0.205) db4(192.168.0.105) slave/ONLINE. Roles: reader(192.168.0.202)

hadoop4中查看原本与hadoop2做同步的slave现在的master是哪个?

[root@hadoop4 ~]# mysql -uroot -p -e "show slave status\G" Enter password: *************************** 1. row *************************** Slave_IO_State: Waiting for master to send event Master_Host: 192.168.0.103 Master_User: rep Master_Port: 3306 Connect_Retry: 60 Master_Log_File: mysql-bin.000004 Read_Master_Log_Pos: 120 Relay_Log_File: hadoop4-relay-bin.000002 Relay_Log_Pos: 283 Relay_Master_Log_File: mysql-bin.000004 Slave_IO_Running: Yes Slave_SQL_Running: Yes

发现原本与hadoop2同步的hadoop4和hadoop5已经自动的与hadoop3同步,是不是觉得很神奇,哈哈,我也被mysql-mmm的牛叉所震精!!!!

现在我们重启hadoop2的mysql,看hadoop1上的状态事都会改变

[root@hadoop2 ~]# /etc/init.d/mysql start Starting MySQL.. SUCCESS!

hadoop1上查看状态,hadoop2变为待恢复状态

[root@hadoop1 ~]# mmm_control show db1(192.168.0.102) master/AWAITING_RECOVERY. Roles: db2(192.168.0.103) master/ONLINE. Roles: reader(192.168.0.203), writer(192.168.0.201) db3(192.168.0.104) slave/ONLINE. Roles: reader(192.168.0.204), reader(192.168.0.205) db4(192.168.0.105) slave/ONLINE. Roles: reader(192.168.0.202)

隔一小段时间,再次查看,发现其中的一个read 虚拟IP已经浮动到hadoop2上,此时writer仍位于hadoop3上,当然slave此时会从hadoop3来同步数据。直到hadoop3上的mysql服务不可用之后,才会重新切换回与hadoop2同步。

[root@hadoop1 ~]# mmm_control show db1(192.168.0.102) master/ONLINE. Roles: reader(192.168.0.204) db2(192.168.0.103) master/ONLINE. Roles: reader(192.168.0.203), writer(192.168.0.201) db3(192.168.0.104) slave/ONLINE. Roles: reader(192.168.0.205) db4(192.168.0.105) slave/ONLINE. Roles: reader(192.168.0.202)

3、amoeba实现读写分离

在hadoop0、haoop1上安装配置amoeba

首先在hadoop0、hadoop1上安装配置jdk和mysql客户端,实验中使用jdk1.7

## 安装mysql客户端 yum install mysql -y ## 安装jdk [root@hadoop1 ~]# rpm -ivh jdk-7u60-linux-x64.rpm ## 验证jdk环境是否安装成功 [root@hadoop1 ~]# java -version java version "1.7.0_45" OpenJDK Runtime Environment (rhel-2.4.3.3.el6-x86_64 u45-b15) OpenJDK 64-Bit Server VM (build 24.45-b08, mixed mode) ## 配置环境变量 [root@hadoop1 ~]# cat .bash_profile # .bash_profile # Get the aliases and functions if [ -f ~/.bashrc ]; then . ~/.bashrc fi # User specific environment and startup programs PATH=$PATH:$HOME/bin export JAVA_HOME=/usr/java/jdk1.7.0_60 export JRE_HOME=/usr/java/jdk1.7.0_60/jre export CLASSPATH=./:/usr/java/jdk1.7.0_60/lib:/usr/java/jdk1.7.0_60/jre/lib export PATH ## 使环境变量生效 [root@hadoop1 ~]# . .bash_profile

hadoop1上安装amoeba

[root@hadoop1 pub]# tar xf amoeba-mysql-binary-2.2.0.tar.gz -C /opt/ [root@hadoop1 pub]# cd /opt/amoeba-mysql/

将amoeba的bin命令添加到环境变量

[root@hadoop1 ~]# cat .bash_profile # .bash_profile # Get the aliases and functions if [ -f ~/.bashrc ]; then . ~/.bashrc fi # User specific environment and startup programs PATH=$PATH:$HOME/bin:/opt/amoeba-mysql/bin export JAVA_HOME=/usr/java/jdk1.7.0_60 export JRE_HOME=/usr/java/jdk1.7.0_60/jre export CLASSPATH=./:/usr/java/jdk1.7.0_60/lib:/usr/java/jdk1.7.0_60/jre/lib export PATH [root@hadoop1 ~]# . .bash_profile

尝试启动amoeba,验证是否安装成功,若出现如下信息,则表明安装成功

[root@hadoop1 ~]# amoeba start log4j:WARN log4j config load completed from file:/opt/amoeba-mysql/conf/log4j.xml 2014-10-27 00:11:22,040 INFO context.MysqlRuntimeContext - Amoeba for Mysql current versoin=5.1.45-mysql-amoeba-proxy-2.2.0 log4j:WARN ip access config load completed from file:/opt/amoeba-mysql/conf/access_list.conf 2014-10-27 00:11:22,788 INFO net.ServerableConnectionManager - Amoeba Monitor Server listening on /0.0.0.0:9066. 2014-10-27 00:11:22,788 INFO net.ServerableConnectionManager - Amoeba for Mysql listening on /0.0.0.0:3306.

如出现下面的错误:

[root@hadoop1 ~]# amoeba start amoeba startThe stack size specified is too small, Specify at least 160k Error: Could not create the Java Virtual Machine. Error: A fatal exception has occurred. Program will exit.

按照下面的方法解决

[root@hadoop1 ~]# cd /opt/amoeba-mysql/bin/ [root@hadoop1 bin]# vi amoeba ## 将DEFAULT_OPTS="-server -Xms256m -Xmx256m -Xss256k"这行中的数值调大一些,我这里全部设置成256

接着开始配置amoeba

[root@hadoop1 ~]# cd /opt/amoeba-mysql/

[root@hadoop1 amoeba-mysql]# vi conf/dbServers.xml

<?xml version="1.0" encoding="gbk"?>

<!DOCTYPE amoeba:dbServers SYSTEM "dbserver.dtd">

<amoeba:dbServers xmlns:amoeba="http://amoeba.meidusa.com/">

<!--

Each dbServer needs to be configured into a Pool,

If you need to configure multiple dbServer with load balancing that can be simplified by the following configuration:

add attribute with name virtual = "true" in dbServer, but the configuration does not allow the element with name factoryConfig

such as ‘multiPool‘ dbServer

-->

<dbServer name="abstractServer" abstractive="true">

<factoryConfig class="com.meidusa.amoeba.mysql.net.MysqlServerConnectionFactory">

<property name="manager">${defaultManager}</property>

<property name="sendBufferSize">64</property>

<property name="receiveBufferSize">128</property>

<!-- mysql port -->

<property name="port">3306</property>

<!-- mysql schema -->

<!-- 实验中使用的是javashop库 -->

<property name="schema">test</property>

<!-- mysql user -->

<!-- kora用户名是用来远程连接数据库javashop用的,

需要提前在数据库中对该用户授权,在hadoop2上

授权就可以了,其他节点就会同步授权。

-->

<property name="user">kora</property>

<!-- 特别要注意这个地方,默认情况下这行是被注释掉的,会导致连接失败 -->

<property name="password">123456</property>

</factoryConfig>

<poolConfig class="com.meidusa.amoeba.net.poolable.PoolableObjectPool">

<property name="maxActive">500</property>

<property name="maxIdle">500</property>

<property name="minIdle">10</property>

<property name="minEvictableIdleTimeMillis">600000</property>

<property name="timeBetweenEvictionRunsMillis">600000</property>

<property name="testOnBorrow">true</property>

<property name="testOnReturn">true</property>

<property name="testWhileIdle">true</property>

</poolConfig>

</dbServer>

<!-- 这里的master会对外承担写入的功能 -->

<dbServer name="master" parent="abstractServer">

<factoryConfig>

<!-- 这里的IP对应mysql-mmm设置的writer的虚拟ip -->

<property name="ipAddress">192.168.0.201</property>

</factoryConfig>

</dbServer>

<dbServer name="slave1" parent="abstractServer">

<factoryConfig>

<!-- 这里的IP对应mysql-mmm设置的reader的虚拟ip中的其中一个 -->

<property name="ipAddress">192.168.0.202</property>

</factoryConfig>

</dbServer>

<dbServer name="slave2" parent="abstractServer">

<factoryConfig>

<!-- 这里的IP对应mysql-mmm设置的reader的虚拟ip中的其中一个 -->

<property name="ipAddress">192.168.0.203</property>

</factoryConfig>

</dbServer>

<dbServer name="slave3" parent="abstractServer">

<factoryConfig>

<!-- 这里的IP对应mysql-mmm设置的reader的虚拟ip中的其中一个 -->

<property name="ipAddress">192.168.0.204</property>

</factoryConfig>

</dbServer>

<dbServer name="slave4" parent="abstractServer">

<factoryConfig>

<!-- 这里的IP对应mysql-mmm设置的reader的虚拟ip中的其中一个 -->

<property name="ipAddress">192.168.0.205</property>

</factoryConfig>

</dbServer>

<!-- 这里将上面设置的slave添加到一个虚拟的组virtualslave里面,对外提供读的功能,同时实现负载均衡 -->

<dbServer name="virtualslave" virtual="true">

<poolConfig class="com.meidusa.amoeba.server.MultipleServerPool">

<!-- Load balancing strategy: 1=ROUNDROBIN , 2=WEIGHTBASED , 3=HA-->

<property name="loadbalance">1</property>

<!-- Separated by commas,such as: server1,server2,server1 -->

<property name="poolNames">slave1,slave2,slave3,slave4</property>

</poolConfig>

</dbServer>

</amoeba:dbServers>

[root@hadoop1 amoeba-mysql]# vi conf/amoeba.xml

<?xml version="1.0" encoding="gbk"?>

<!DOCTYPE amoeba:configuration SYSTEM "amoeba.dtd">

<amoeba:configuration xmlns:amoeba="http://amoeba.meidusa.com/">

<proxy>

<!-- service class must implements com.meidusa.amoeba.service.Service -->

<service name="Amoeba for Mysql" class="com.meidusa.amoeba.net.ServerableConnectionManager">

<!-- 为了方便应用程序访问,将端口改为3306 -->

<property name="port">3306</property>

<!-- 这里监听需要在0.0.0.0 -->

<property name="ipAddress">0.0.0.0</property>

<property name="manager">${clientConnectioneManager}</property>

<property name="connectionFactory">

<bean class="com.meidusa.amoeba.mysql.net.MysqlClientConnectionFactory">

<property name="sendBufferSize">128</property>

<property name="receiveBufferSize">64</property>

</bean>

</property>

<property name="authenticator">

<bean class="com.meidusa.amoeba.mysql.server.MysqlClientAuthenticator">

<!-- 这里的kora用户跟数据库的用户无关,是应用程序使用amoeba做代理

连接数据库时的用户,是amoeba的用户 -->

<property name="user">kora</property>

<property name="password">123456</property>

<property name="filter">

<bean class="com.meidusa.amoeba.server.IPAccessController">

<property name="ipFile">${amoeba.home}/conf/access_list.conf</property>

</bean>

</property>

</bean>

</property>

</service>

<!-- server class must implements com.meidusa.amoeba.service.Service -->

<service name="Amoeba Monitor Server" class="com.meidusa.amoeba.monitor.MonitorServer">

<!-- port -->

<property name="port">9066</property>

<!-- 这里也将监听在0.0.0.0 -->

<property name="ipAddress">0.0.0.0</property>

<property name="daemon">true</property>

<property name="manager">${clientConnectioneManager}</property>

<property name="connectionFactory">

<bean class="com.meidusa.amoeba.monitor.net.MonitorClientConnectionFactory"></bean>

</property>

</service>

<runtime class="com.meidusa.amoeba.mysql.context.MysqlRuntimeContext">

<!-- proxy server net IO Read thread size -->

<property name="readThreadPoolSize">20</property>

<!-- proxy server client process thread size -->

<property name="clientSideThreadPoolSize">30</property>

<!-- mysql server data packet process thread size -->

<property name="serverSideThreadPoolSize">30</property>

<!-- per connection cache prepared statement size -->

<property name="statementCacheSize">500</property>

<!-- query timeout( default: 60 second , TimeUnit:second) -->

<property name="queryTimeout">60</property>

</runtime>

</proxy>

<!--

Each ConnectionManager will start as thread

manager responsible for the Connection IO read , Death Detection

-->

<connectionManagerList>

<connectionManager name="clientConnectioneManager" class="com.meidusa.amoeba.net.MultiConnectionManagerWrapper">

<property name="subManagerClassName">com.meidusa.amoeba.net.ConnectionManager</property>

<property name="processors">5</property>

</connectionManager>

<connectionManager name="defaultManager" class="com.meidusa.amoeba.net.MultiConnectionManagerWrapper">

<property name="subManagerClassName">com.meidusa.amoeba.net.AuthingableConnectionManager</property>

<property name="processors">5</property>

</connectionManager>

</connectionManagerList>

<!-- default using file loader -->

<dbServerLoader class="com.meidusa.amoeba.context.DBServerConfigFileLoader">

<property name="configFile">${amoeba.home}/conf/dbServers.xml</property>

</dbServerLoader>

<queryRouter class="com.meidusa.amoeba.mysql.parser.MysqlQueryRouter">

<property name="ruleLoader">

<bean class="com.meidusa.amoeba.route.TableRuleFileLoader">

<property name="ruleFile">${amoeba.home}/conf/rule.xml</property>

<property name="functionFile">${amoeba.home}/conf/ruleFunctionMap.xml</property>

</bean>

</property>

<property name="sqlFunctionFile">${amoeba.home}/conf/functionMap.xml</property>

<property name="LRUMapSize">1500</property>

<!-- 这里设置默认的连接,一般设置为writer对应的dbserver -->

<property name="defaultPool">master</property>

<!-- 这里设置谁作为writer,对应dbServer.xml中的master -->

<property name="writePool">master</property>

<!-- 这里设置谁作为reader,对应dbServer.xml中的virtualslave组 -->

<property name="readPool">virtualslave</property>

<property name="needParse">true</property>

</queryRouter>

</amoeba:configuration>将hadoop1上配置好的amoeba的安装目录scp到hadoop0上,然后配置环境变量就OK了

[root@hadoop1 ~]# scp -r /opt/amoeba-mysql/ hadoop0:/opt/ root@hadoop0‘s password:

配置环境变量

[root@hadoop0 ~]# cat .bash_profile # .bash_profile # Get the aliases and functions if [ -f ~/.bashrc ]; then . ~/.bashrc fi # User specific environment and startup programs PATH=$PATH:$HOME/bin:/opt/amoeba-mysql/bin export JAVA_HOME=/usr/java/jdk1.7.0_60 export JRE_HOME=/usr/java/jdk1.7.0_60/jre export CLASSPATH=./:/usr/java/jdk1.7.0_60/lib:/usr/java/jdk1.7.0_60/jre/lib export PATH

在hadoop0、hadoop1上启动amoeba

[root@hadoop1 ~]# amoeba start & [1] 17345 [root@hadoop1 ~]# log4j:WARN log4j config load completed from file:/opt/amoeba-mysql/conf/log4j.xml 2014-10-27 00:48:22,983 INFO context.MysqlRuntimeContext - Amoeba for Mysql current versoin=5.1.45-mysql-amoeba-proxy-2.2.0 log4j:WARN ip access config load completed from file:/opt/amoeba-mysql/conf/access_list.conf 2014-10-27 00:48:23,627 INFO net.ServerableConnectionManager - Amoeba Monitor Server listening on /0.0.0.0:9066. 2014-10-27 00:48:23,627 INFO net.ServerableConnectionManager - Amoeba for Mysql listening on /0.0.0.0:3306.

使用amoeba连接后端的mysql

[root@hadoop1 ~]# mysql -ukora -p -h127.0.0.1 Enter password: Welcome to the MySQL monitor. Commands end with ; or \g. Your MySQL connection id is 926449292 Server version: 5.1.45-mysql-amoeba-proxy-2.2.0 MySQL Enterprise Server - Advanced Edition (Commercial) Copyright (c) 2000, 2014, Oracle and/or its affiliates. All rights reserved. Oracle is a registered trademark of Oracle Corporation and/or its affiliates. Other names may be trademarks of their respective owners. Type ‘help;‘ or ‘\h‘ for help. Type ‘\c‘ to clear the current input statement. mysql> show databases; +--------------------+ | Database | +--------------------+ | information_schema | | javashop | +--------------------+ 2 rows in set (0.00 sec)

可见,amoeba工作正常,需要注意的是这里的kora用户是amoeba的用户。-h参数后面的IP为127.0.0.1,说明连接的是本机的mysql数据库,但是本机我们是没有安装mysql数据库的,只是安装了mysql的客户端,即mysql命令,但是仍然连接到了后端的mysql中去,是不是感觉很不可思议!哈哈,过程是这样的,首先用户使用mysql -u kora -p -h 127.0.0.1命令请求连接时(没有指定端口默认为3306),由于amoeba监听在0.0.0.0:3306,当然会接受这条命令的请求,于是开始和amoeba.xml文件中的登录口令做校验,发现用户名和密码与amoeba的用户名密码完全吻合,好,执行代理,代理中设置了writer的master以及reader的virtualslave,通过负载均衡,可能这时连接请求被负载到slave1上去了,slave1在dbServer.xml中设置的虚拟IP为192.168.0.202,slave1继承了abstractServer中的端口3306,用户名kora,schema javashop,密码123456,mysql客户端发出的连接请求经过amoeba代理后变成了mysql -u kora -p123456 -h 192.168.0.202 -D javashop -P 3306。由于虚拟IP192.168.0.202是在mysql-mmm中映射出来的,很自然的这条命令进入mysql-mmm中,根据配置文件的映射关系,最终转化为了mysql -u kora -p123456 -h 192.168.0.103 -D javashop -P 3306,最终成功连接上后端的数据库。

再来捋一下,mysql客户端的命令首先经过amoeba代理,然后再经过mysql-mmm代理,最终到达后端的mysql集群,这个过程其实就是真实IP->虚拟IP->真实IP的转换,只不过中间还有负载均衡。

验证amoeba实现了读写分离功能的测试办法,这里只说思路,亲测可用,这个不必担心,首先在hadoop2上创建一张表,然后插入一条数据,这时4个节点中都会存在一条记录。这时停掉hadoop4、hadoop5与hadoop2的同步,在hadoop4、hadoop5中各插入一条不一样的记录。然后打开hadoop4、hadoop5与hadoop2的同步,最终的结果是hadoop2、hadoop3中有一条一样的数据,hadoop4、hadoop5中除了含有一条与hadoop2、hadoop3一样的数据外,还有一条各自的数据,这时用amoeba连入,不停的执行select * from tab;就能看到数据的条数在不断的变化,这就说明负载均衡和读写分离已经实现。

4、keepalived实现amoeba的HA

hadoop0、hadoop1上安装keepalived

yum install keepalived -y

hadoop1作为keepalived的master,配置如下:

[root@hadoop0 ~]# vi /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id LVS_DEVEL

}

vrrp_script check_services {

script "/opt/check_services.sh"

interval 2

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.0.206

}

track_script {

check_services

}

} check_services.sh脚本,当amoeba服务挂掉后,stop keepalived,让虚拟IP浮动到backup上

#!/bin/bash #author: kora #date: 2014-10-21 #description: check service such as amoeba # MYSQL_CLIENT=/usr/bin/mysql MYSQL_HOST=127.0.0.1 MYSQL_USER=kora MYSQL_PORT=3306 MYSQL_PASSWD=upbjsxt CHECK_TIME=3 MYSQL_OK=1 NOW=`date` STATUS=‘OK!‘ AMOEBA_STATUS=`ps -ef |grep -v "grep"|grep amoeba|wc -l` pidfile=/var/lock/subsys/`basename $0`.pid if [ -f "$pidfile" ] && [ -e /proc/`cat $pidfile` ] then exit 1 fi trap `rm -rf $pidfile; exit 0` 1 2 3 15 echo $$ > "$pidfile" if [ "$AMOEBA_STATUS" -eq 0 ] then amoeba start > /dev/null & sleep 5 fi while [ "$CHECK_TIME" -ne 0 ] do let "CHECK_TIME-=1" "$MYSQL_CLIENT" -h "$MYSQL_HOST" -u "$MYSQL_USER" -p"$MYSQL_PASSWD" -P "$MYSQL_PORT" -e "show databases;" > /dev/null 2>&1 if [ "$?" -eq 0 ] then MYSQL_OK=1 CHECK_TIME=0 else MYSQL_OK=0 fi done if [ "$MYSQL_OK" -eq 0 ] then status=‘fail!‘ /etc/init.d/keepalived stop fi echo "$NOW:$STATUS" >> ~/keepalived.log exit 0

hadoop0作为keepalived的backup,配置如下:

[root@hadoop0 ~]# vi /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id LVS_DEVEL

}

vrrp_instance VI_1 {

state BACKUP

interface eth0

virtual_router_id 51

priority 80

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.0.206

}

}由master和backup的配置文件看出,keepalived为amoeba配置的虚拟IP为192.168.0.206,在hadoop0、hadoop1上启动keepalived

/etc/init.d/keepalived start

在hadoop1上查看虚拟IP是否绑定在了网卡上

[root@hadoop1 ~]# ip add 1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00 inet 127.0.0.1/8 scope host lo inet6 ::1/128 scope host valid_lft forever preferred_lft forever 2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000 link/ether 00:0c:29:a8:50:e2 brd ff:ff:ff:ff:ff:ff inet 192.168.0.101/24 brd 192.168.0.255 scope global eth0 inet 192.168.0.206/32 scope global eth0 inet6 fe80::20c:29ff:fea8:50e2/64 scope link valid_lft forever preferred_lft forever

192.168.0.206已经绑定上来了,使用mysql客户端连接这个虚拟IP

[root@hadoop1 ~]# mysql -u kora -p -h 192.168.0.206 Enter password: Welcome to the MySQL monitor. Commands end with ; or \g. Your MySQL connection id is 926449292 Server version: 5.1.45-mysql-amoeba-proxy-2.2.0 MySQL Enterprise Server - Advanced Edition (Commercial) Copyright (c) 2000, 2014, Oracle and/or its affiliates. All rights reserved. Oracle is a registered trademark of Oracle Corporation and/or its affiliates. Other names may be trademarks of their respective owners. Type ‘help;‘ or ‘\h‘ for help. Type ‘\c‘ to clear the current input statement. mysql> show databases; +--------------------+ | Database | +--------------------+ | information_schema | | javashop | +--------------------+ 2 rows in set (0.01 sec)

说明keepalived已经成功代理了amoeba,验证当hadoop1上的amoeba服务挂掉后,虚拟IP是否会自动转移,amoeba是否仍能对外服务

hadoop1中的amoeba服务停掉后,虚拟ip不见了

[root@hadoop1 ~]# amoeba stop amoeba server shutting down with port=9066 [root@hadoop1 ~]# 2014-10-27 02:02:36,902 WARN net.ServerableConnectionManager - Amoeba for Mysql shutdown completed! 2014-10-27 02:02:36,903 WARN net.ServerableConnectionManager - Amoeba Monitor Server shutdown completed! [1]+ Done amoeba start [root@hadoop1 ~]# ps -ef |grep amoeba root 31419 3847 0 02:02 pts/0 00:00:00 grep amoeba [root@hadoop1 ~]# ip add 1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00 inet 127.0.0.1/8 scope host lo inet6 ::1/128 scope host valid_lft forever preferred_lft forever 2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000 link/ether 00:0c:29:a8:50:e2 brd ff:ff:ff:ff:ff:ff inet 192.168.0.101/24 brd 192.168.0.255 scope global eth0 inet6 fe80::20c:29ff:fea8:50e2/64 scope link valid_lft forever preferred_lft forever

hadoop0上出现了虚拟IP 192.168.0.206

[root@hadoop0 ~]# ip add 1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00 inet 127.0.0.1/8 scope host lo inet6 ::1/128 scope host valid_lft forever preferred_lft forever 2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000 link/ether 00:0c:29:74:bc:ae brd ff:ff:ff:ff:ff:ff inet 192.168.0.100/24 brd 192.168.0.255 scope global eth0 inet 192.168.0.206/32 scope global eth0 inet6 fe80::20c:29ff:fe74:bcae/64 scope link valid_lft forever preferred_lft forever

hadoop1上验证amoeba是否仍能对外服务

[root@hadoop1 ~]# ip add 1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00 inet 127.0.0.1/8 scope host lo inet6 ::1/128 scope host valid_lft forever preferred_lft forever 2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000 link/ether 00:0c:29:a8:50:e2 brd ff:ff:ff:ff:ff:ff inet 192.168.0.101/24 brd 192.168.0.255 scope global eth0 inet6 fe80::20c:29ff:fea8:50e2/64 scope link valid_lft forever preferred_lft forever [root@hadoop1 ~]# mysql -u kora -p -h 192.168.0.206 Enter password: Welcome to the MySQL monitor. Commands end with ; or \g. Your MySQL connection id is 1877392091 Server version: 5.1.45-mysql-amoeba-proxy-2.2.0 MySQL Enterprise Server - Advanced Edition (Commercial) Copyright (c) 2000, 2014, Oracle and/or its affiliates. All rights reserved. Oracle is a registered trademark of Oracle Corporation and/or its affiliates. Other names may be trademarks of their respective owners. Type ‘help;‘ or ‘\h‘ for help. Type ‘\c‘ to clear the current input statement. mysql> show databases; +--------------------+ | Database | +--------------------+ | information_schema | | javashop | +--------------------+ 2 rows in set (0.02 sec)

ok,没有问题,在hadoop1上的amoeba服务停掉之后,应用程序不需要做任何改动,仍可以访问后台数据,至此keepalived已经实现了amoeba的HA。

写这篇博文,虽累但还是很开心,记录下了自己成长的过程,也告诉自己,要努力让坚持成为一种习惯,不早了,洗洗睡了,晚安!

本文出自 “不逼自己就不自知有多牛逼” 博客,转载请与作者联系!

keepalived+amoeba+mysql-mmm+mysql实现mysql读写分离及高可用